Autoencoder-based Semantic Novelty Detection: Towards Dependable AI-based Systems


Aug 25, 2021

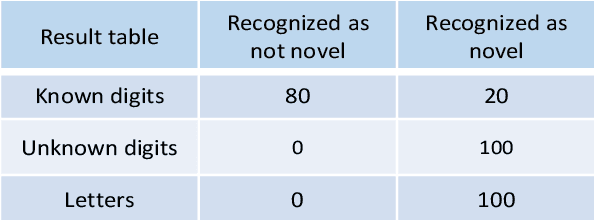

Many autonomous systems, such as driverless taxis, perform safety-critical functions. These systems employ artificial intelligence (AI) techniques, particularly for environment perception. Engineers cannot completely test or formally verify AI-based autonomous systems, and the accuracy of such systems depends on the quality of their training data. Thus, novelty detection - identifying data that differ in some respect from the data used for training - becomes a safety measure for system development and operation. In this paper, we propose a new architecture for autoencoder-based semantic novelty detection with two innovations: architectural guidelines for a semantic autoencoder topology and a semantic error calculation as the novelty criterion. We demonstrate that this semantic novelty detection outperforms autoencoder-based novelty detection approaches known from the literature by minimizing false negatives.
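To make the general idea concrete, the following is a minimal sketch of plain reconstruction-error novelty detection, the kind of baseline the abstract compares against. It is not the semantic architecture or semantic error calculation proposed in the paper, whose details the abstract does not give. The sketch assumes a one-unit linear autoencoder with tied weights (equivalent to projecting onto the first principal component), synthetic 2-D training data, and a threshold set to the largest training error; all function names and parameters are hypothetical.

```python
import math
import random


def fit_linear_autoencoder(train, iters=100):
    """Fit a 1-unit linear autoencoder with tied weights.

    Assumption: this is equivalent to finding the first principal
    component, computed here by power iteration on the covariance.
    """
    n, d = len(train), len(train[0])
    mean = [sum(row[j] for row in train) / n for j in range(d)]
    centered = [[row[j] - mean[j] for j in range(d)] for row in train]
    w = [1.0 / math.sqrt(d)] * d  # initial unit-length weight vector
    for _ in range(iters):
        # One power-iteration step: w <- X^T (X w), then normalize.
        proj = [sum(r[j] * w[j] for j in range(d)) for r in centered]
        new_w = [sum(proj[i] * centered[i][j] for i in range(n)) for j in range(d)]
        norm = math.sqrt(sum(v * v for v in new_w))
        w = [v / norm for v in new_w]
    return mean, w


def reconstruction_error(x, mean, w):
    """Squared distance between x and its encode/decode reconstruction."""
    d = len(x)
    code = sum((x[j] - mean[j]) * w[j] for j in range(d))  # encoder
    recon = [mean[j] + code * w[j] for j in range(d)]      # decoder
    return sum((x[j] - recon[j]) ** 2 for j in range(d))


# Hypothetical training data: points close to the line y = 2x.
random.seed(0)
train = []
for _ in range(200):
    x0 = random.uniform(-1.0, 1.0)
    train.append([x0, 2.0 * x0 + random.gauss(0.0, 0.05)])

mean, w = fit_linear_autoencoder(train)

# Assumed novelty criterion: error above the largest training error.
threshold = max(reconstruction_error(x, mean, w) for x in train)


def is_novel(x):
    """Flag x as novel if it reconstructs worse than any training point."""
    return reconstruction_error(x, mean, w) > threshold


print(is_novel([0.5, 1.0]))   # a point on the training line
print(is_novel([1.0, -2.0]))  # a point far from the training line
```

In this toy setting a false negative would be a point unlike the training data that still reconstructs with low error. A threshold taken from the maximum training error is only one simple choice; a percentile of the training errors is a common alternative.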
