AutoBayes: Automated Inference via Bayesian Graph Exploration for Nuisance-Robust Biosignal Analysis

Jul 02, 2020

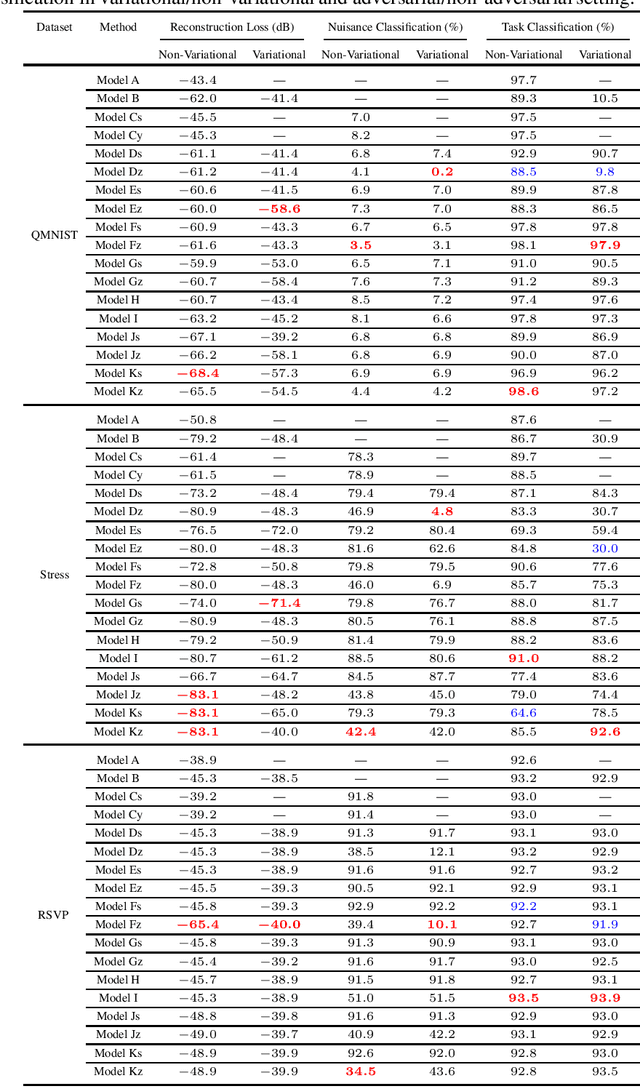

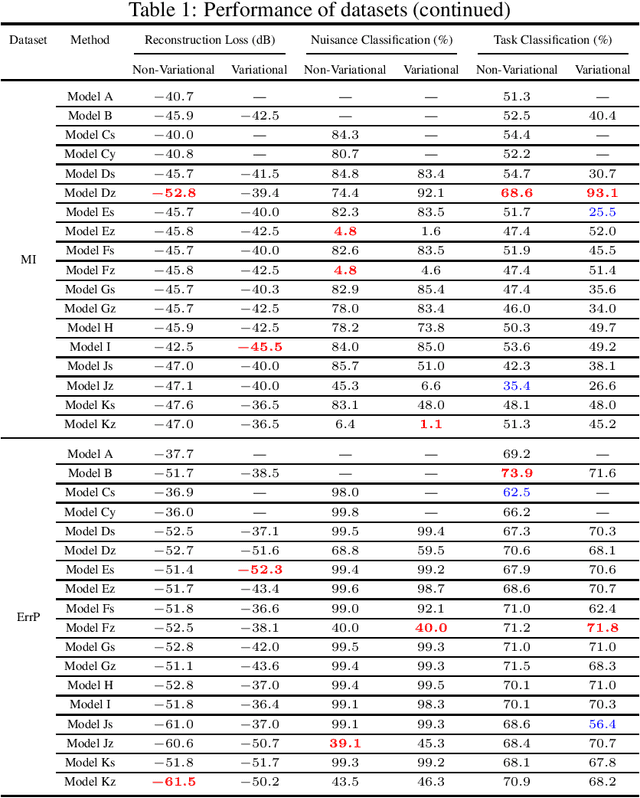

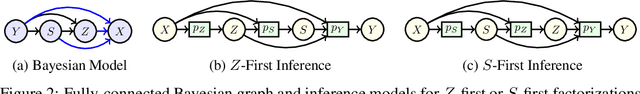

Learning data representations that capture task-related features but are invariant to nuisance variations remains a key challenge in machine learning, particularly for biosignal processing. We introduce an automated Bayesian inference framework, called AutoBayes, that explores different graphical models linking classifier, encoder, decoder, estimator, and adversary network blocks to optimize nuisance-invariant machine learning pipelines. AutoBayes also enables the justification of disentangled representations, which split the latent variable into multiple pieces so that each can relate differently to subject/session variation and task labels. We benchmark the framework on a series of physiological datasets, where subject and class labels are available during training, and analyze its capability for subject-transfer learning with and without variational modeling and adversarial training. The framework can also be used effectively for semi-supervised multi-class classification and for reconstruction tasks on datasets from other domains.
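To make the roles of the five blocks concrete, the following is a minimal sketch of one possible wiring, not the architecture or search procedure from the paper. It assumes a latent variable split into a task piece and a nuisance piece, single-layer linear blocks with random weights, and an illustrative adversarial weight. All names (`init_blocks`, `forward`, `adv_weight`) and sizes are hypothetical.

```python
import math
import random


def linear(weights, vec):
    """Multiply a weight matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in weights]


def cross_entropy(logits, label):
    """Softmax cross-entropy for one sample with an integer label."""
    peak = max(logits)
    log_norm = peak + math.log(sum(math.exp(l - peak) for l in logits))
    return log_norm - logits[label]


def random_matrix(rng, rows, cols, scale=0.1):
    """Small random weights; a stand-in for trained parameters."""
    return [[rng.gauss(0.0, scale) for _ in range(cols)] for _ in range(rows)]


def init_blocks(rng, n_in, n_task, n_nuis, n_classes, n_subjects):
    """Hypothetical linear versions of the five network blocks."""
    n_latent = n_task + n_nuis
    return {
        "encoder": random_matrix(rng, n_latent, n_in),         # x -> z
        "decoder": random_matrix(rng, n_in, n_latent),         # z -> x_hat
        "classifier": random_matrix(rng, n_classes, n_task),   # z_task -> y
        "estimator": random_matrix(rng, n_subjects, n_nuis),   # z_nuis -> s
        "adversary": random_matrix(rng, n_subjects, n_task),   # z_task -> s
    }


def forward(blocks, x, y, s, adv_weight=0.1):
    """One forward pass for input x, task label y, and subject label s."""
    z = [math.tanh(a) for a in linear(blocks["encoder"], x)]
    n_task = len(blocks["classifier"][0])
    z_task, z_nuis = z[:n_task], z[n_task:]  # the assumed latent split

    x_hat = linear(blocks["decoder"], z)
    losses = {
        "reconstruction": sum((a - b) ** 2 for a, b in zip(x, x_hat)) / len(x),
        "classifier": cross_entropy(linear(blocks["classifier"], z_task), y),
        "estimator": cross_entropy(linear(blocks["estimator"], z_nuis), s),
        "adversary": cross_entropy(linear(blocks["adversary"], z_task), s),
    }
    # The main pipeline is rewarded when the adversary fails to recover the
    # subject from z_task, so the adversary loss enters with a negative sign.
    # The adversary itself would be trained to minimize losses["adversary"].
    losses["main"] = (
        losses["reconstruction"]
        + losses["classifier"]
        + losses["estimator"]
        - adv_weight * losses["adversary"]
    )
    return z_task, z_nuis, losses


rng = random.Random(0)
blocks = init_blocks(rng, n_in=16, n_task=4, n_nuis=2, n_classes=3, n_subjects=5)
x = [rng.gauss(0.0, 1.0) for _ in range(16)]
z_task, z_nuis, losses = forward(blocks, x, y=1, s=2)
```

In this sketch, removing a block (for example, dropping the decoder or the adversary term) or changing which latent piece feeds which block yields a different pipeline. That is the kind of design choice the framework is described as exploring automatically, although the actual set of candidate graphical models is not specified here.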
