Attention based Occlusion Removal for Hybrid Telepresence Systems

Dec 02, 2021

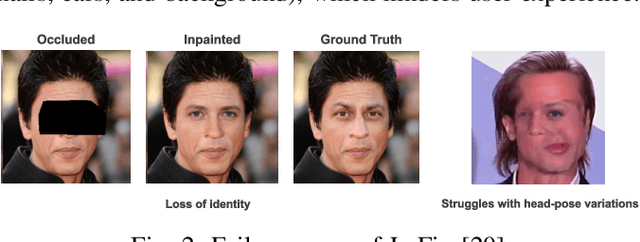

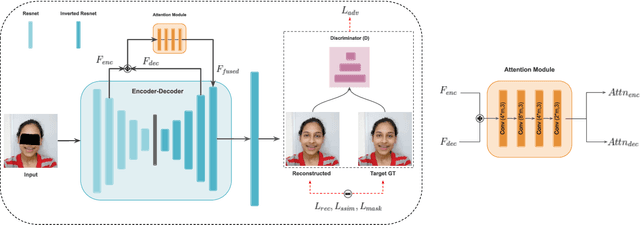

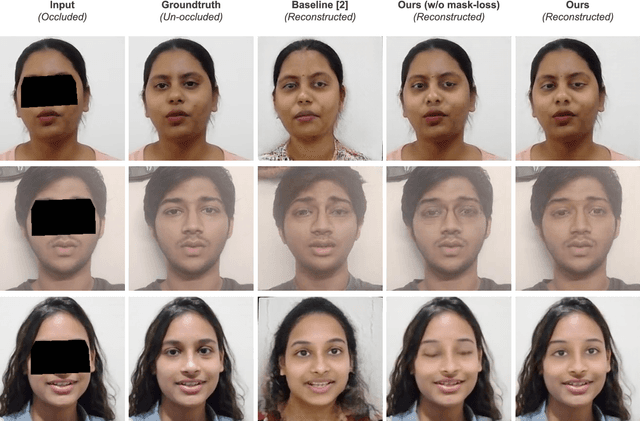

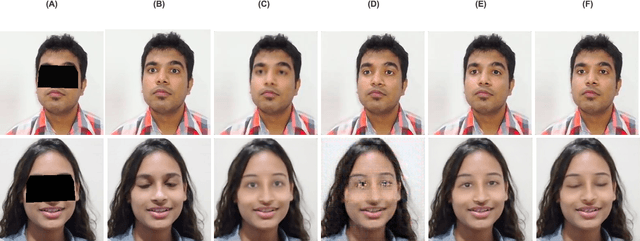

Video conferencing is a widely adopted solution for telecommunication, but it inherently lacks immersiveness because faces are represented in 2D. Integrating Virtual Reality (VR) into a communication/telepresence system through Head Mounted Displays (HMDs) promises users a much more immersive experience. However, HMDs occlude the user's face, hiding facial appearance and expressions. To overcome this issue, we propose a novel attention-enabled encoder-decoder architecture for HMD de-occlusion. We also propose training our person-specific model on short videos (1-2 minutes) of the user, captured in varying appearances, and demonstrate generalization to unseen poses and appearances of the user. We report superior qualitative and quantitative results over state-of-the-art methods. We also present applications of this approach to hybrid video teleconferencing using existing animation and 3D face reconstruction pipelines.
