Asynchronous Online Federated Learning for Edge Devices


Nov 05, 2019

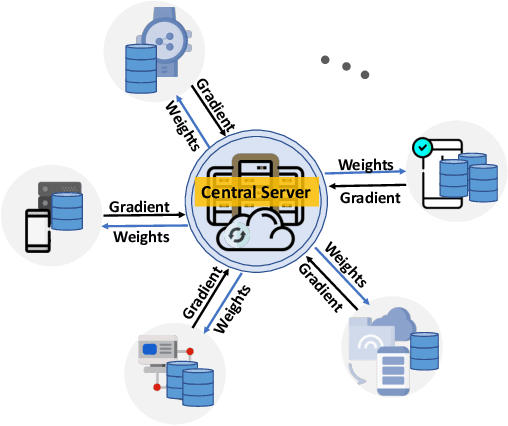

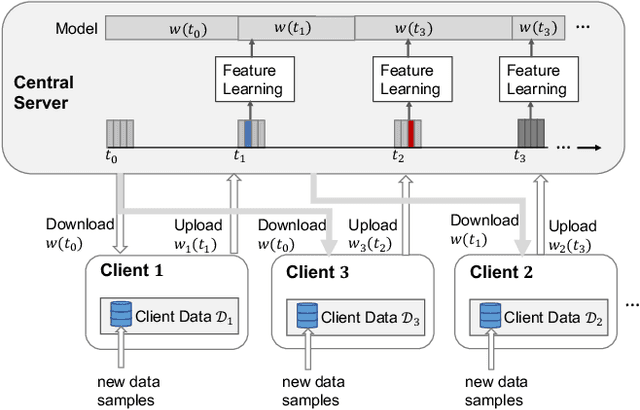

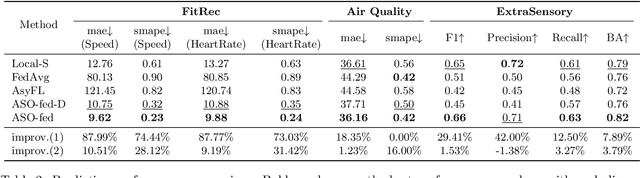

Federated learning (FL) is a machine learning paradigm in which a shared central model is learned across multiple distributed client devices while the training data remains on the edge devices or local clients. Most prior work on federated learning uses Federated Averaging (FedAvg) as the optimization method and trains in a synchronized fashion: each edge device trains independently, and the server aggregates their updates in synchronous steps. However, the assumptions made by FedAvg are unrealistic given the heterogeneity of devices. In particular, the volume and distribution of collected data vary during training because edge devices sample at different rates. The edge devices also differ in available communication bandwidth and system configuration, such as memory, processor speed, and power requirements. This leads to widely varying training times as well as model and data transfer times. Furthermore, availability issues at edge devices can leave specific devices contributing nothing to the federated model. In this paper, we present an Asynchronous Online Federated Learning (ASO-fed) framework, in which the edge devices perform online learning on continuously streaming local data and a central server aggregates model parameters from the local clients. Our framework updates the central model asynchronously to address both the varying computational loads of heterogeneous edge devices and edge devices that lag behind or drop out. Experiments on three real-world datasets show that ASO-fed lowers the overall training cost while maintaining good prediction performance.
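The abstract contrasts FedAvg's synchronous aggregation with an asynchronous central update, but it does not state ASO-fed's actual update rule. The following is a minimal sketch, assuming model parameters are plain lists of floats, that illustrates the difference. `fedavg_aggregate` follows the standard FedAvg idea of waiting for every client and averaging parameters weighted by local sample count. `AsyncServer` is a hypothetical asynchronous server that merges each client update as soon as it arrives, discounting stale updates with `base_mix / (1 + staleness)`. That discount, the `base_mix` parameter, and the version-counter scheme are illustrative assumptions, not the ASO-fed algorithm.

```python
from dataclasses import dataclass
from typing import List


def fedavg_aggregate(client_params: List[List[float]], client_sizes: List[int]) -> List[float]:
    """Synchronous FedAvg-style step: called only once every client has
    reported, it averages parameter vectors weighted by local sample count."""
    total = sum(client_sizes)
    dim = len(client_params[0])
    return [
        sum(p[i] * n for p, n in zip(client_params, client_sizes)) / total
        for i in range(dim)
    ]


@dataclass
class AsyncServer:
    """Hypothetical asynchronous server (NOT the ASO-fed update rule).
    Each client update is mixed into the global model on arrival, with a
    weight that shrinks as the update becomes staler."""
    params: List[float]
    base_mix: float = 0.5  # assumed mixing rate for a perfectly fresh update
    version: int = 0       # number of updates applied so far

    def apply(self, client_params: List[float], client_version: int) -> None:
        # Staleness = how many global updates happened since the client
        # downloaded the model it trained on.
        staleness = max(0, self.version - client_version)
        alpha = self.base_mix / (1 + staleness)  # illustrative staleness discount
        self.params = [
            (1 - alpha) * g + alpha * c for g, c in zip(self.params, client_params)
        ]
        self.version += 1


if __name__ == "__main__":
    # Synchronous: two clients holding 1 and 3 samples.
    print(fedavg_aggregate([[1.0, 2.0], [3.0, 4.0]], [1, 3]))  # [2.5, 3.5]

    # Asynchronous: both clients started from version 0; the second
    # update arrives after the first and is therefore one step stale.
    server = AsyncServer(params=[0.0, 0.0])
    server.apply([1.0, 1.0], client_version=0)
    server.apply([2.0, 2.0], client_version=0)
    print(server.params)  # [0.875, 0.875]
```

The synchronous function cannot run until the slowest client reports, which is the straggler and dropout problem the abstract describes. The asynchronous server never waits, at the cost of mixing in parameters trained on an outdated global model, which is why some staleness-aware weighting is needed.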
