Argumentative Reward Learning: Reasoning About Human Preferences

Sep 28, 2022

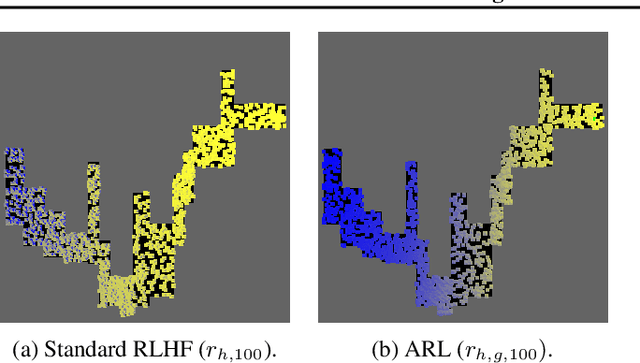

We define a novel neuro-symbolic framework, argumentative reward learning, which combines preference-based argumentation with existing approaches to reinforcement learning from human feedback. Our method improves on prior work by generalising human preferences, reducing the burden on the user and increasing the robustness of the reward model. We demonstrate this with a number of experiments.

* 4 pages, ICML HMCaT workshop
