Are Eliminated Spans Useless for Coreference Resolution? Not at all

Paper and Code

Jan 04, 2021

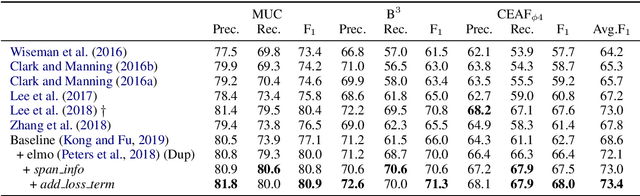

Various neural-based methods have been proposed so far for joint mention detection and coreference resolution. However, existing works on coreference resolution are mainly dependent on filtered mention representation, while other spans are largely neglected. In this paper, we aim at increasing the utilization rate of data and investigating whether those eliminated spans are totally useless, or to what extent they can improve the performance of coreference resolution. To achieve this, we propose a mention representation refining strategy where spans highly related to mentions are well leveraged using a pointer network for representation enhancing. Notably, we utilize an additional loss term in this work to encourage the diversity between entity clusters. Experimental results on the document-level CoNLL-2012 Shared Task English dataset show that eliminated spans are indeed much effective and our approach can achieve competitive results when compared with previous state-of-the-art in coreference resolution.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge