Approximation of Smoothness Classes by Deep ReLU Networks


Jul 30, 2020

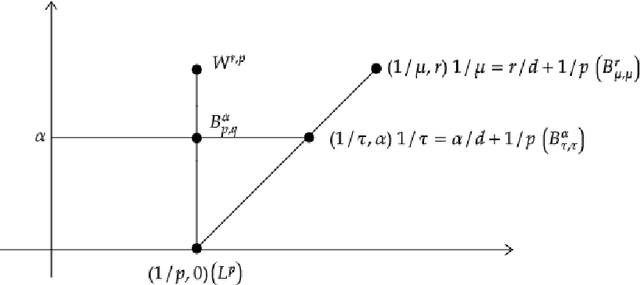

We consider approximation rates of sparsely connected deep rectified linear unit (ReLU) and rectified power unit (RePU) neural networks for functions in Besov spaces $B^\alpha_{q}(L^p)$ in arbitrary dimension $d$, on bounded or unbounded domains. We show that RePU networks with a fixed activation function attain optimal approximation rates for functions in the Besov space $B^\alpha_{\tau}(L^\tau)$ on the critical embedding line $1/\tau=\alpha/d+1/p$, for arbitrary smoothness order $\alpha>0$. Moreover, we show that ReLU networks attain near-optimal rates for any Besov space strictly above the critical line. By interpolation theory, this implies that deep ReLU/RePU networks approximate the entire range of smoothness classes at or above the critical line at (near-)optimal rates.
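To make the notation concrete, the following is a minimal Python sketch of the two activation functions and of the critical-line relation $1/\tau=\alpha/d+1/p$. The function names (`repu`, `critical_tau`, `position`) are hypothetical and not taken from the paper. The sketch also assumes the usual DeVore-diagram convention, in which a space with integrability index $\tau$ lies "above" the critical line when $1/\tau < \alpha/d + 1/p$; the abstract itself does not spell this convention out.

```python
from fractions import Fraction


def repu(x, r=1):
    """Rectified power unit max(0, x)**r; r = 1 is the ReLU."""
    return max(0.0, x) ** r


def _inverse(p):
    # 1/p as an exact fraction, with 1/inf read as 0 (the L^infinity case).
    return Fraction(0) if p == float("inf") else 1 / Fraction(p)


def critical_tau(alpha, d, p):
    """Index tau on the critical line 1/tau = alpha/d + 1/p (alpha > 0)."""
    return 1 / (Fraction(alpha) / d + _inverse(p))


def position(alpha, tau, d, p):
    """Locate smoothness alpha, integrability tau relative to the line.

    Assumed convention: 'above' means 1/tau < alpha/d + 1/p.
    """
    lhs = 1 / Fraction(tau)
    rhs = Fraction(alpha) / d + _inverse(p)
    if lhs < rhs:
        return "above"
    return "on" if lhs == rhs else "below"


if __name__ == "__main__":
    # Smoothness 2 in dimension 2, error measured in L^2.
    tau = critical_tau(alpha=2, d=2, p=2)
    print(tau, position(2, tau, 2, 2), position(2, 1, 2, 2))
```

For example, with $\alpha=2$, $d=2$, and $p=2$, the critical index is $\tau=2/3$, while $\tau=1$ gives a space strictly above the line under the assumed convention.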
