Application of the NIST AI Risk Management Framework to Surveillance Technology

Mar 22, 2024

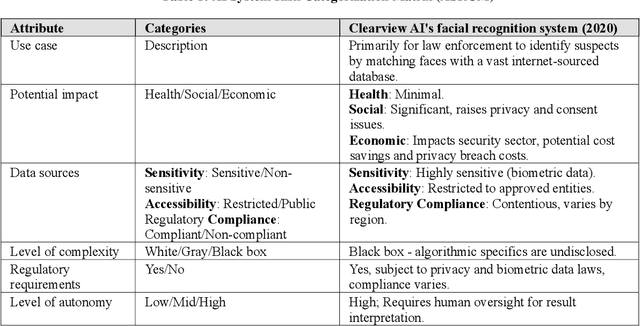

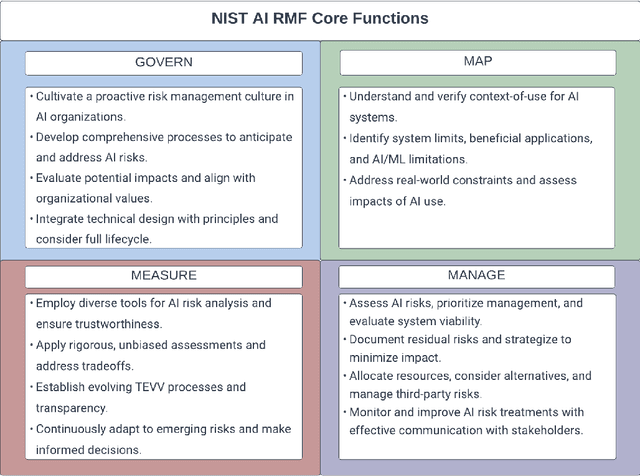

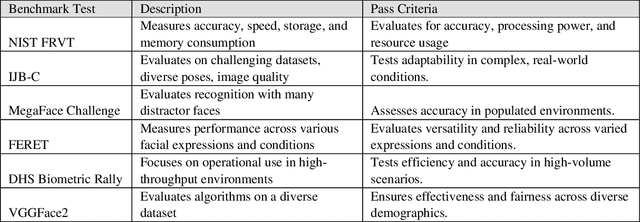

This study analyzes the application and implications of the National Institute of Standards and Technology's AI Risk Management Framework (NIST AI RMF) for surveillance technologies, particularly facial recognition technology. Because facial recognition systems are inherently high-risk and consequential, our research emphasizes the critical need for a structured approach to risk management in this sector. The paper presents a detailed case study demonstrating the utility of the NIST AI RMF in identifying and mitigating risks that might otherwise go unnoticed in these technologies. Our primary objective is to develop a comprehensive risk management strategy that advances responsible AI use in feasible, scalable ways. We propose a six-step process, tailored to the specific challenges of surveillance technology, that aims to make risk management practice more systematic and effective. This process emphasizes continual assessment and improvement to help companies manage AI-related risks more robustly and deploy AI systems ethically and responsibly. Our analysis also identifies and discusses critical gaps in the current NIST AI RMF, particularly in its application to surveillance technologies. These insights contribute to the evolving discourse on AI governance and risk management and highlight areas for future refinement of frameworks like the NIST AI RMF.