An Objective for Hierarchical Clustering in Euclidean Space and its Connection to Bisecting K-means

Paper and Code

Aug 30, 2020

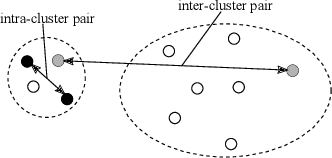

This paper explores hierarchical clustering in the case where pairs of points have dissimilarity scores (e.g. distances) as a part of the input. The recently introduced objective for points with dissimilarity scores results in every tree being a 1/2 approximation if the distances form a metric. This shows the objective does not make a significant distinction between a good and poor hierarchical clustering in metric spaces. Motivated by this, the paper develops a new global objective for hierarchical clustering in Euclidean space. The objective captures the criterion that has motivated the use of divisive clustering algorithms: that when a split happens, points in the same cluster should be more similar than points in different clusters. Moreover, this objective gives reasonable results on ground-truth inputs for hierarchical clustering. The paper builds a theoretical connection between this objective and the bisecting k-means algorithm. This paper proves that the optimal 2-means solution results in a constant approximation for the objective. This is the first paper to show the bisecting k-means algorithm optimizes a natural global objective over the entire tree.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge