Amortized Variational Deep Q Network

Paper and Code

Nov 03, 2020

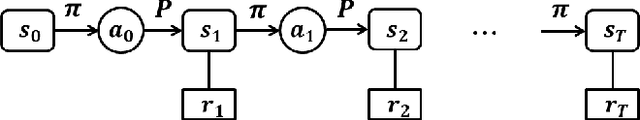

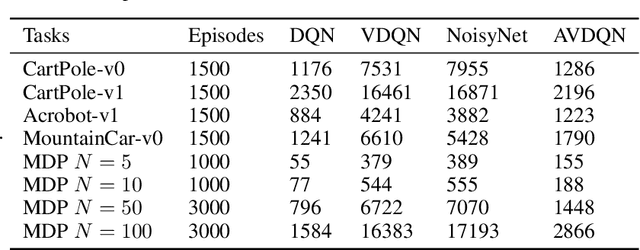

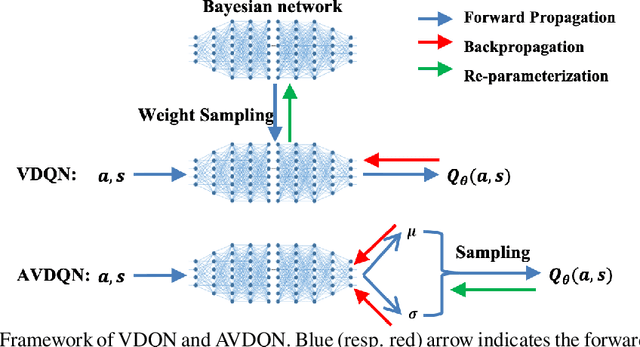

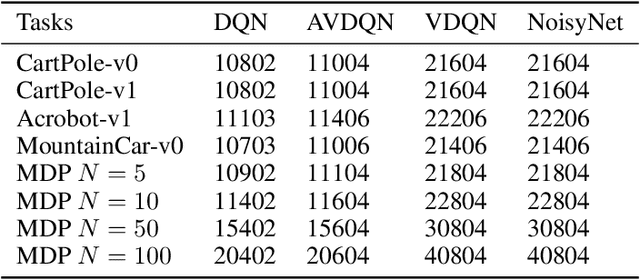

Efficient exploration is one of the most important issues in deep reinforcement learning. To address it, recent methods treat the value function parameters as random variables and resort to variational inference to approximate their posterior. In this paper, we propose an amortized variational inference framework to approximate the posterior distribution of the action value function in a Deep Q Network. We establish the equivalence between the loss of the new model and the amortized variational inference loss. We balance exploration and exploitation through a two-stage training process, assuming a Cauchy posterior for exploration and a Gaussian posterior for exploitation. We show that the amortized framework can result in significantly fewer learnable parameters than the existing state-of-the-art method. Experimental results on classical control tasks in OpenAI Gym and on chain Markov Decision Process tasks show that the proposed method performs significantly better than state-of-the-art methods and requires much less training time.
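To make the two-stage idea concrete, here is a minimal sketch of how actions might be drawn from a per-action posterior over Q-values. It is not the paper's implementation: the abstract does not describe the network outputs or the action-selection rule, so the sketch assumes the network emits a location and a scale for each action and that the agent acts greedily on one sampled Q-vector (Thompson-style). The names `sample_q_values`, `select_action`, `loc`, `scale`, and `stage` are hypothetical.

```python
import numpy as np


def sample_q_values(loc, scale, stage, rng):
    """Draw one sample of per-action Q-values from the assumed posterior.

    loc, scale: arrays of shape (n_actions,), assumed to be network outputs.
    stage: "explore" uses heavy-tailed Cauchy noise, "exploit" uses Gaussian noise.
    """
    loc = np.asarray(loc, dtype=float)
    scale = np.asarray(scale, dtype=float)
    if stage == "explore":
        # Heavy tails occasionally produce large deviations, so actions with
        # a lower location still get selected from time to time.
        noise = rng.standard_cauchy(size=loc.shape)
    elif stage == "exploit":
        # Light tails keep samples close to the location.
        noise = rng.standard_normal(size=loc.shape)
    else:
        raise ValueError(f"unknown stage: {stage!r}")
    # Location-scale (reparameterized) sample: q = loc + scale * noise.
    return loc + scale * noise


def select_action(loc, scale, stage, rng):
    """Act greedily with respect to one posterior sample (an assumed rule)."""
    return int(np.argmax(sample_q_values(loc, scale, stage, rng)))


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    loc = [0.1, 0.9, 0.3]      # illustrative values, not from the paper
    scale = [0.5, 0.5, 0.5]
    for stage in ("explore", "exploit"):
        actions = [select_action(loc, scale, stage, rng) for _ in range(1000)]
        print(stage, np.bincount(actions, minlength=3) / 1000)
```

Under these assumptions, the Cauchy stage spreads its choices over more actions than the Gaussian stage for the same `loc` and `scale`, which is one way to read the exploration and exploitation roles the abstract assigns to the two distributions.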
