Amended Cross Entropy Cost: Framework For Explicit Diversity Encouragement


Jul 16, 2020

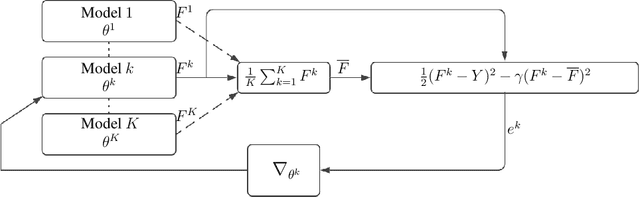

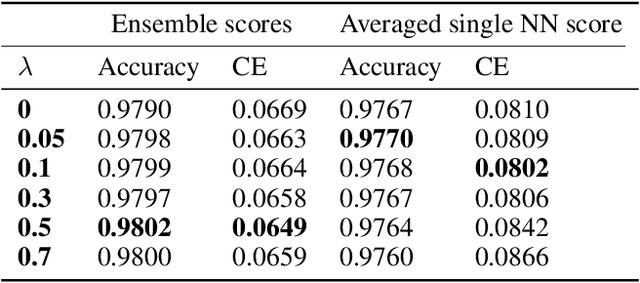

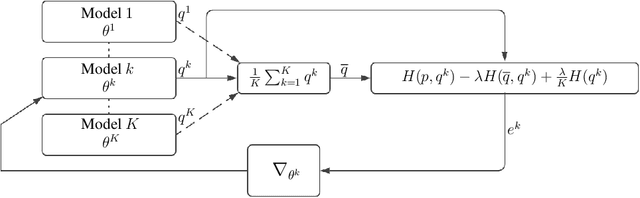

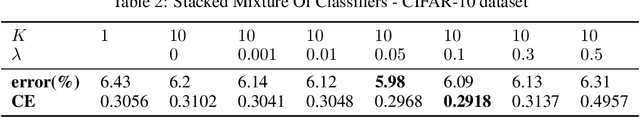

Cross Entropy (CE) plays an important role in machine learning and, in particular, in neural networks, where it is commonly used as the cost between the known label distribution and the Softmax/Sigmoid output. In this paper we present a new cost function called the Amended Cross Entropy (ACE). Its novelty lies in enabling the training of multiple classifiers while explicitly controlling the diversity between them. We derived the new cost through mathematical analysis and "reverse engineering" of the way we want the gradients to behave, producing a tailor-made, elegant and intuitive cost function that achieves the desired result. This process mirrors the way CE is chosen as the cost for Softmax/Sigmoid classifiers in order to obtain linear derivatives. By choosing the optimal diversity factor, we produce an ensemble that yields better results than the vanilla ensemble. We demonstrate two potential uses of this result and present empirical results. Our method works for classification problems analogously to Negative Correlation Learning (NCL) for regression problems.
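The "linear derivatives" that motivate pairing CE with Softmax can be checked directly: for logits z, target distribution y and p = softmax(z), the gradient of CE(y, p) with respect to z is simply p - y. The minimal sketch below (plain Python; the function names are illustrative, not from the paper) compares that closed form against a central finite-difference estimate.

```python
import math

def softmax(z):
    """Numerically stable softmax of a list of logits."""
    m = max(z)
    exps = [math.exp(v - m) for v in z]
    s = sum(exps)
    return [e / s for e in exps]

def cross_entropy(y, p):
    """CE between a target distribution y and a predicted distribution p."""
    # Terms with y_i == 0 contribute nothing, so they are skipped.
    return -sum(yi * math.log(pi) for yi, pi in zip(y, p) if yi > 0)

def analytic_grad(z, y):
    """Closed-form gradient of CE(y, softmax(z)) w.r.t. z (assumes sum(y) == 1)."""
    p = softmax(z)
    return [pi - yi for pi, yi in zip(p, y)]

def numeric_grad(z, y, eps=1e-6):
    """Central finite-difference gradient, used only to check the closed form."""
    g = []
    for i in range(len(z)):
        zp = list(z)
        zp[i] += eps
        zm = list(z)
        zm[i] -= eps
        g.append((cross_entropy(y, softmax(zp)) - cross_entropy(y, softmax(zm))) / (2 * eps))
    return g

z = [2.0, -1.0, 0.5]
y = [0.0, 1.0, 0.0]
print(analytic_grad(z, y))
print(numeric_grad(z, y))
```

The two printed gradients agree to within finite-difference error. The same simplification holds for a Sigmoid output with binary CE, where the gradient with respect to the logit is sigmoid(z) - y.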
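For context on the regression analogue mentioned above, the standard NCL formulation trains each ensemble member i on its own squared error plus a correlation penalty p_i = (f_i - f̄) · Σ_{j≠i} (f_j - f̄), where f̄ is the ensemble mean. Because the deviations from the mean sum to zero, this penalty equals -(f_i - f̄)², so a positive λ rewards members for spreading around the ensemble mean. A minimal sketch for a single input, with a hypothetical helper name:

```python
def ncl_losses(preds, target, lam):
    """Per-member Negative Correlation Learning losses for one regression input.

    preds:  outputs f_i of the M ensemble members
    target: regression target y
    lam:    penalty strength (lambda); lam = 0 gives independent training
    Member i minimises 0.5 * (f_i - y)^2 + lam * p_i, with
    p_i = (f_i - f_bar) * sum_{j != i} (f_j - f_bar)  (which equals -(f_i - f_bar)^2).
    """
    m = len(preds)
    f_bar = sum(preds) / m
    losses = []
    for i, f_i in enumerate(preds):
        # Sum of the other members' deviations from the ensemble mean.
        others = sum(f_j - f_bar for j, f_j in enumerate(preds) if j != i)
        penalty = (f_i - f_bar) * others
        losses.append(0.5 * (f_i - target) ** 2 + lam * penalty)
    return losses

print(ncl_losses([1.0, 2.0, 3.0], 2.0, 0.0))
print(ncl_losses([1.0, 2.0, 3.0], 2.0, 0.5))
```

With λ = 0.5 in this example, the penalty exactly cancels the error of the two outer members, showing how NCL tolerates individual error in exchange for diversity.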
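The abstract does not state the ACE formula, so the sketch below is only a hypothetical illustration of the general idea of a diversity-controlled CE for an ensemble of classifiers; it is not claimed to be the paper's cost. It assumes member k is trained on its usual CE against the label, minus λ times the average cross entropy H(q_j, q_k) between its output and each other member's output. Minimising this pushes q_k away from its peers, and λ plays the role of the diversity factor. The function name `diversity_ce` and the parameter `lam` are illustrative.

```python
import math

def cross_entropy(p, q):
    """H(p, q) = -sum_c p_c * log(q_c) for discrete distributions p and q."""
    return -sum(pc * math.log(qc) for pc, qc in zip(p, q) if pc > 0)

def diversity_ce(y, probs, k, lam):
    """HYPOTHETICAL diversity-encouraging CE for ensemble member k (illustration only).

    y:     one-hot or soft label distribution
    probs: list of the M members' predicted distributions q_1..q_M
    k:     index of the member whose loss is computed
    lam:   diversity factor; lam = 0 recovers plain cross entropy
    Loss_k = H(y, q_k) - lam / (M - 1) * sum_{j != k} H(q_j, q_k)
    """
    m = len(probs)
    q_k = probs[k]
    fit = cross_entropy(y, q_k)
    if m < 2 or lam == 0:
        return fit
    # Average cross entropy between the other members' outputs and member k.
    spread = sum(cross_entropy(probs[j], q_k) for j in range(m) if j != k) / (m - 1)
    return fit - lam * spread

y = [1.0, 0.0]
probs = [[0.7, 0.3], [0.6, 0.4]]
print(diversity_ce(y, probs, 0, 0.0))
print(diversity_ce(y, probs, 0, 0.5))
```

One caveat of this particular hypothetical form: the subtracted term grows without bound as q_k drives probability to zero where a peer is confident, so λ would need to stay small relative to the fit term.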
