AI for human assessment: What do professional assessors need?

Paper and Code

Apr 18, 2022

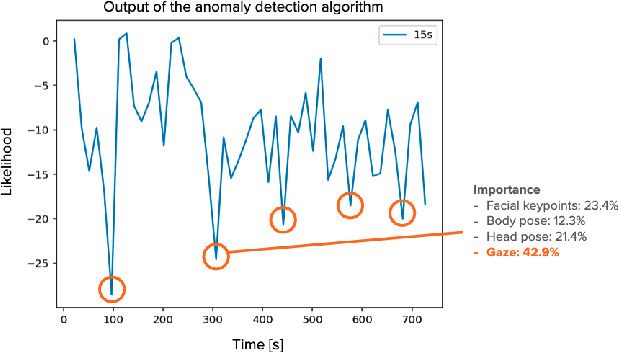

We present our case study that aims to help professional assessors make decisions in human assessment, in which they conduct interviews with assessees and evaluate their suitability for certain job roles. Our workshop with two industrial assessors revealed that a computational system that can extract nonverbal cues of assesses from interview videos would be beneficial to assessors in terms of supporting their decision making. In response, we developed such a system based on an unsupervised anomaly detection algorithm using multimodal behavioral features such as facial keypoints, pose, head pose, and gaze. Moreover, we enabled the system to output how much each feature contributed to the outlierness of the detected cues with the purpose of enhancing its interpretability. We then conducted a preliminary study to examine the validity of the system's output by using 20 actual assessment interview videos and involving the two assessors. The results suggested the advantages of using unsupervised anomaly detection in an interpretable manner by illustrating the informativeness of its outputs for assessors. Our approach, which builds on top of the idea of separation of observation and interpretation in human-AI teaming, will facilitate human decision making in highly contextual domains, such as human assessment, while keeping their trust in the system.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge