Adversarial-Prediction Guided Multi-task Adaptation for Semantic Segmentation of Electron Microscopy Images

Paper and Code

Apr 05, 2020

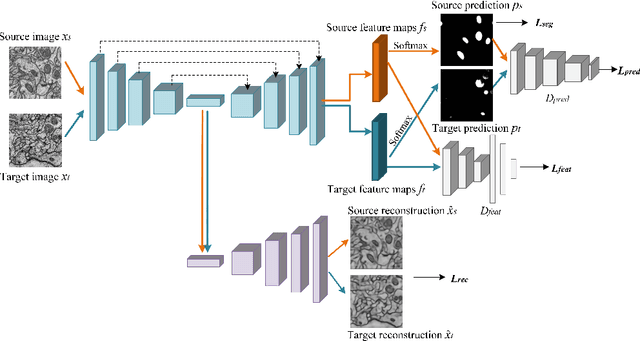

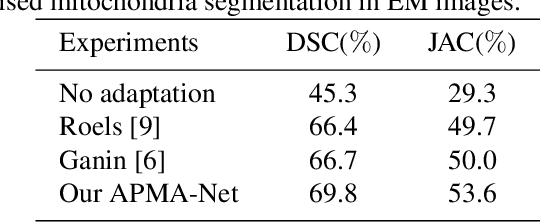

Semantic segmentation is an essential step for electron microscopy (EM) image analysis. Although supervised models have achieved significant progress, the need for labor intensive pixel-wise annotation is a major limitation. To complicate matters further, supervised learning models may not generalize well on a novel dataset due to domain shift. In this study, we introduce an adversarial-prediction guided multi-task network to learn the adaptation of a well-trained model for use on a novel unlabeled target domain. Since no label is available on target domain, we learn an encoding representation not only for the supervised segmentation on source domain but also for unsupervised reconstruction of the target data. To improve the discriminative ability with geometrical cues, we further guide the representation learning by multi-level adversarial learning in semantic prediction space. Comparisons and ablation study on public benchmark demonstrated state-of-the-art performance and effectiveness of our approach.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge