Adversarial Image Registration with Application for MR and TRUS Image Fusion


Oct 01, 2018

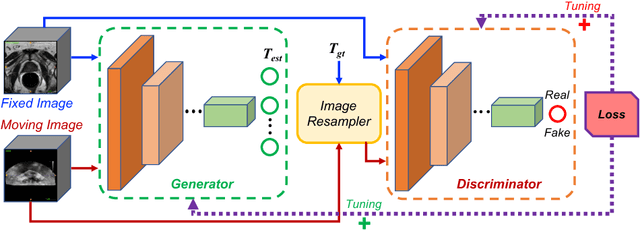

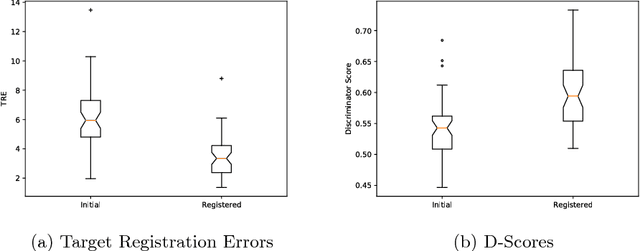

Robust and accurate alignment of multimodal medical images is challenging yet valuable for many clinical applications. For example, magnetic resonance (MR) and transrectal ultrasound (TRUS) image registration is a critical component of MR-TRUS fusion guided prostate interventions. However, because the two modalities differ greatly in image appearance and image correspondence varies widely, MR-TRUS registration remains a difficult problem. In this paper, an adversarial image registration (AIR) framework is proposed. By training two deep neural networks simultaneously, one a generator and the other a discriminator, we obtain not only a network for image registration but also a metric network that can help evaluate the quality of a registration. The resulting AIR-net is then evaluated on clinical datasets acquired during image-fusion guided prostate biopsy procedures, and promising results are demonstrated.
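To make the generator/discriminator pairing concrete, the following is a minimal sketch of the two competing objectives, assuming the standard binary cross-entropy GAN formulation; the abstract does not state which adversarial loss AIR-net actually uses, so this may differ from the authors' choice. The function names, the scalar `misalignment` input, and the weights of `toy_discriminator` are all hypothetical stand-ins for real networks operating on image pairs.

```python
import math

EPS = 1e-12  # guards against log(0)


def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))


def toy_discriminator(misalignment, w=-1.0, b=2.0):
    """Hypothetical stand-in for the metric network.

    A real discriminator would score an aligned image pair; here the pair is
    summarised by a single non-negative misalignment value, and the score in
    (0, 1) decreases as misalignment grows.
    """
    return sigmoid(w * abs(misalignment) + b)


def discriminator_loss(scores_real, scores_fake):
    """Cross-entropy with reference alignments labelled 1 and
    generator-produced alignments labelled 0 (assumed objective)."""
    real_term = sum(-math.log(s + EPS) for s in scores_real) / len(scores_real)
    fake_term = sum(-math.log(1.0 - s + EPS) for s in scores_fake) / len(scores_fake)
    return real_term + fake_term


def generator_loss(scores_fake):
    """The generator is rewarded when the discriminator scores its
    alignments as if they were reference alignments."""
    return sum(-math.log(s + EPS) for s in scores_fake) / len(scores_fake)


# Reference alignments have small residual misalignment; an untrained
# generator produces large ones (illustrative values only).
reference_scores = [toy_discriminator(m) for m in (0.1, 0.3, 0.2)]
generated_scores = [toy_discriminator(m) for m in (4.0, 5.5, 3.0)]

d_loss = discriminator_loss(reference_scores, generated_scores)
g_loss = generator_loss(generated_scores)
```

In training, the two losses would be minimised in alternation, each with respect to its own network's parameters. Once training ends, the discriminator's score can be read as a learned quality measure for a candidate registration, which is the "metric network" role described above.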
