Adversarial Attacks on Brain-Inspired Hyperdimensional Computing-Based Classifiers

Paper and Code

Jun 10, 2020

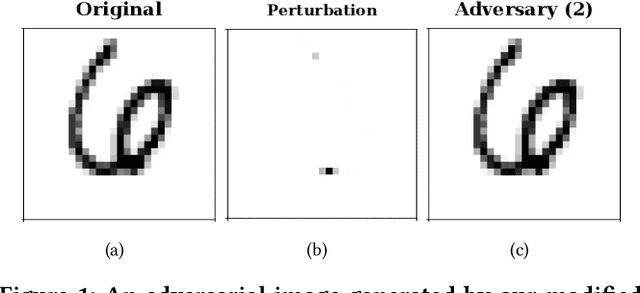

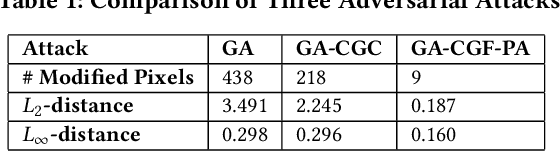

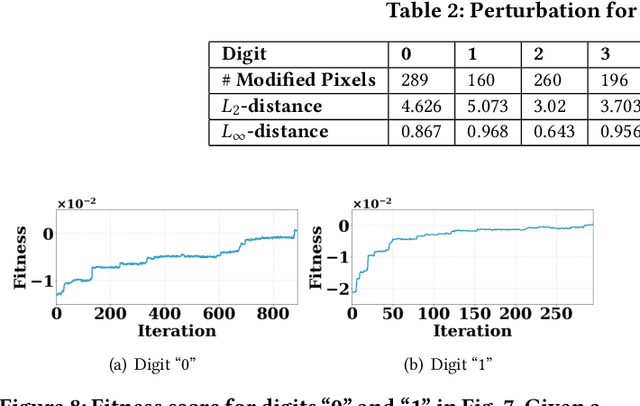

Being an emerging class of in-memory computing architecture, brain-inspired hyperdimensional computing (HDC) mimics brain cognition and leverages random hypervectors (i.e., vectors with a dimensionality of thousands or even more) to represent features and to perform classification tasks. The unique hypervector representation enables HDC classifiers to exhibit high energy efficiency, low inference latency and strong robustness against hardware-induced bit errors. Consequently, they have been increasingly recognized as an appealing alternative to or even replacement of traditional deep neural networks (DNNs) for local on device classification, especially on low-power Internet of Things devices. Nonetheless, unlike their DNN counterparts, state-of-the-art designs for HDC classifiers are mostly security-oblivious, casting doubt on their safety and immunity to adversarial inputs. In this paper, we study for the first time adversarial attacks on HDC classifiers and highlight that HDC classifiers can be vulnerable to even minimally-perturbed adversarial samples. Concretely, using handwritten digit classification as an example, we construct a HDC classifier and formulate a grey-box attack problem, where an attacker's goal is to mislead the target HDC classifier to produce erroneous prediction labels while keeping the amount of added perturbation noise as little as possible. Then, we propose a modified genetic algorithm to generate adversarial samples within a reasonably small number of queries. Our results show that adversarial images generated by our algorithm can successfully mislead the HDC classifier to produce wrong prediction labels with a high probability (i.e., 78% when the HDC classifier uses a fixed majority rule for decision). Finally, we also present two defense strategies -- adversarial training and retraining-- to strengthen the security of HDC classifiers.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge