Adjoint Rigid Transform Network: Self-supervised Alignment of 3D Shapes

Feb 01, 2021

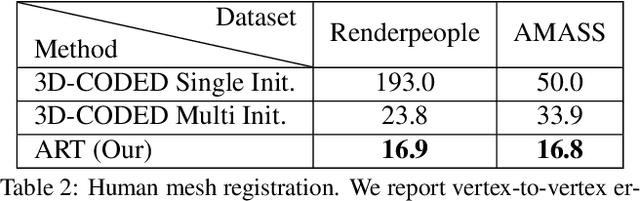

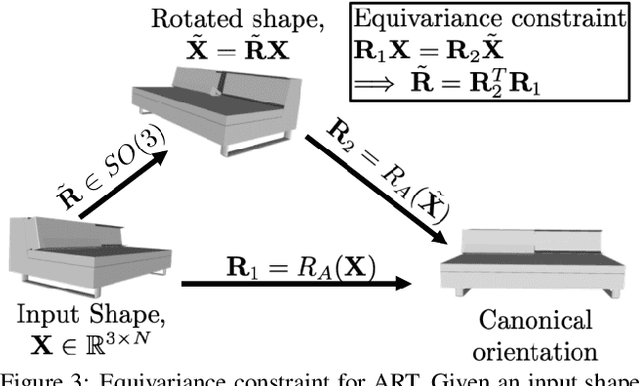

Most learning methods for 3D data (point clouds, meshes) suffer significant performance drops when the data is not carefully aligned to a canonical orientation. Aligning real-world 3D data collected from different sources is non-trivial and requires manual intervention. In this paper, we propose the Adjoint Rigid Transform (ART) Network, a neural module that can be integrated with existing 3D networks to significantly boost their performance in tasks such as shape reconstruction, non-rigid registration, and latent disentanglement. ART learns to rotate input shapes to a canonical orientation, which is crucial for many tasks. It achieves this by imposing a rotation equivariance constraint on input shapes. The remarkable result is that, with only self-supervision, ART can discover a unique canonical orientation for both rigid and non-rigid objects, which leads to a notable boost in downstream task performance. We will release our code and pre-trained models for further research.
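To make the rotation constraint concrete, here is a minimal NumPy sketch of one way such a self-supervised objective could be written. It is an illustration under stated assumptions, not the authors' implementation: the abstract does not give the exact loss. The sketch assumes the module maps a point cloud to a 3x3 rotation, and that two randomly rotated copies of the same shape should land on the same canonical pose. All function names are hypothetical, and `frame_from_first_two_points` is a hand-built stand-in for a trained network, used only to show what a predictor that satisfies the constraint looks like.

```python
import numpy as np

def random_rotation(rng):
    """Sample a random proper rotation (det = +1) via QR decomposition."""
    q, r = np.linalg.qr(rng.normal(size=(3, 3)))
    q = q * np.sign(np.diag(r))      # remove the sign ambiguity of QR
    if np.linalg.det(q) < 0:         # flip one axis to avoid reflections
        q[:, 0] = -q[:, 0]
    return q

def canonicalize(points, predict_rotation):
    """Apply the predicted rotation to every point (points are rows)."""
    rot = predict_rotation(points)
    return points @ rot.T

def consistency_loss(points, predict_rotation, rng):
    """Assumed self-supervised loss: two random rotations of the same
    shape should be mapped to the same canonical pose."""
    r1, r2 = random_rotation(rng), random_rotation(rng)
    c1 = canonicalize(points @ r1.T, predict_rotation)
    c2 = canonicalize(points @ r2.T, predict_rotation)
    return float(np.mean(np.sum((c1 - c2) ** 2, axis=1)))

def frame_from_first_two_points(points):
    """Hypothetical stand-in for a learned predictor: a Gram-Schmidt frame
    built from the first two points, which rotates with the input."""
    e1 = points[0] / np.linalg.norm(points[0])
    v = points[1] - np.dot(points[1], e1) * e1
    e2 = v / np.linalg.norm(v)
    e3 = np.cross(e1, e2)
    return np.stack([e1, e2, e3])

def identity_predictor(points):
    """Baseline that ignores the input and never rotates anything."""
    return np.eye(3)

rng = np.random.default_rng(0)
cloud = rng.normal(size=(64, 3))
loss_equivariant = consistency_loss(cloud, frame_from_first_two_points, rng)
loss_identity = consistency_loss(cloud, identity_predictor, rng)
```

In this sketch the frame-based predictor drives the loss to numerical zero because its output rotates together with the input, while the identity baseline does not. A trained module would replace the hand-built frame with a network optimized against a loss of this kind.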
