Active Distribution Learning from Indirect Samples

Paper and Code

Aug 16, 2018

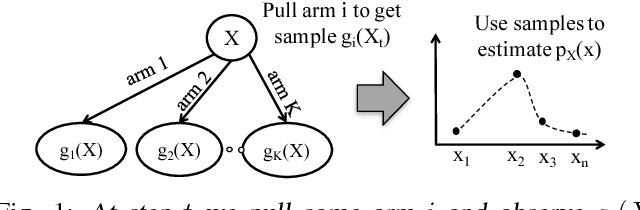

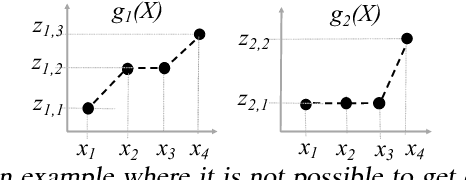

This paper studies the problem of {\em learning} the probability distribution $P_X$ of a discrete random variable $X$ using indirect and sequential samples. At each time step, we choose one of the possible $K$ functions, $g_1, \ldots, g_K$ and observe the corresponding sample $g_i(X)$. The goal is to estimate the probability distribution of $X$ by using a minimum number of such sequential samples. This problem has several real-world applications including inference under non-precise information and privacy-preserving statistical estimation. We establish necessary and sufficient conditions on the functions $g_1, \ldots, g_K$ under which asymptotically consistent estimation is possible. We also derive lower bounds on the estimation error as a function of total samples and show that it is order-wise achievable. Leveraging these results, we propose an iterative algorithm that i) chooses the function to observe at each step based on past observations; and ii) combines the obtained samples to estimate $p_X$. The performance of this algorithm is investigated numerically under various scenarios, and shown to outperform baseline approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge