ActionSpotter: Deep Reinforcement Learning Framework for Temporal Action Spotting in Videos

Paper and Code

Apr 15, 2020

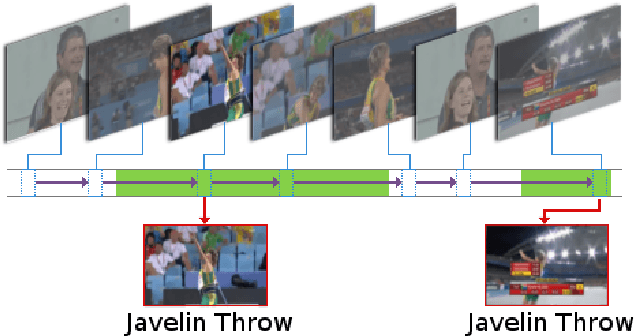

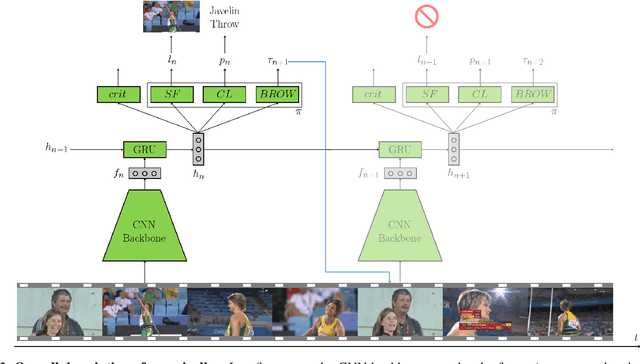

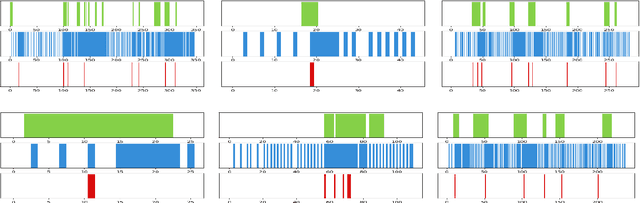

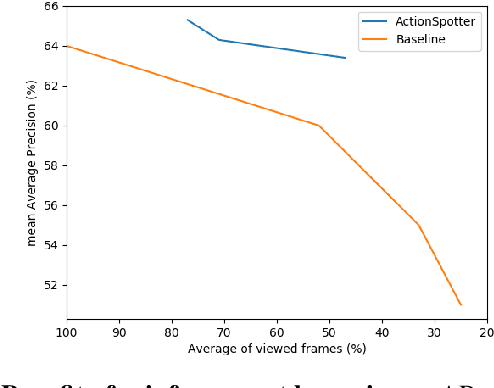

Summarizing video content is an important task in many applications. This task can be defined as the computation of the ordered list of actions present in a video. Such a list could be extracted using action detection algorithms. However, it is not necessary to determine the temporal boundaries of actions to know their existence. Moreover, localizing precise boundaries usually requires dense video analysis to be effective. In this work, we propose to directly compute this ordered list by sparsely browsing the video and selecting one frame per action instance, task known as action spotting in literature. To do this, we propose ActionSpotter, a spotting algorithm that takes advantage of Deep Reinforcement Learning to efficiently spot actions while adapting its video browsing speed, without additional supervision. Experiments performed on datasets THUMOS14 and ActivityNet show that our framework outperforms state of the art detection methods. In particular, the spotting mean Average Precision on THUMOS14 is significantly improved from 59.7% to 65.6% while skipping 23% of video.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge