Action-GPT: Leveraging Large-scale Language Models for Improved and Generalized Zero Shot Action Generation

Paper and Code

Nov 30, 2022

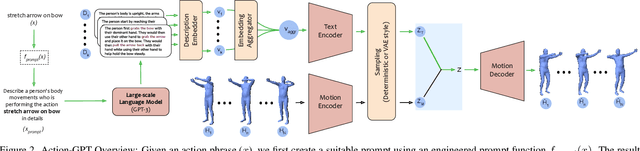

We introduce Action-GPT, a plug and play framework for incorporating Large Language Models (LLMs) into text-based action generation models. Action phrases in current motion capture datasets contain minimal and to-the-point information. By carefully crafting prompts for LLMs, we generate richer and fine-grained descriptions of the action. We show that utilizing these detailed descriptions instead of the original action phrases leads to better alignment of text and motion spaces. Our experiments show qualitative and quantitative improvement in the quality of synthesized motions produced by recent text-to-motion models. Code, pretrained models and sample videos will be made available at https://actiongpt.github.io

* WIP. Code, pretrained models and sample videos will be made available

at \url{https://actiongpt.github.io}

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge