Action Classification and Highlighting in Videos

Paper and Code

Aug 31, 2017

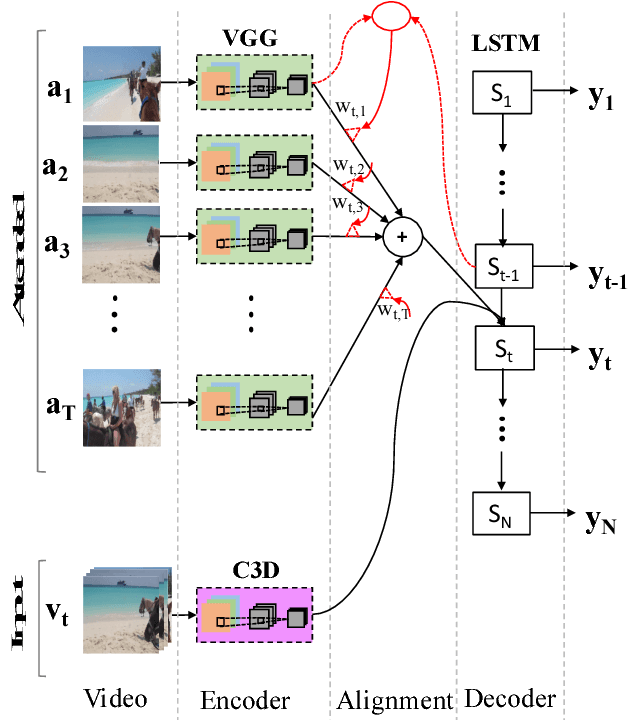

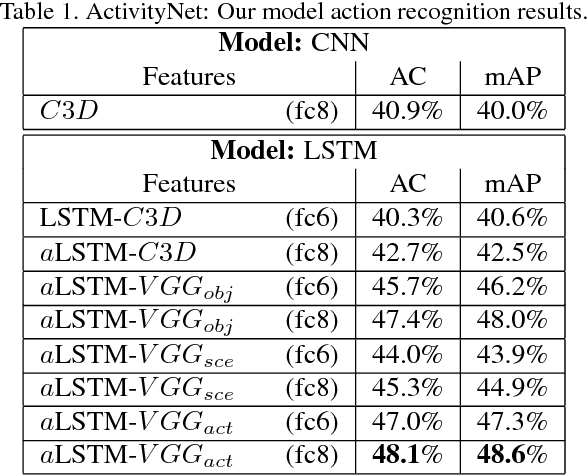

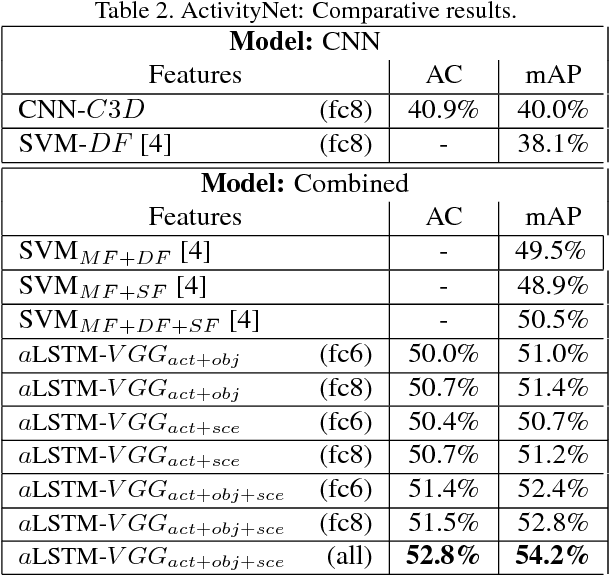

Inspired by recent advances in neural machine translation that jointly align and translate using encoder-decoder networks equipped with attention, we propose an attention-based LSTM model for human activity recognition. Our model jointly learns to classify actions and highlight the frames associated with each action by attending to salient visual information through a jointly learned soft-attention network. We explore attention informed by various forms of visual semantic features, including those encoding actions, objects, and scenes. Qualitatively, we show that soft attention can learn to attend to the important objects and scene information correlated with specific human actions. Quantitatively, we show that our attention-based LSTM outperforms the vanilla LSTM and CNN models used by state-of-the-art methods. On ActivityNet, a large-scale YouTube video dataset, our model outperforms competing methods in action classification.
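To make the soft-attention idea concrete, here is a minimal sketch of additive attention over per-frame features, in the style used by attention-based translation models. It is an illustration under stated assumptions, not the paper's implementation: the abstract does not give the scoring function, so the additive form, the parameter names `W_x`, `W_h`, and `w`, and the helper `highlight_frames` are all hypothetical. The attention weights sum to one across frames, so the highest-weighted frames can be read as the "highlighted" ones.

```python
import math


def soft_attention(frame_feats, hidden, W_x, W_h, w):
    """Additive soft attention over video frames (illustrative sketch).

    frame_feats: list of T frame feature vectors, each of length D
    hidden:      recurrent state vector of length H
    W_x:         D x A projection for frame features (assumed parameter)
    W_h:         H x A projection for the hidden state (assumed parameter)
    w:           length-A scoring vector (assumed parameter)
    Returns (alpha, context): alpha has one weight per frame and sums to 1;
    context is the alpha-weighted average of the frame features.
    """
    A = len(w)
    # Project the hidden state once; it is shared by every frame.
    h_proj = [sum(hidden[j] * W_h[j][a] for j in range(len(hidden)))
              for a in range(A)]

    # One unnormalized relevance score per frame.
    scores = []
    for x in frame_feats:
        x_proj = [sum(x[i] * W_x[i][a] for i in range(len(x)))
                  for a in range(A)]
        scores.append(sum(w[a] * math.tanh(x_proj[a] + h_proj[a])
                          for a in range(A)))

    # Numerically stable softmax over frames.
    m = max(scores)
    exps = [math.exp(s - m) for s in scores]
    z = sum(exps)
    alpha = [e / z for e in exps]

    # Context vector: attention-weighted sum of frame features.
    D = len(frame_feats[0])
    context = [sum(alpha[t] * frame_feats[t][i]
                   for t in range(len(frame_feats)))
               for i in range(D)]
    return alpha, context


def highlight_frames(alpha, k):
    """Return the indices of the k frames with the largest attention weight."""
    order = sorted(range(len(alpha)), key=lambda t: alpha[t], reverse=True)
    return order[:k]
```

In a full model of this kind, the context vector would typically be fed to the LSTM at each step and the parameters learned jointly with the classifier; here they are passed in as plain lists to keep the sketch self-contained.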
