A super-polynomial lower bound for learning nonparametric mixtures


Mar 28, 2022

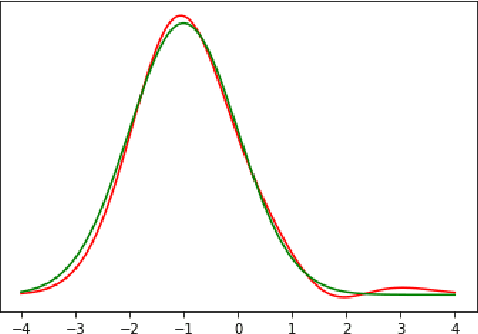

We study the problem of learning nonparametric distributions in a finite mixture, and establish a super-polynomial lower bound on the sample complexity of learning the component distributions in such models. Specifically, we are given i.i.d. samples from $f$, where $$ f=\sum_{i=1}^k w_i f_i, \quad\sum_{i=1}^k w_i=1, \quad w_i>0, $$ and we are interested in learning each component $f_i$. Without any assumptions on the $f_i$, this problem is ill-posed. To make the components identifiable, we assume that each $f_i$ can be written as a convolution of a Gaussian and a compactly supported density $\nu_i$, with $\text{supp}(\nu_i)\cap \text{supp}(\nu_j)=\emptyset$ for $i\neq j$. Our main result shows that $\Omega\left(\left(\frac{1}{\varepsilon}\right)^{C\log\log \frac{1}{\varepsilon}}\right)$ samples are required for estimating each $f_i$. The proof relies on a fast rate for approximation with Gaussians, which may be of independent interest. This result has important implications for the hardness of learning more general nonparametric latent variable models that arise in machine learning applications.
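To make the setup concrete, the following is a minimal Python sketch of sampling from one such mixture in one dimension. It is an illustration only, not the paper's construction: the choice of uniform densities for each $\nu_i$, the unit Gaussian scale `sigma`, and the function names `sample_mixture` and `lower_bound_exponent` are all assumptions made here. The second function evaluates only the exponent $C\log\log\frac{1}{\varepsilon}$ from the stated bound, with the unspecified constant $C$ set to 1 by default, to show that it grows without bound as $\varepsilon \to 0$, which is why the rate is super-polynomial.

```python
import numpy as np


def sample_mixture(n, weights, supports, sigma=1.0, seed=0):
    """Draw n samples from f = sum_i w_i * (Gaussian convolved with nu_i).

    Assumption for illustration: each nu_i is uniform on the interval
    supports[i] = (lo_i, hi_i), and the intervals are pairwise disjoint.
    """
    rng = np.random.default_rng(seed)
    weights = np.asarray(weights, dtype=float)
    if np.any(weights <= 0) or not np.isclose(weights.sum(), 1.0):
        raise ValueError("weights must be positive and sum to 1")

    # Check that the supports of the nu_i do not overlap.
    ordered = sorted(supports)
    for (_, hi_prev), (lo_next, _) in zip(ordered, ordered[1:]):
        if hi_prev >= lo_next:
            raise ValueError("supports must be pairwise disjoint")

    # Pick a component for each sample according to the mixing weights.
    labels = rng.choice(len(weights), size=n, p=weights)
    lo = np.array([a for a, _ in supports])[labels]
    hi = np.array([b for _, b in supports])[labels]

    # A draw from (Gaussian * nu_i) is a draw from nu_i plus Gaussian noise.
    centers = rng.uniform(lo, hi)
    samples = centers + sigma * rng.normal(size=n)
    return samples, labels


def lower_bound_exponent(eps, C=1.0):
    """Exponent C * log(log(1/eps)) in the bound (1/eps)^(C log log (1/eps)).

    C is an unspecified constant in the abstract; C=1.0 is a placeholder.
    Requires eps < 1/e so that log(log(1/eps)) is positive.
    """
    return C * np.log(np.log(1.0 / eps))


if __name__ == "__main__":
    x, z = sample_mixture(5, [0.3, 0.7], [(-3.0, -2.0), (2.0, 3.0)])
    print(x, z)
    for eps in (1e-2, 1e-4, 1e-8):
        print(eps, lower_bound_exponent(eps))
```

A polynomial rate would correspond to a fixed exponent on $\frac{1}{\varepsilon}$. Here the exponent itself increases as $\varepsilon$ shrinks, so no fixed polynomial in $\frac{1}{\varepsilon}$ dominates the bound.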
