A Self-Penalizing Objective Function for Scalable Interaction Detection

Dec 13, 2020

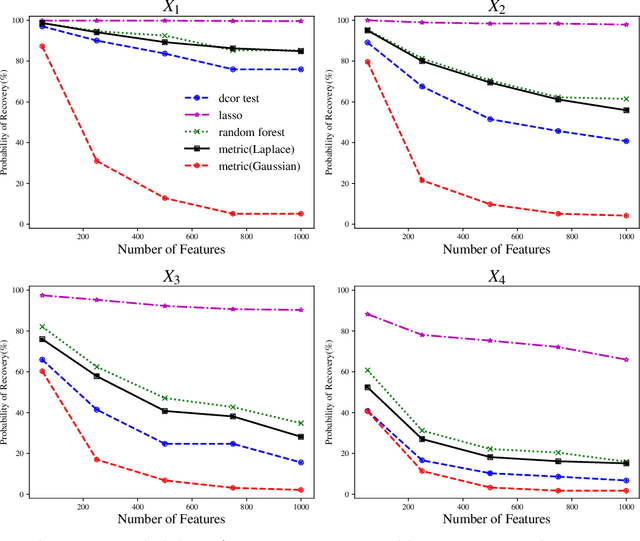

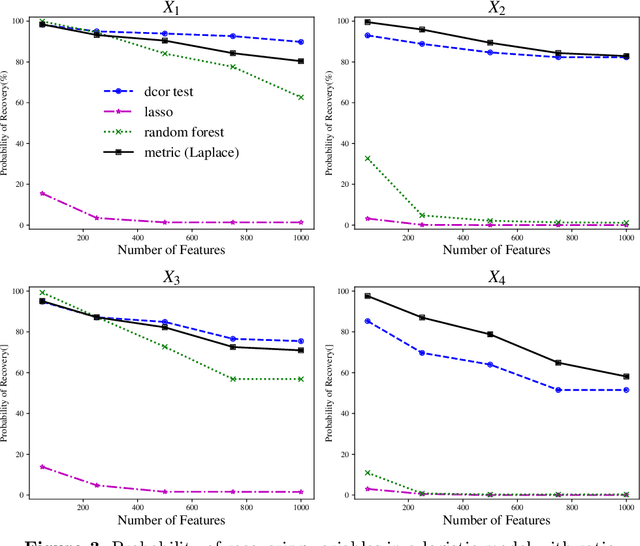

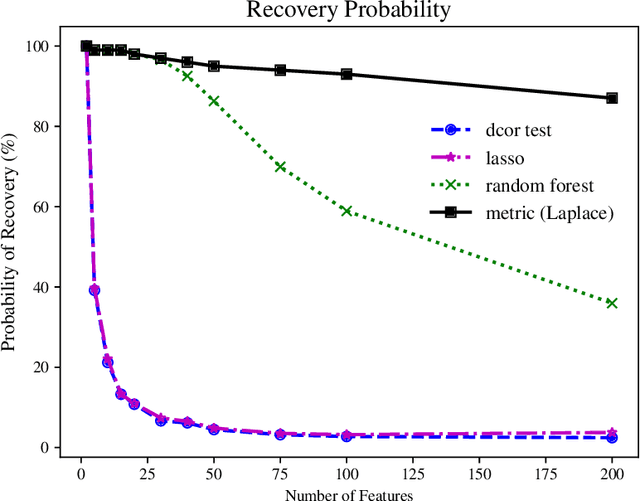

We tackle the problem of nonparametric variable selection with a focus on discovering interactions between variables. With $p$ variables there are $O(p^s)$ possible order-$s$ interactions, making exhaustive search infeasible. It is nonetheless possible to identify the variables involved in interactions at only linear computational cost, $O(p)$. The trick is to maximize a class of parametrized nonparametric dependence measures, which we call metric learning objectives; the landscape of these nonconvex objective functions is sensitive to interactions, but the objectives themselves do not explicitly model interactions. Three properties make metric learning objectives highly attractive: (a) the stationary points of the objective are automatically sparse (i.e., the objective performs selection), so no explicit $\ell_1$ penalization is needed; (b) all stationary points of the objective exclude noise variables with high probability; (c) all signal variables are guaranteed to be recovered without needing to reach the objective's global maximum or special stationary points. The second and third properties mean that all our theoretical results apply in the practical case where one uses gradient ascent to maximize the metric learning objective. While not all metric learning objectives enjoy good statistical power, we design an objective based on $\ell_1$ kernels that does exhibit favorable power: it recovers (i) main effects with $n \sim \log p$ samples, (ii) hierarchical interactions with $n \sim \log p$ samples, and (iii) order-$s$ pure interactions with $n \sim p^{2(s-1)}\log p$ samples.
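To make the idea of maximizing a kernel-based objective by gradient ascent concrete, here is a minimal sketch. It is an illustration only and is not claimed to be the objective defined in the paper: it assumes a weighted Laplace ($\ell_1$) kernel $k_\beta(x, x') = \exp(-\sum_k \beta_k |x_k - x'_k|)$, an empirical dependence measure $F(\beta) = n^{-2}\sum_{i,j} \tilde y_i \tilde y_j k_\beta(x_i, x_j)$ with centered responses $\tilde y$, and a projection onto $\beta \ge 0$. The function names, step size, iteration count, and initialization are all hypothetical choices.

```python
import numpy as np


def objective_and_grad(beta, X, y):
    """Illustrative objective (an assumption, not the paper's definition).

    F(beta) = (1/n^2) * sum_{i,j} yc_i * yc_j * exp(-sum_k beta_k * |X_ik - X_jk|)
    where yc is the centered response. Returns (F, dF/dbeta).
    """
    n = X.shape[0]
    yc = y - y.mean()
    # Pairwise coordinate-wise absolute differences, shape (n, n, p).
    D = np.abs(X[:, None, :] - X[None, :, :])
    # Weighted l1 (Laplace) kernel matrix, shape (n, n).
    K = np.exp(-D @ beta)
    # Response-weighted kernel entries, shape (n, n).
    W = np.outer(yc, yc) * K
    value = W.sum() / n**2
    # d/dbeta_k of exp(-sum_k beta_k D_ijk) is -D_ijk * K_ij.
    grad = -np.einsum("ij,ijk->k", W, D) / n**2
    return value, grad


def projected_gradient_ascent(X, y, step=0.5, n_iter=200, init=0.1):
    """Plain gradient ascent on beta, clipped at zero after every step."""
    beta = np.full(X.shape[1], init, dtype=float)
    for _ in range(n_iter):
        _, grad = objective_and_grad(beta, X, y)
        # Projection onto the nonnegative orthant; coordinates can hit exactly 0.
        beta = np.maximum(beta + step * grad, 0.0)
    return beta


# Hypothetical usage: 2 signal variables (one main effect plus an interaction)
# and 8 noise variables. The data-generating model is made up for this sketch.
rng = np.random.default_rng(0)
X = rng.uniform(-1.0, 1.0, size=(200, 10))
y = X[:, 0] + X[:, 0] * X[:, 1] + 0.1 * rng.normal(size=200)

beta_hat = projected_gradient_ascent(X, y)
selected = np.flatnonzero(beta_hat > 0)  # coordinates left with positive weight
print(beta_hat)
print(selected)
```

Each iteration costs $O(n^2 p)$ in this naive form, which is linear in the number of variables $p$. Because the projection clips weights at zero, a coordinate whose gradient stays negative is driven to exactly zero without any added penalty term; whether that happens for the noise coordinates in a given run depends on the data, step size, and initialization chosen here.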
