A Novel Chaos Theory Inspired Neuronal Architecture

Paper and Code

May 19, 2019

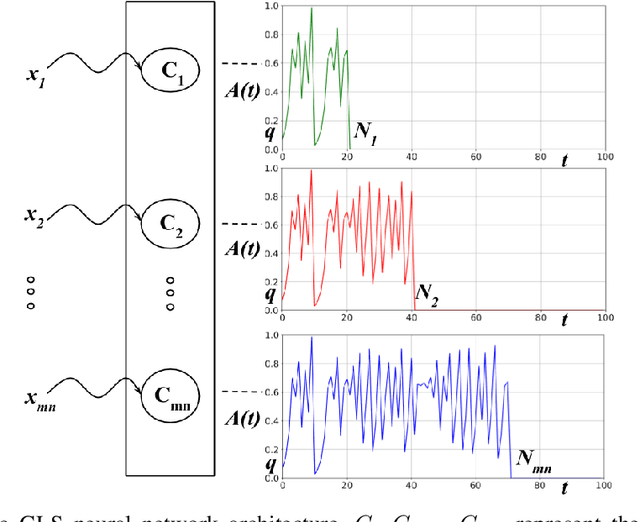

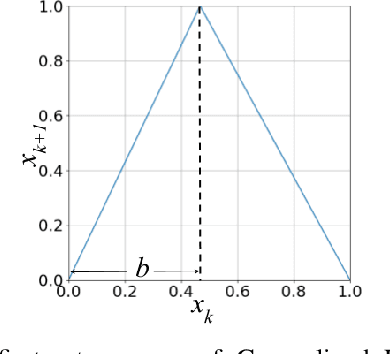

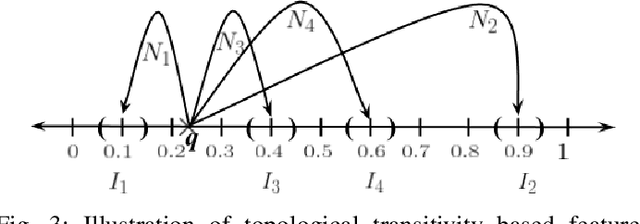

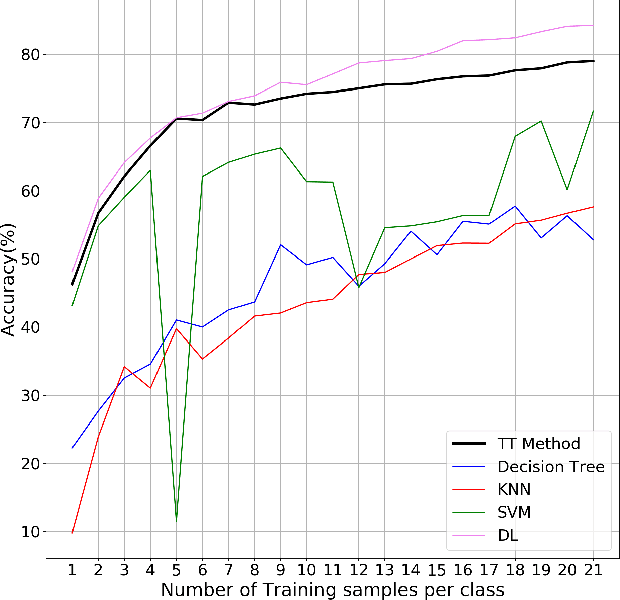

The practical success of widely used machine learning (ML) and deep learning (DL) algorithms in Artificial Intelligence (AI) community owes to availability of large datasets for training and huge computational resources. Despite the enormous practical success of AI, these algorithms are only loosely inspired from the biological brain and do not mimic any of the fundamental properties of neurons in the brain, one such property being the chaotic firing of biological neurons. This motivates us to develop a novel neuronal architecture where the individual neurons are intrinsically chaotic in nature. By making use of the topological transitivity property of chaos, our neuronal network is able to perform classification tasks with very less number of training samples. For the MNIST dataset, with as low as $0.1 \%$ of the total training data, our method outperforms ML and matches DL in classification accuracy for up to $7$ training samples/class. For the Iris dataset, our accuracy is comparable with ML algorithms, and even with just two training samples/class, we report an accuracy as high as $95.8 \%$. This work highlights the effectiveness of chaos and its properties for learning and paves the way for chaos-inspired neuronal architectures by closely mimicking the chaotic nature of neurons in the brain.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge