A Natural Extension To Online Algorithms For Hybrid RL With Limited Coverage

Paper and Code

Mar 07, 2024

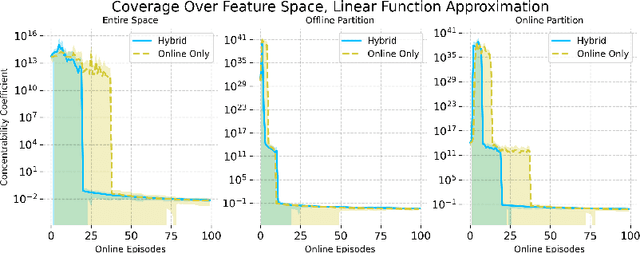

Hybrid Reinforcement Learning (RL), leveraging both online and offline data, has garnered recent interest, yet research on its provable benefits remains sparse. Additionally, many existing hybrid RL algorithms (Song et al., 2023; Nakamoto et al., 2023; Amortila et al., 2024) impose coverage assumptions on the offline dataset, but we show that this is unnecessary. A well-designed online algorithm should "fill in the gaps" in the offline dataset, exploring states and actions that the behavior policy did not explore. Unlike previous approaches that focus on estimating the offline data distribution to guide online exploration (Li et al., 2023b), we show that a natural extension to standard optimistic online algorithms -- warm-starting them by including the offline dataset in the experience replay buffer -- achieves similar provable gains from hybrid data even when the offline dataset does not have single-policy concentrability. We accomplish this by partitioning the state-action space into two, bounding the regret on each partition through an offline and an online complexity measure, and showing that the regret of this hybrid RL algorithm can be characterized by the best partition -- despite the algorithm not knowing the partition itself. As an example, we propose DISC-GOLF, a modification of an existing optimistic online algorithm with general function approximation called GOLF used in Jin et al. (2021); Xie et al. (2022a), and show that it demonstrates provable gains over both online-only and offline-only reinforcement learning, with competitive bounds when specialized to the tabular, linear and block MDP cases. Numerical simulations further validate our theory that hybrid data facilitates more efficient exploration, supporting the potential of hybrid RL in various scenarios.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge