A Multiscale Environment for Learning by Diffusion

Jan 31, 2021

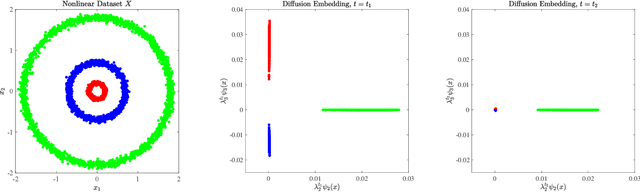

Clustering algorithms partition a dataset into groups of similar points. The clustering problem is very general, and several different partitions of the same dataset may each be considered correct and useful. To fully understand such data, one must consider it at a variety of scales, ranging from coarse to fine. We introduce the Multiscale Environment for Learning by Diffusion (MELD) data model, a family of clusterings parameterized by nonlinear diffusion on the dataset. We show that the MELD data model precisely captures latent multiscale structure in data and facilitates its analysis. To efficiently learn the multiscale structure observed in many real datasets, we introduce the Multiscale Learning by Unsupervised Nonlinear Diffusion (M-LUND) clustering algorithm, which is derived from a diffusion process at a range of temporal scales. We provide theoretical guarantees for the algorithm's performance and establish its computational efficiency. Finally, we show that the M-LUND clustering algorithm detects the latent structure in a range of synthetic and real datasets.
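The abstract describes M-LUND only at a high level, as derived from a diffusion process at a range of temporal scales. As a minimal sketch of that ingredient alone, and assuming the standard diffusion-distance construction from a Gaussian-kernel random walk, the code below computes pairwise diffusion distances at several diffusion times t. The kernel, the bandwidth `sigma`, the toy data, and every function name here are hypothetical illustrations. This is not the authors' M-LUND implementation, which must also turn such distances into clusterings.

```python
import math


def gaussian_random_walk(points, sigma):
    """Row-stochastic matrix P from a Gaussian kernel, plus its stationary distribution."""
    n = len(points)
    # Symmetric affinities W[i][j] = exp(-|x_i - x_j|^2 / sigma^2) (hypothetical kernel choice).
    W = [[math.exp(-math.dist(points[i], points[j]) ** 2 / sigma ** 2) for j in range(n)]
         for i in range(n)]
    degrees = [sum(row) for row in W]
    P = [[W[i][j] / degrees[i] for j in range(n)] for i in range(n)]
    # For P = D^{-1} W with symmetric W, pi_i = d_i / sum(d) is stationary and P is reversible.
    total = sum(degrees)
    pi = [d / total for d in degrees]
    return P, pi


def mat_mul(A, B):
    """Plain-Python product of two square matrices stored as lists of rows."""
    n = len(A)
    return [[sum(A[i][k] * B[k][j] for k in range(n)) for j in range(n)] for i in range(n)]


def diffusion_distances(P, pi, t):
    """D_t(x_i, x_j) = || (P^t[i, :] - P^t[j, :]) / sqrt(pi) ||_2."""
    n = len(P)
    Pt = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]  # P^0 = I
    for _ in range(t):
        Pt = mat_mul(Pt, P)
    return [[math.sqrt(sum((Pt[i][k] - Pt[j][k]) ** 2 / pi[k] for k in range(n)))
             for j in range(n)] for i in range(n)]


def multiscale_diffusion_distances(points, sigma, times):
    """Diffusion distances at each time t; larger t gives a coarser view of the data."""
    P, pi = gaussian_random_walk(points, sigma)
    return {t: diffusion_distances(P, pi, t) for t in times}


if __name__ == "__main__":
    # Two tight pairs of points far apart on a line (toy data, not from the paper).
    pts = [(0.0, 0.0), (0.5, 0.0), (5.0, 0.0), (5.5, 0.0)]
    for t, D in multiscale_diffusion_distances(pts, sigma=1.0, times=[1, 4, 16]).items():
        print(f"t={t}: within-pair {D[0][1]:.4f}, across-pairs {D[0][2]:.4f}")
```

On this toy data the within-pair distances shrink quickly as t grows, while the across-pair distance stays large because almost no probability mass crosses the gap between the pairs. Because the random walk is reversible, diffusion distances can only stay the same or decrease as t increases, which is what lets t act as a coarse-to-fine scale parameter.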