A Multimodal Late Fusion Model for E-Commerce Product Classification


Aug 14, 2020

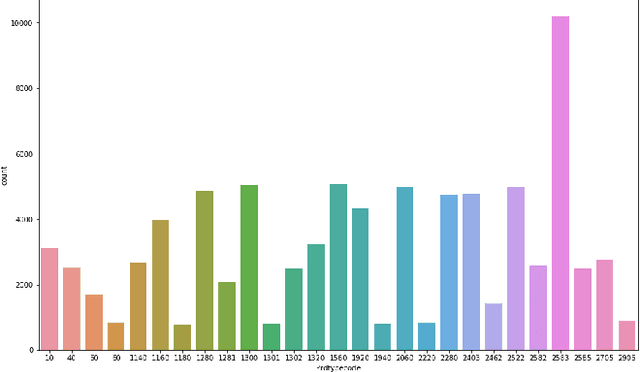

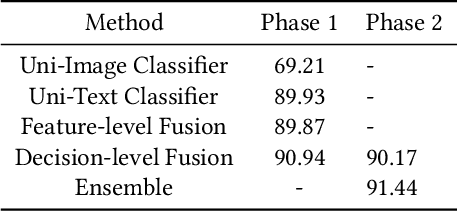

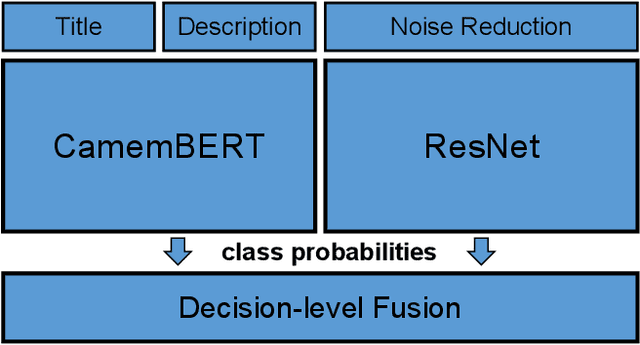

The cataloging of product listings is a fundamental problem for most e-commerce platforms. Although unimodal methods have produced promising results, their performance can be expected to improve further when multimodal product information is taken into account. In this study, we investigated a multimodal late fusion approach based on text and image modalities to categorize e-commerce products on Rakuten. Specifically, we developed modality-specific state-of-the-art deep neural networks for each input modality and then fused them at the decision level. Experimental results on the Multimodal Product Classification Task of the SIGIR 2020 E-Commerce Workshop Data Challenge show that the proposed method outperforms unimodal and other multimodal methods. Our team, pa_curis, won first place with a macro-F1 of 0.9144 on the final leaderboard.
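To make "fusion at the decision level" concrete, the following is a minimal sketch and not the authors' implementation. It assumes each modality-specific model outputs per-class probabilities and that the two outputs are combined by a weighted average; the abstract does not state the actual fusion rule. The names `late_fusion`, `macro_f1`, and `text_weight`, and all numbers in the toy data, are hypothetical and chosen only for illustration.

```python
import numpy as np


def late_fusion(text_probs, image_probs, text_weight=0.5):
    """Combine class probabilities from two models by a weighted average.

    Assumption: weighted averaging is one common decision-level fusion
    rule; the source does not say which rule the authors used.
    """
    text_probs = np.asarray(text_probs, dtype=float)
    image_probs = np.asarray(image_probs, dtype=float)
    if text_probs.shape != image_probs.shape:
        raise ValueError("both models must score the same samples and classes")
    fused = text_weight * text_probs + (1.0 - text_weight) * image_probs
    # Predicted class per sample is the one with the highest fused score.
    return fused.argmax(axis=1)


def macro_f1(y_true, y_pred, num_classes):
    """Unweighted mean of per-class F1 scores (the challenge metric)."""
    y_true = np.asarray(y_true)
    y_pred = np.asarray(y_pred)
    scores = []
    for c in range(num_classes):
        tp = np.sum((y_pred == c) & (y_true == c))
        fp = np.sum((y_pred == c) & (y_true != c))
        fn = np.sum((y_pred != c) & (y_true == c))
        denom = 2 * tp + fp + fn
        # A class with no true or predicted samples contributes 0 here.
        scores.append(2 * tp / denom if denom else 0.0)
    return float(np.mean(scores))


# Toy data (hypothetical): 4 products, 3 categories.
text_probs = [[0.7, 0.2, 0.1], [0.4, 0.5, 0.1], [0.2, 0.3, 0.5], [0.1, 0.8, 0.1]]
image_probs = [[0.6, 0.3, 0.1], [0.7, 0.2, 0.1], [0.1, 0.2, 0.7], [0.2, 0.6, 0.2]]
y_true = [0, 0, 2, 1]

text_only = np.asarray(text_probs).argmax(axis=1)
fused_pred = late_fusion(text_probs, image_probs)

print("text-only macro-F1:", macro_f1(y_true, text_only, num_classes=3))
print("fused macro-F1:", macro_f1(y_true, fused_pred, num_classes=3))
```

In this toy data the text model misclassifies the second product, and the image model's more confident score corrects it after fusion. In practice `text_weight` would be tuned on held-out data.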
