A language model based approach towards large scale and lightweight language identification systems

Paper and Code

Oct 13, 2015

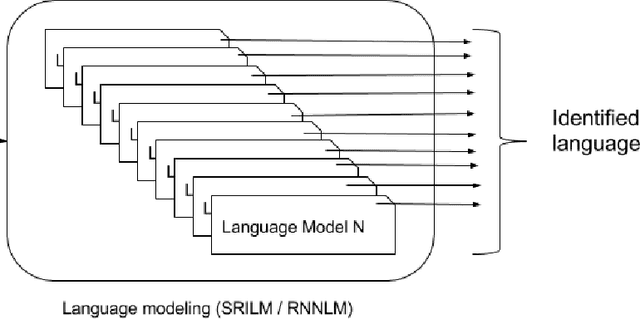

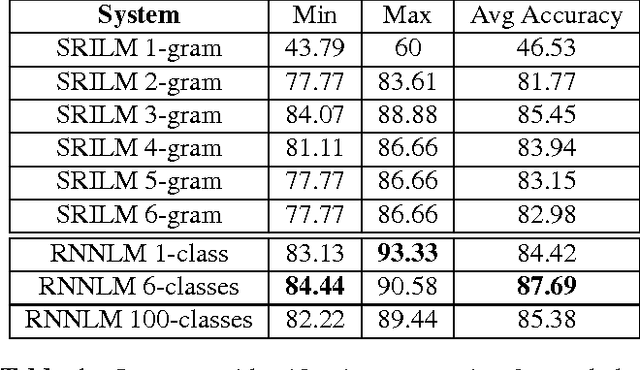

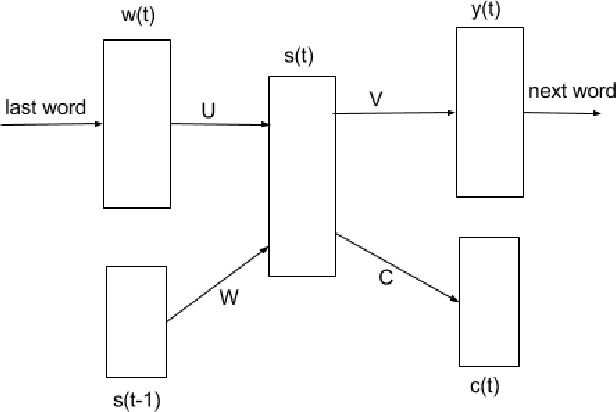

Multilingual spoken dialogue systems have gained prominence in the recent past necessitating the requirement for a front-end Language Identification (LID) system. Most of the existing LID systems rely on modeling the language discriminative information from low-level acoustic features. Due to the variabilities of speech (speaker and emotional variabilities, etc.), large-scale LID systems developed using low-level acoustic features suffer from a degradation in the performance. In this approach, we have attempted to model the higher level language discriminative phonotactic information for developing an LID system. In this paper, the input speech signal is tokenized to phone sequences by using a language independent phone recognizer. The language discriminative phonotactic information in the obtained phone sequences are modeled using statistical and recurrent neural network based language modeling approaches. As this approach, relies on higher level phonotactical information it is more robust to variabilities of speech. Proposed approach is computationally light weight, highly scalable and it can be used in complement with the existing LID systems.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge