A Generative Symbolic Model for More General Natural Language Understanding and Reasoning


May 06, 2021

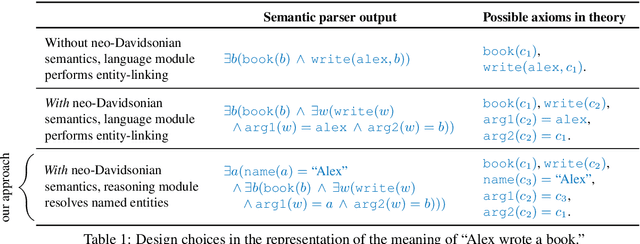

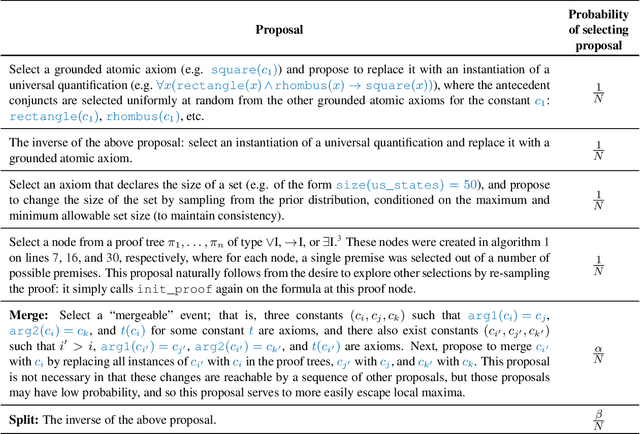

We present a new fully symbolic Bayesian model of semantic parsing and reasoning, which we hope will be the first step in a research program toward more domain- and task-general NLU and AI. Humans build internal mental models of their observations, and these models greatly aid their ability to understand and reason about a wide variety of problems. We aim to capture this ability in our model, which is fully interpretable and Bayesian and is designed specifically with generality in mind; it therefore provides a clearer path for future research to expand its capabilities. We derive and implement an inference algorithm and evaluate it on an out-of-domain ProofWriter question-answering/reasoning task, achieving zero-shot accuracies of 100% and 93.43%, depending on the experimental setting, thereby demonstrating its value as a proof of concept.
