A generalized stacked reinforcement learning method for sampled systems


Aug 23, 2021

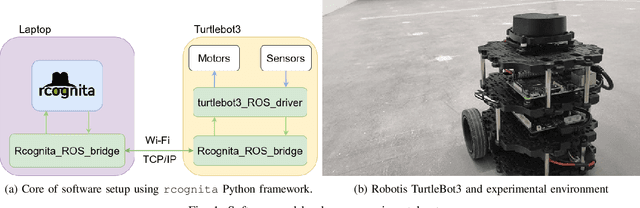

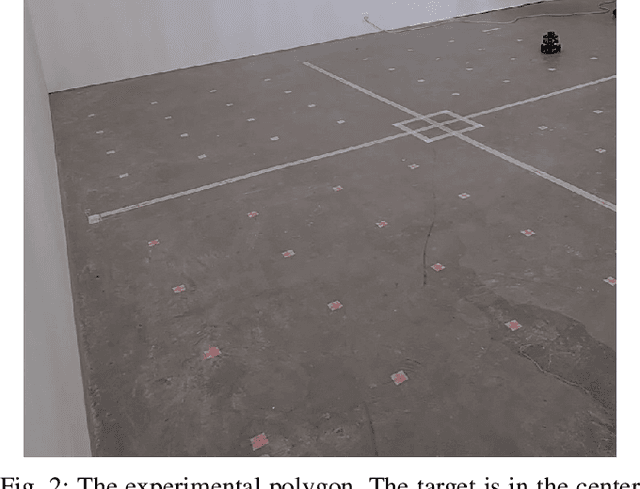

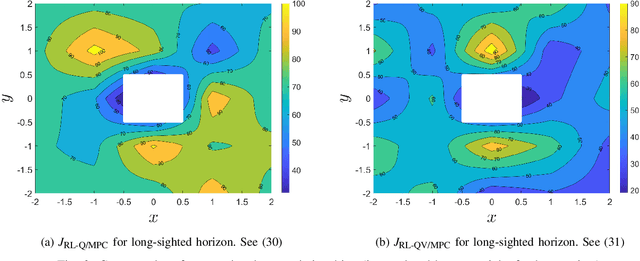

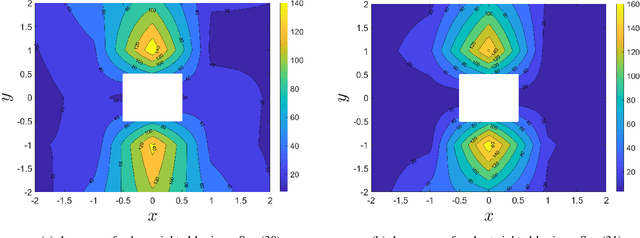

A common setting of reinforcement learning (RL) is a Markov decision process (MDP) in which the environment is a stochastic discrete-time dynamical system. Whereas MDPs suit applications such as video games or puzzles, physical systems evolve in continuous time. Continuous-time RL methods are known, but they have limitations, for example the collapse of Q-learning. A more general variant of RL is digital: the value and policy are updated at discrete moments in time. The agent-environment loop then amounts to a sampled system, of which sample-and-hold is a special case. In this paper, we propose and benchmark two RL methods suitable for sampled systems. Specifically, we hybridize model-predictive control (MPC) with critics that learn the Q-function and the value function. Optimality is analyzed, and performance is compared in an experimental case study with a mobile robot.
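To make the sampled-system setting concrete, the following is a minimal sketch of a sample-and-hold agent-environment loop in which a short MPC-style horizon of running costs is closed by a critic estimate. It is not the paper's algorithm: the scalar dynamics `dx/dt = -x + u`, the quadratic `running_cost`, the fixed quadratic `critic`, the candidate action set, and all function names are assumptions chosen purely for illustration, and the critic here is hand-set rather than learned.

```python
import itertools


def simulate_hold(x, u, dt_sample, n_substeps=100):
    """Integrate the assumed dynamics dx/dt = -x + u over one sampling
    period with the action u held constant (sample-and-hold)."""
    h = dt_sample / n_substeps
    for _ in range(n_substeps):
        x = x + h * (-x + u)  # explicit Euler substep
    return x


def running_cost(x, u):
    # Hypothetical quadratic running cost.
    return x * x + 0.1 * u * u


def critic(x, weight=1.0):
    # Hypothetical fixed quadratic value estimate; a real critic would be learned.
    return weight * x * x


def horizon_objective(x0, actions, dt_sample):
    """Running costs accumulated over the horizon, closed by the critic
    evaluated at the predicted final state."""
    x, total = x0, 0.0
    for u in actions:
        total += running_cost(x, u) * dt_sample
        x = simulate_hold(x, u, dt_sample)
    return total + critic(x)


def choose_action(x0, dt_sample, horizon=3, candidates=(-1.0, 0.0, 1.0)):
    """Brute-force search over held action sequences; return the first action."""
    best = min(
        itertools.product(candidates, repeat=horizon),
        key=lambda seq: horizon_objective(x0, seq, dt_sample),
    )
    return best[0]


def run_loop(x0, dt_sample=0.1, n_steps=50):
    """Digital control loop: the action is recomputed only at sampling
    instants and held constant while the state evolves in between."""
    x, trajectory = x0, [x0]
    for _ in range(n_steps):
        u = choose_action(x, dt_sample)
        x = simulate_hold(x, u, dt_sample)
        trajectory.append(x)
    return trajectory


if __name__ == "__main__":
    states = run_loop(2.0)
    print(f"initial state {states[0]:.3f}, final state {states[-1]:.3f}")
```

The brute-force search over a small discrete action set keeps the sketch dependency-free; a practical controller would use a numerical optimizer over continuous actions and would update the critic from observed data.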
