A Fast and Scalable Pathwise-Solver for Group Lasso and Elastic Net Penalized Regression via Block-Coordinate Descent

Paper and Code

May 14, 2024

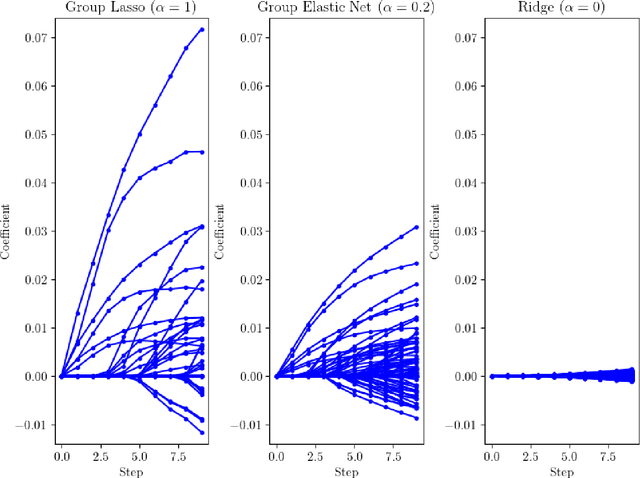

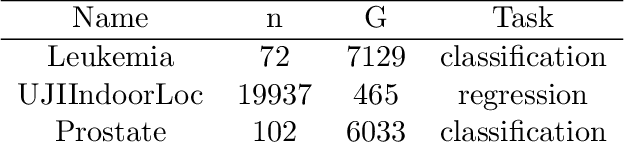

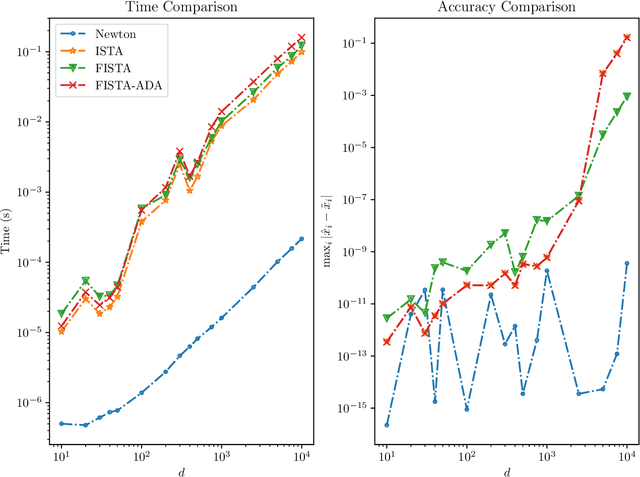

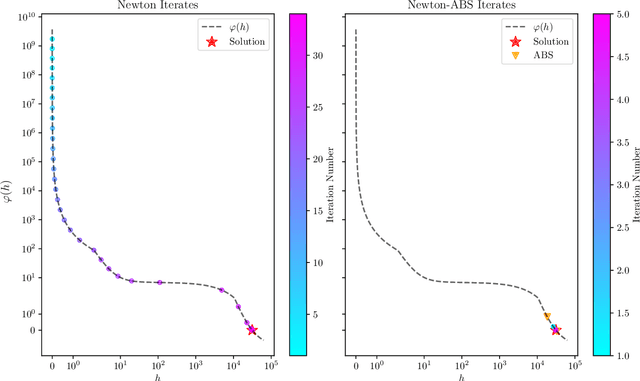

We develop fast and scalable algorithms based on block-coordinate descent to solve the group lasso and the group elastic net for generalized linear models along a regularization path. Special attention is given when the loss is the usual least squares loss (Gaussian loss). We show that each block-coordinate update can be solved efficiently using Newton's method and further improved using an adaptive bisection method, solving these updates with a quadratic convergence rate. Our benchmarks show that our package adelie performs 3 to 10 times faster than the next fastest package on a wide array of both simulated and real datasets. Moreover, we demonstrate that our package is a competitive lasso solver as well, matching the performance of the popular lasso package glmnet.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge