A Dynamic-Adversarial Mining Approach to the Security of Machine Learning

Paper and Code

Mar 24, 2018

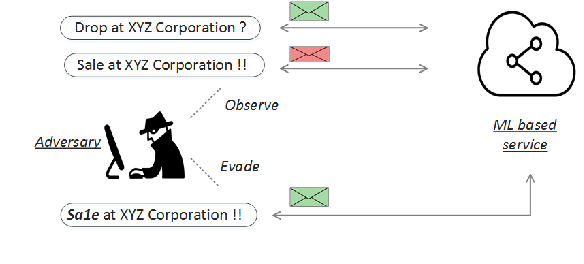

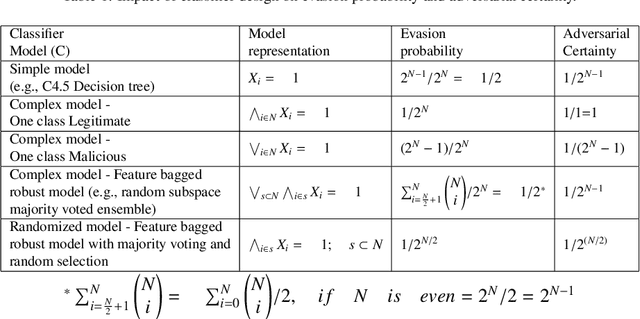

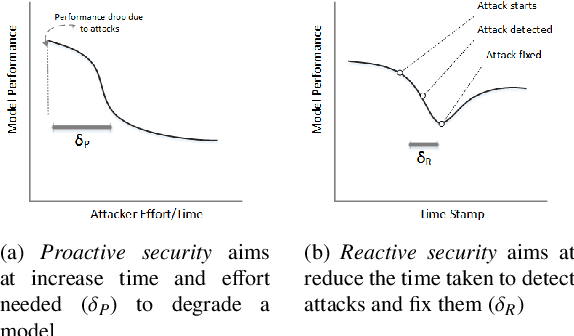

Operating in a dynamic real world environment requires a forward thinking and adversarial aware design for classifiers, beyond fitting the model to the training data. In such scenarios, it is necessary to make classifiers - a) harder to evade, b) easier to detect changes in the data distribution over time, and c) be able to retrain and recover from model degradation. While most works in the security of machine learning has concentrated on the evasion resistance (a) problem, there is little work in the areas of reacting to attacks (b and c). Additionally, while streaming data research concentrates on the ability to react to changes to the data distribution, they often take an adversarial agnostic view of the security problem. This makes them vulnerable to adversarial activity, which is aimed towards evading the concept drift detection mechanism itself. In this paper, we analyze the security of machine learning, from a dynamic and adversarial aware perspective. The existing techniques of Restrictive one class classifier models, Complex learning models and Randomization based ensembles, are shown to be myopic as they approach security as a static task. These methodologies are ill suited for a dynamic environment, as they leak excessive information to an adversary, who can subsequently launch attacks which are indistinguishable from the benign data. Based on empirical vulnerability analysis against a sophisticated adversary, a novel feature importance hiding approach for classifier design, is proposed. The proposed design ensures that future attacks on classifiers can be detected and recovered from. The proposed work presents motivation, by serving as a blueprint, for future work in the area of Dynamic-Adversarial mining, which combines lessons learned from Streaming data mining, Adversarial learning and Cybersecurity.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge