A design of human-like robust AI machines in object identification

Jan 07, 2021

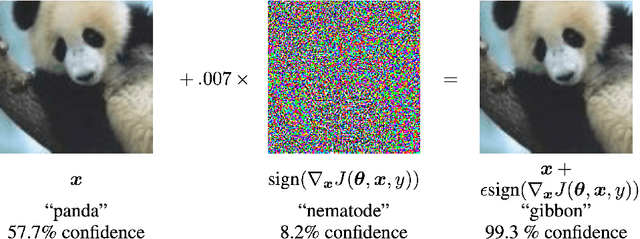

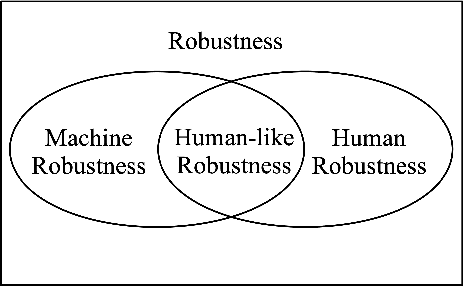

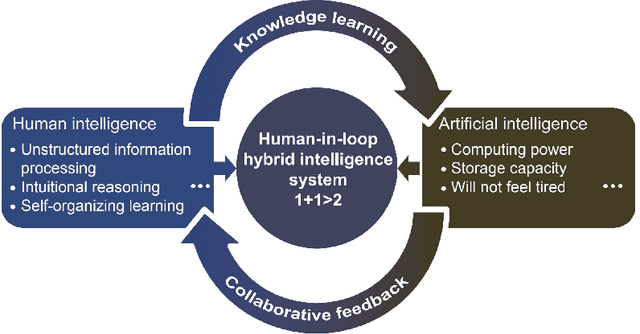

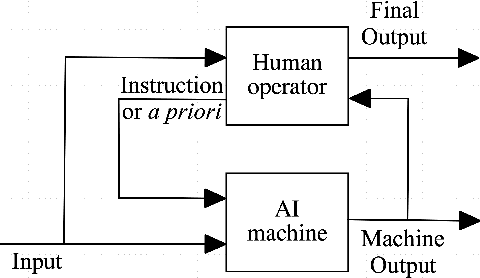

This is a perspective paper inspired by the study of the Turing Test, proposed by A. M. Turing (23 June 1912 - 7 June 1954) in 1950. Following one important implication of the Turing Test, namely enabling a machine to exhibit human-like behavior or performance, we define human-like robustness (HLR) for AI machines. The new definition aims to endow AI machines with HLR and to provide a basis for evaluating them in terms of HLR. We restrict the discussion to one specific task, object identification, because it is the most common task people perform in daily life. Like Turing, who took a perspective, or design, position, we propose how HLR AI machines could be achieved without constructing them or conducting real experiments. The proposed solution consists of three important features of the machines. First, HLR machines utilize human common sense to realize causal inference. Second, they make decisions in a semantic space so that each decision can be interpreted. Third, they include a "human-in-the-loop" setting for advancing HLR machines. We illustrate the proposed design of HLR machines with an "identification game". The present paper is an attempt to learn from the Turing Test and to explore further towards the design of human-like AI machines.
