A Deep Learning Approach to Analyzing Continuous-Time Systems

Paper and Code

Sep 25, 2022

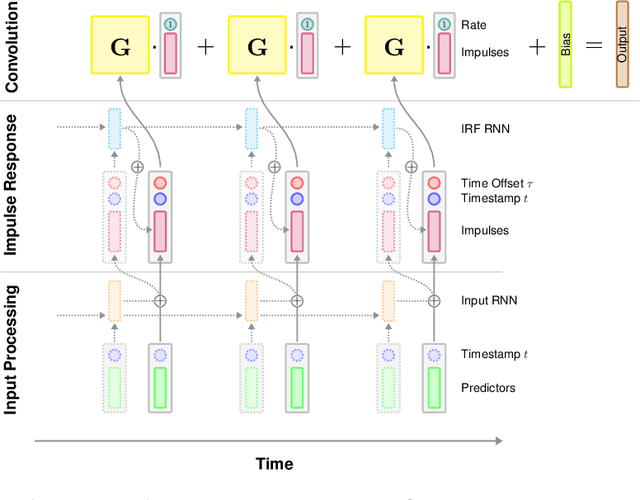

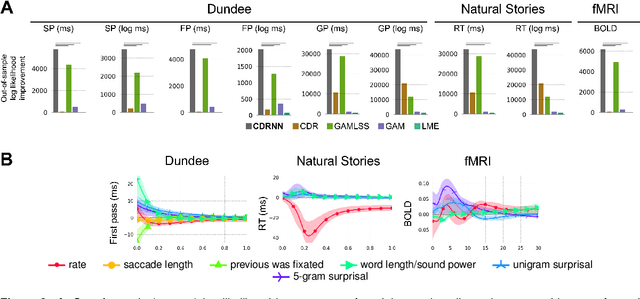

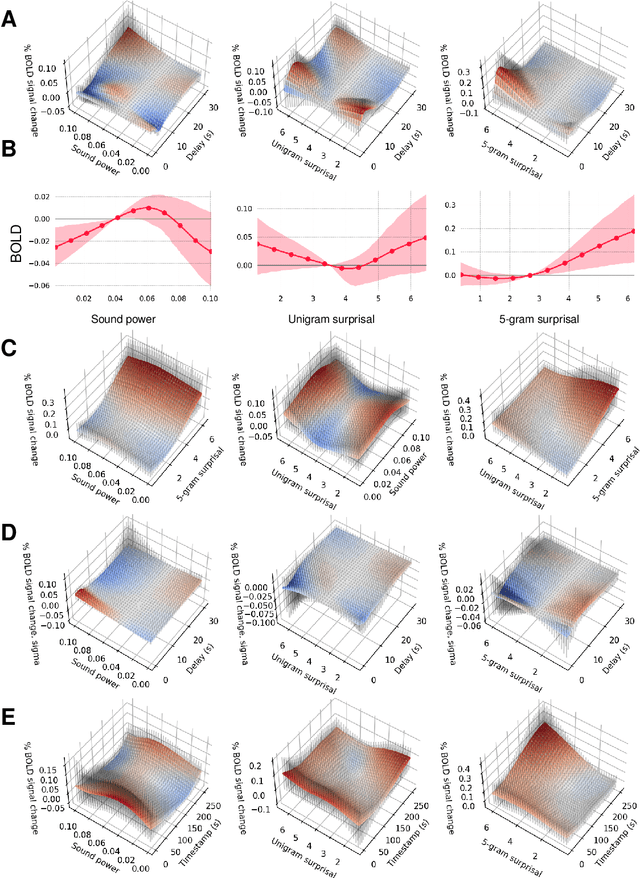

Scientists often use observational time series data to study complex natural processes, from climate change to civil conflict to brain activity. But regression analyses of these data often assume simplistic dynamics. Recent advances in deep learning have yielded startling improvements to the performance of models of complex processes, from speech comprehension to nuclear physics to competitive gaming. But deep learning is generally not used for scientific analysis. Here, we bridge this gap by showing that deep learning can be used, not just to imitate, but to analyze complex processes, providing flexible function approximation while preserving interpretability. Our approach -- the continuous-time deconvolutional regressive neural network (CDRNN) -- relaxes standard simplifying assumptions (e.g., linearity, stationarity, and homoscedasticity) that are implausible for many natural systems and may critically affect the interpretation of data. We evaluate CDRNNs on incremental human language processing, a domain with complex continuous dynamics. We demonstrate dramatic improvements to predictive likelihood in behavioral and neuroimaging data, and we show that CDRNNs enable flexible discovery of novel patterns in exploratory analyses, provide robust control of possible confounds in confirmatory analyses, and open up research questions that are otherwise hard to study using observational data.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge