A Contrastive Learning Based Convolutional Neural Network for ERP Brain-Computer Interfaces

Paper and Code

Jul 02, 2024

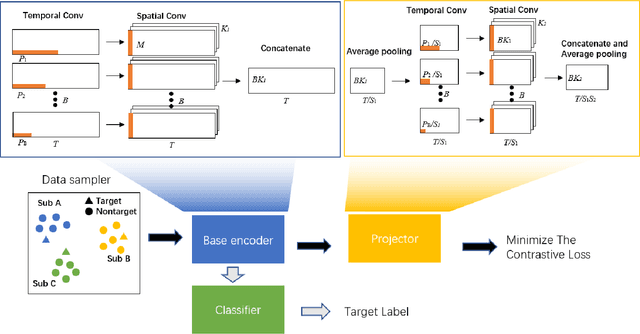

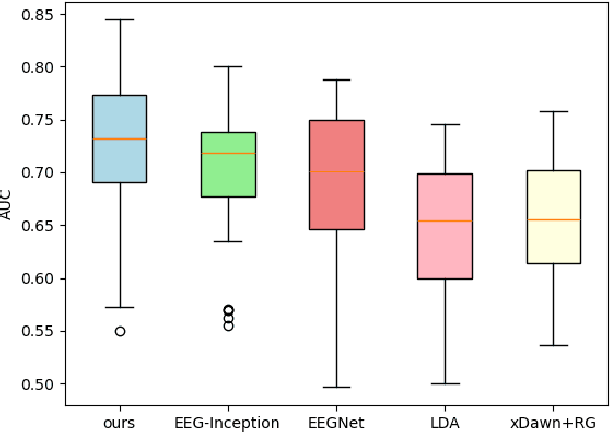

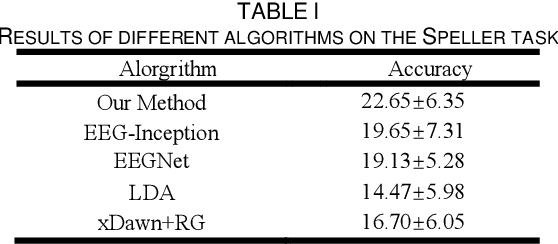

ERP-based EEG detection is gaining increasing attention in the field of brain-computer interfaces. However, due to the complexity of ERP signal components, their low signal-to-noise ratio, and significant inter-subject variability, cross-subject ERP signal detection has been challenging. The continuous advancement in deep learning has greatly contributed to addressing this issue. This brief proposes a contrastive learning training framework and an Inception module to extract multi-scale temporal and spatial features, representing the subject-invariant components of ERP signals. Specifically, a base encoder integrated with a linear Inception module and a nonlinear projector is used to project the raw data into latent space. By maximizing signal similarity under different targets, the inter-subject EEG signal differences in latent space are minimized. The extracted spatiotemporal features are then used for ERP target detection. The proposed algorithm achieved the best AUC performance in single-trial binary classification tasks on the P300 dataset and showed significant optimization in speller decoding tasks compared to existing algorithms.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge