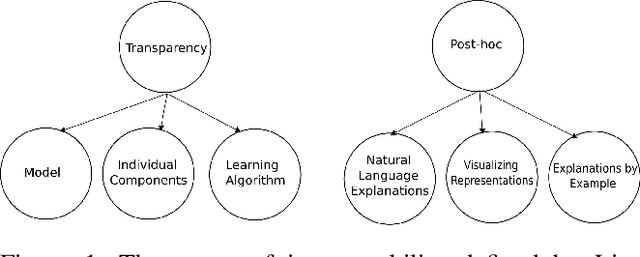

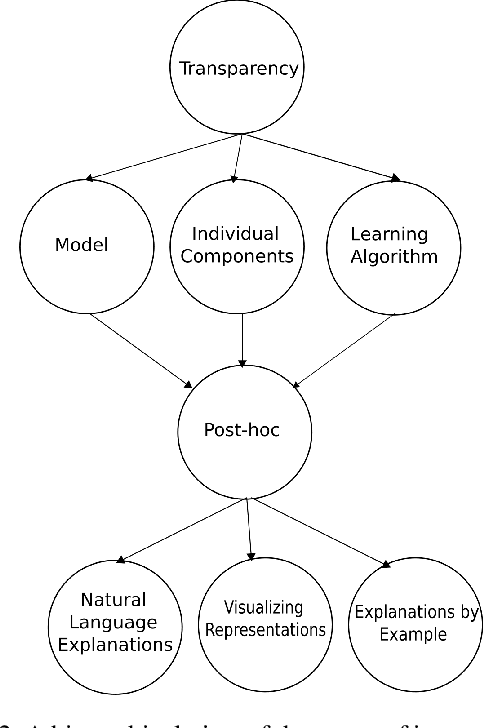

A Categorisation of Post-hoc Explanations for Predictive Models

Apr 04, 2019

The ubiquity of machine-learning-based predictive models in modern society naturally leads people to ask how trustworthy those models are. In predictive modeling, it is quite common to induce a trade-off between accuracy and interpretability. For instance, doctors would like to know how effective a treatment will be for a patient, or why the model suggested a particular medication for a patient exhibiting given symptoms. We acknowledge that the need for interpretability is a consequence of an incomplete formalisation of the problem or, more precisely, of multiple meanings attached to a particular concept. For certain problems, it is not enough to get the answer (what); the model also has to explain how it came to that conclusion (why), because a correct prediction only partially solves the original problem. In this article we extend an existing categorisation of techniques that aid model interpretability and test this categorisation.
