Zekun Long

WS-Net: Weak-Signal Representation Learning and Gated Abundance Reconstruction for Hyperspectral Unmixing via State-Space and Weak Signal Attention Fusion

Mar 10, 2026

Abstract:Weak spectral responses in hyperspectral images are often obscured by dominant endmembers and sensor noise, resulting in inaccurate abundance estimation. This paper introduces WS-Net, a deep unmixing framework specifically designed to address weak-signal collapse through state-space modelling and Weak Signal Attention fusion. The network features a multi-resolution wavelet-fused encoder that captures both high-frequency discontinuities and smooth spectral variations with a hybrid backbone that integrates a Mamba state-space branch for efficient long-range dependency modelling. It also incorporates a Weak Signal Attention branch that selectively enhances low-similarity spectral cues. A learnable gating mechanism adaptively fuses both representations, while the decoder leverages KL-divergence-based regularisation to enforce separability between dominant and weak endmembers. Experiments on one simulated and two real datasets (synthetic dataset, Samson, and Apex) demonstrate consistent improvements over six state-of-the-art baselines, achieving up to 55% and 63% reductions in RMSE and SAD, respectively. The framework maintains stable accuracy under low-SNR conditions, particularly for weak endmembers, establishing WS-Net as a robust and computationally efficient benchmark for weak-signal hyperspectral unmixing.

HSLiNets: Hyperspectral Image and LiDAR Data Fusion Using Efficient Dual Non-Linear Feature Learning Networks

Dec 03, 2024

Abstract:The integration of hyperspectral imaging (HSI) and LiDAR data within new linear feature spaces offers a promising solution to the challenges posed by the high dimensionality and redundancy inherent in HSIs. This study introduces a dual linear fused space framework that capitalizes on bidirectional reversed convolutional neural network (CNN) pathways, coupled with a specialized spatial analysis block. This approach combines the computational efficiency of CNNs with the adaptability of attention mechanisms, facilitating the effective fusion of spectral and spatial information. The proposed method not only enhances data processing and classification accuracy, but also mitigates the computational burden typically associated with advanced models such as Transformers. Evaluations on the Houston 2013 dataset demonstrate that our approach surpasses existing state-of-the-art models. This advancement underscores the potential of the framework in resource-constrained environments and its significant contributions to the field of remote sensing.

Hyperspectral Images Efficient Spatial and Spectral non-Linear Model with Bidirectional Feature Learning

Dec 03, 2024

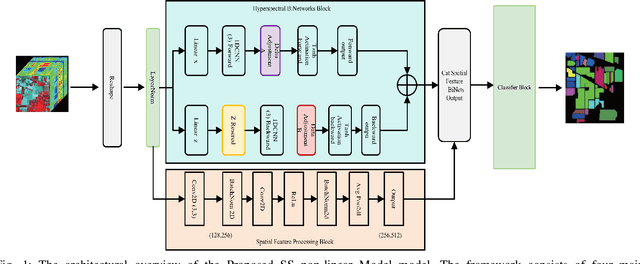

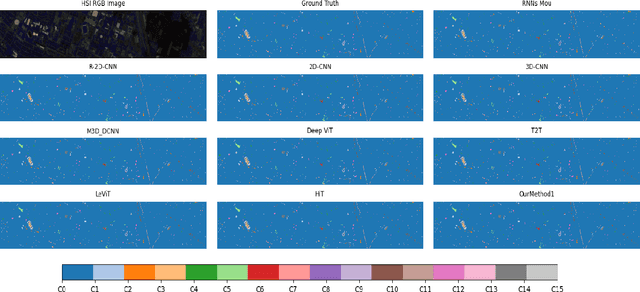

Abstract:Classifying hyperspectral images (HSIs) is a complex task in remote sensing due to the high-dimensional nature and volume of data involved. To address these challenges, we propose the Spectral-Spatial non-Linear Model, a novel framework that significantly reduces data volume while enhancing classification accuracy. Our model employs a bidirectional reversed convolutional neural network (CNN) to efficiently extract spectral features, complemented by a specialized block for spatial feature analysis. This hybrid approach leverages the operational efficiency of CNNs and incorporates dynamic feature extraction inspired by attention mechanisms, optimizing performance without the high computational demands typically associated with transformer-based models. The SS non-Linear Model is designed to process hyperspectral data bidirectionally, achieving notable classification and efficiency improvements by fusing spectral and spatial features effectively. This approach yields superior classification accuracy compared to existing benchmarks while maintaining computational efficiency, making it suitable for resource-constrained environments. We validate the SS non-Linear Model on three widely recognized datasets, Houston 2013, Indian Pines, and Pavia University, demonstrating its ability to outperform current state-of-the-art models in HSI classification and efficiency. This work highlights the innovative methodology of the SS non-Linear Model and its practical benefits for remote sensing applications, where both data efficiency and classification accuracy are critical. For further details, please refer to our code repository on GitHub: HSILinearModel.
