Yuxin Jing

Selective Variable Convolution Meets Dynamic Content Guided Attention for Infrared Small Target Detection

Apr 30, 2025

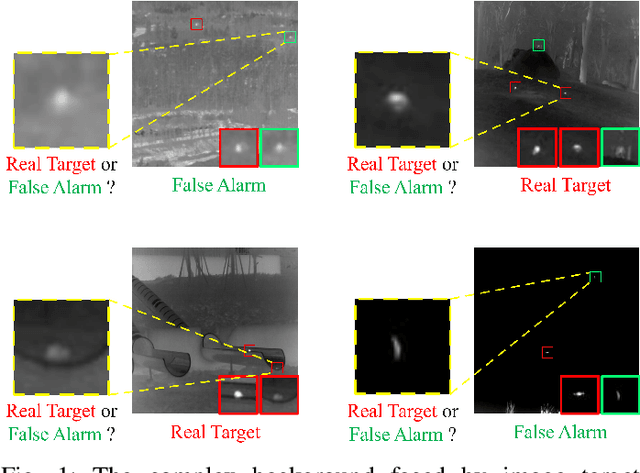

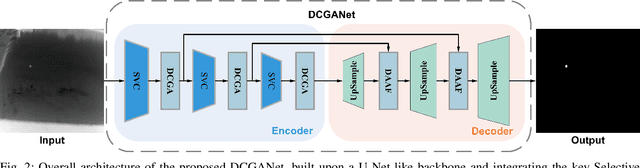

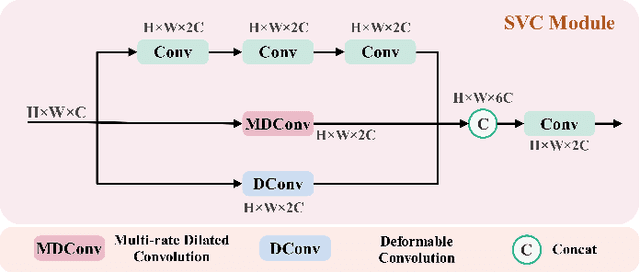

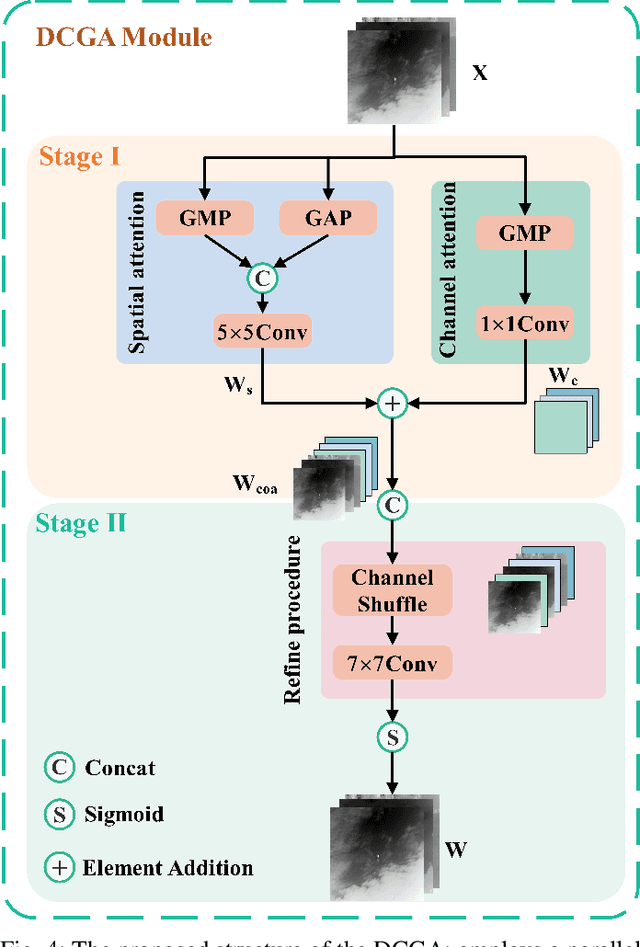

Abstract: Infrared small target detection (IRSTD) aims to identify small targets in complex backgrounds. Applying traditional Convolutional Neural Networks (CNNs) to IRSTD is challenging because their convolution operations often extract insufficient features from small targets, resulting in the loss of critical features. To address these issues, we propose a dynamic content guided attention multiscale feature aggregation network (DCGANet), which adheres to the 'coarse-to-fine' attention principle and achieves high detection accuracy. First, we propose a selective variable convolution (SVC) module that integrates the benefits of standard convolution, irregular deformable convolution, and multi-rate dilated convolution. This module is designed to expand the receptive field and enhance non-local features, thereby improving the discrimination of targets from backgrounds. Second, the core component of DCGANet is a two-stage content guided attention module. This module employs a two-stage attention mechanism that first directs the network's focus to salient regions within the feature maps and then determines whether these regions correspond to targets or background interference. By retaining the most significant responses, this mechanism effectively suppresses false alarms. Additionally, we propose an adaptive dynamic feature fusion (ADFF) module to replace static feature cascading. This dynamic feature fusion strategy enables DCGANet to adaptively integrate contextual features, thereby enhancing its ability to discriminate true targets from false alarms. DCGANet achieves new benchmark results across multiple datasets.
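To illustrate why multi-rate dilated convolution expands the receptive field, here is a minimal one-dimensional sketch in plain Python. It is not the SVC module from the paper; the function names `effective_kernel_size` and `dilated_conv1d` are hypothetical and chosen only for this example. It relies on the standard relation that a kernel of size k with dilation d spans k + (k - 1)(d - 1) samples.

```python
def effective_kernel_size(kernel_size: int, dilation: int) -> int:
    """Number of input samples spanned by a dilated kernel: k + (k - 1) * (d - 1)."""
    return kernel_size + (kernel_size - 1) * (dilation - 1)


def dilated_conv1d(signal, kernel, dilation=1):
    """'Valid' 1D cross-correlation whose taps are spaced `dilation` samples apart."""
    span = effective_kernel_size(len(kernel), dilation)
    out = []
    for start in range(len(signal) - span + 1):
        # Each tap i reads the sample at offset i * dilation from the window start.
        out.append(sum(w * signal[start + i * dilation] for i, w in enumerate(kernel)))
    return out


if __name__ == "__main__":
    signal = [0, 0, 0, 0, 9, 0, 0, 0, 0]  # a single bright sample standing in for a small target
    kernel = [1, 1, 1]
    for d in (1, 2, 3):
        print(d, effective_kernel_size(len(kernel), d), dilated_conv1d(signal, kernel, d))
```

With the same three weights, dilation rates 1, 2, and 3 cover 3, 5, and 7 input samples respectively, which is the sense in which mixing several rates widens the context seen around a small target without adding parameters.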

Make Both Ends Meet: A Synergistic Optimization Infrared Small Target Detection with Streamlined Computational Overhead

Apr 30, 2025

Abstract: Infrared small target detection (IRSTD) is widely recognized as a challenging task due to the inherent limitations of infrared imaging, including low signal-to-noise ratios, lack of texture details, and complex background interference. Although most existing methods model IRSTD as a semantic segmentation task, they suffer from two critical drawbacks: (1) blurred target boundaries caused by long-distance imaging dispersion; and (2) excessive computational overhead due to indiscriminate feature stacking. To address these issues, we propose the Lightweight Efficiency Infrared Small Target Detection (LE-IRSTD), a lightweight and efficient framework based on YOLOv8n, with the following key innovations. First, we identify that the multiple bottleneck structures within the C2f component of the YOLOv8n backbone increase the computational burden. Therefore, we implement the Mobile Inverted Bottleneck Convolution block (MBConvblock) and Bottleneck Structure block (BSblock) in the backbone, balancing computational efficiency against the extraction of deep semantic information. Second, we introduce the Attention-based Variable Convolution Stem (AVCStem) structure, substituting the final convolution with Variable Kernel Convolution (VKConv), whose adaptive convolutional kernels can transform into various shapes, thereby adapting the receptive field for target extraction. Finally, we employ Global Shuffle Convolution (GSConv) to shuffle the channel-dimension features obtained from different convolutional approaches, thereby enhancing the robustness and generalization capabilities of our method. Experimental results demonstrate that LE-IRSTD achieves compelling results in both accuracy and lightweight performance, outperforming several state-of-the-art deep learning methods.
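The abstract does not spell out how GSConv shuffles channels. As a rough illustration only, the sketch below shows a generic channel shuffle (the interleaving used in ShuffleNet-style designs), which is assumed here to be comparable in spirit. The function name `channel_shuffle` and the channel labels are hypothetical.

```python
def channel_shuffle(channels, groups):
    """Interleave channels drawn from `groups` equally sized, contiguous groups.

    Equivalent to viewing the list as (groups, n), transposing to (n, groups),
    and flattening again.
    """
    if len(channels) % groups != 0:
        raise ValueError("channel count must be divisible by groups")
    per_group = len(channels) // groups
    return [channels[g * per_group + i] for i in range(per_group) for g in range(groups)]


if __name__ == "__main__":
    # Assumed setup: three channels from a standard convolution followed by
    # three channels from a second, cheaper convolution branch.
    stacked = ["std0", "std1", "std2", "dw0", "dw1", "dw2"]
    print(channel_shuffle(stacked, groups=2))
```

After shuffling, every neighbouring pair of channels contains one feature from each branch, so later layers that operate on local channel groups see information from both convolution types.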

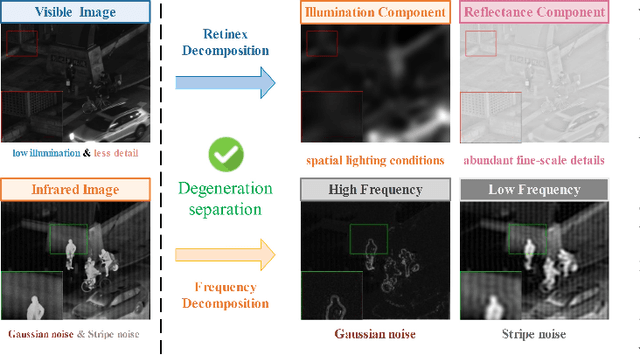

DAAF: Degradation-Aware Adaptive Fusion Framework for Robust Infrared and Visible Images Fusion

Apr 15, 2025

Abstract: Existing infrared and visible image fusion (IVIF) algorithms often prioritize high-quality images, neglecting image degradation such as low light and noise, which limits their practical potential. This paper proposes Degradation-Aware Adaptive image Fusion (DAAF), which achieves unified modeling of adaptive degradation optimization and image fusion. Specifically, DAAF comprises an auxiliary Adaptive Degradation Optimization Network (ADON) and a Feature Interactive Local-Global Fusion (FILGF) Network. First, ADON includes infrared and visible-light branches. Within the infrared branch, frequency-domain feature decomposition and extraction are employed to isolate Gaussian and stripe noise. In the visible-light branch, Retinex decomposition is applied to extract illumination and reflectance components, enabling complementary enhancement of detail and illumination distribution. Subsequently, FILGF performs interactive multi-scale local-global feature fusion. Local feature fusion consists of intra-inter model feature complement, while global feature fusion is achieved through an interactive cross-model attention. Extensive experiments show that DAAF outperforms current IVIF algorithms in normal and complex degradation scenarios.
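Retinex theory models an image as the product of reflectance and illumination. The sketch below shows that idea on a one-dimensional signal, using a moving average as a crude illumination estimate. This is a classical hand-crafted approximation for illustration, not the learned decomposition inside ADON; the function names, the `radius` parameter, and the `eps` constant are assumptions made for this example.

```python
def estimate_illumination(signal, radius=1):
    """Moving-average smoothing used as a crude estimate of slowly varying illumination."""
    n = len(signal)
    out = []
    for i in range(n):
        lo, hi = max(0, i - radius), min(n, i + radius + 1)
        window = signal[lo:hi]
        out.append(sum(window) / len(window))
    return out


def retinex_decompose(signal, radius=1, eps=1e-6):
    """Split a signal into (reflectance, illumination) with signal ~= reflectance * illumination."""
    illumination = estimate_illumination(signal, radius)
    # eps guards against division by zero in completely dark regions.
    reflectance = [s / (l + eps) for s, l in zip(signal, illumination)]
    return reflectance, illumination


if __name__ == "__main__":
    dim_scene = [0.10, 0.12, 0.30, 0.12, 0.10]  # low-light intensities with one brighter detail
    reflectance, illumination = retinex_decompose(dim_scene)
    print(reflectance)
    print(illumination)
```

Once the two components are separated, illumination can be brightened and reflectance sharpened independently, which is the kind of complementary enhancement the abstract refers to.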

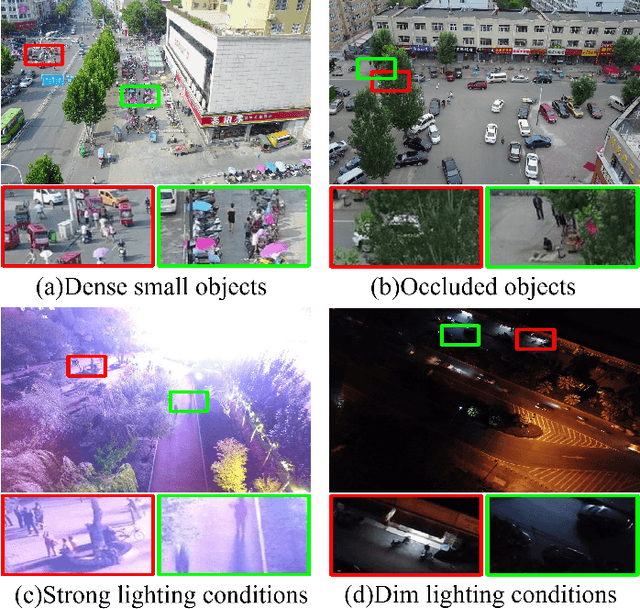

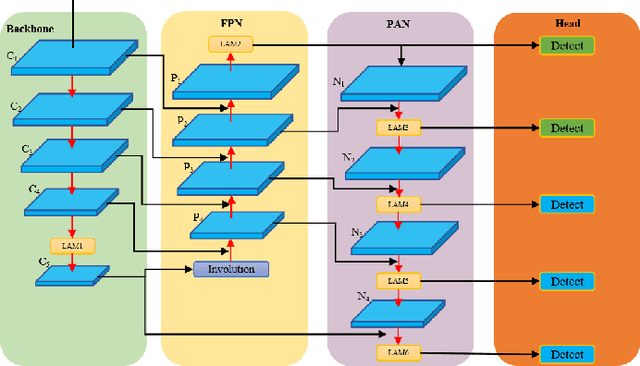

LAM-YOLO: Drones-based Small Object Detection on Lighting-Occlusion Attention Mechanism YOLO

Nov 01, 2024

Abstract: Drone-based target detection presents inherent challenges, such as the high density and overlap of targets in drone-based images, as well as the blurriness of targets under varying lighting conditions, which complicates identification. Traditional methods often struggle to recognize numerous densely packed small targets against complex backgrounds. To address these challenges, we propose LAM-YOLO, an object detection model specifically designed for drone-based imagery. First, we introduce a light-occlusion attention mechanism to enhance the visibility of small targets under different lighting conditions. Meanwhile, we incorporate Involution modules to improve interaction among feature layers. Second, we utilize an improved SIB-IoU as the regression loss function to accelerate model convergence and enhance localization accuracy. Finally, we implement a novel detection strategy that introduces two auxiliary detection heads for identifying smaller-scale targets. Our quantitative results demonstrate that LAM-YOLO outperforms methods such as Faster R-CNN, YOLOv9, and YOLOv10 in terms of mAP@0.5 and mAP@0.5:0.95 on the VisDrone2019 public dataset. Compared to the original YOLOv8, the average precision increases by 7.1%. Additionally, the proposed SIB-IoU loss function converges faster during training and yields higher average precision than the traditional loss function.
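The metrics mAP@0.5 and mAP@0.5:0.95 both build on intersection over union (IoU): a prediction counts as correct when its IoU with a ground-truth box meets the threshold, and the second metric averages over thresholds from 0.5 to 0.95 in steps of 0.05. The sketch below computes plain IoU only. The SIB-IoU variant is not defined in the abstract and is not reproduced here; the function name `iou` and the `(x1, y1, x2, y2)` box layout are assumptions made for this example.

```python
def iou(box_a, box_b):
    """Intersection over union of two axis-aligned boxes given as (x1, y1, x2, y2)."""
    ix1, iy1 = max(box_a[0], box_b[0]), max(box_a[1], box_b[1])
    ix2, iy2 = min(box_a[2], box_b[2]), min(box_a[3], box_b[3])
    # Clamp at zero so disjoint boxes contribute no intersection area.
    inter = max(0.0, ix2 - ix1) * max(0.0, iy2 - iy1)
    area_a = (box_a[2] - box_a[0]) * (box_a[3] - box_a[1])
    area_b = (box_b[2] - box_b[0]) * (box_b[3] - box_b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


if __name__ == "__main__":
    truth = (0, 0, 4, 4)  # a 4x4-pixel target
    for shift in (1, 2):
        predicted = (shift, 0, 4 + shift, 4)
        score = iou(truth, predicted)
        print(shift, round(score, 3), score >= 0.5)
```

For a 4x4-pixel target, a one-pixel horizontal shift still passes the 0.5 threshold while a two-pixel shift does not, which illustrates why localization accuracy matters so much for small objects.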
