Yuki Endo

TerraFusion: Joint Generation of Terrain Geometry and Texture Using Latent Diffusion Models

May 07, 2025

Abstract: 3D terrain models are essential in fields such as video game development and film production. Since surface color often correlates with terrain geometry, capturing this relationship is crucial to achieving realism. However, most existing methods generate either a heightmap or a texture, without sufficiently accounting for the inherent correlation. In this paper, we propose a method that jointly generates terrain heightmaps and textures using a latent diffusion model. First, we train the model in an unsupervised manner to randomly generate paired heightmaps and textures. Then, we perform supervised learning of an external adapter to enable user control via hand-drawn sketches. Experiments show that our approach allows intuitive terrain generation while preserving the correlation between heightmaps and textures.

Person-In-Situ: Scene-Consistent Human Image Insertion with Occlusion-Aware Pose Control

May 07, 2025

Abstract: Compositing human figures into scene images has broad applications in areas such as entertainment and advertising. However, existing methods often cannot handle occlusion of the inserted person by foreground objects and unnaturally place the person in the frontmost layer. Moreover, they offer limited control over the inserted person's pose. To address these challenges, we propose two methods. Both allow explicit pose control via a 3D body model and leverage latent diffusion models to synthesize the person at a contextually appropriate depth, naturally handling occlusions without requiring occlusion masks. The first is a two-stage approach: the model first learns a depth map of the scene with the person through supervised learning, and then synthesizes the person accordingly. The second method learns occlusion implicitly and synthesizes the person directly from input data without explicit depth supervision. Quantitative and qualitative evaluations show that both methods outperform existing approaches by better preserving scene consistency while accurately reflecting occlusions and user-specified poses.

FreeUV: Ground-Truth-Free Realistic Facial UV Texture Recovery via Cross-Assembly Inference Strategy

Mar 21, 2025

Abstract: Recovering high-quality 3D facial textures from single-view 2D images is a challenging task, especially under constraints of limited data and complex facial details such as makeup, wrinkles, and occlusions. In this paper, we introduce FreeUV, a novel ground-truth-free UV texture recovery framework that eliminates the need for annotated or synthetic UV data. FreeUV leverages a pre-trained Stable Diffusion model alongside a Cross-Assembly inference strategy to fulfill this objective. In FreeUV, separate networks are trained independently to focus on realistic appearance and structural consistency, and these networks are combined during inference to generate coherent textures. Our approach accurately captures intricate facial features and demonstrates robust performance across diverse poses and occlusions. Extensive experiments validate FreeUV's effectiveness, with results surpassing state-of-the-art methods in both quantitative and qualitative metrics. Additionally, FreeUV enables new applications, including local editing, facial feature interpolation, and multi-view texture recovery. By reducing data requirements, FreeUV offers a scalable solution for generating high-fidelity 3D facial textures suitable for real-world scenarios.

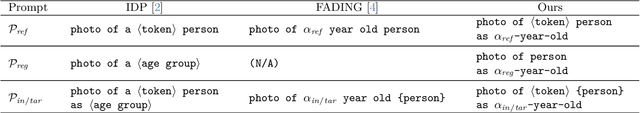

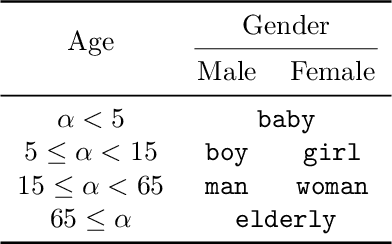

SelfAge: Personalized Facial Age Transformation Using Self-reference Images

Feb 19, 2025

Abstract: Age transformation of facial images is a technique that edits a person's age-related appearance while preserving identity. Existing deep learning-based methods can reproduce natural age transformations; however, they only reproduce averaged transitions and fail to account for individual-specific appearances shaped by each person's life history. In this paper, we propose the first diffusion model-based method for personalized age transformation. Our diffusion model takes a facial image and a target age as input and generates an age-edited face image as output. To reflect individual-specific features, we incorporate additional supervision using self-reference images, which are facial images of the same person at different ages. Specifically, we fine-tune a pretrained diffusion model for personalized adaptation using approximately 3 to 5 self-reference images. Additionally, we design an effective prompt to enhance the performance of age editing and identity preservation. Experiments demonstrate that our method achieves superior performance both quantitatively and qualitatively compared to existing methods. The code and the pretrained model are available at https://github.com/shiiiijp/SelfAge.

All-frequency Full-body Human Image Relighting

Nov 01, 2024

Abstract: Relighting of human images enables post-photography editing of lighting effects in portraits. The current mainstream approach uses neural networks to approximate lighting effects without explicitly accounting for the principles of physical shading. As a result, it often has difficulty representing high-frequency shadows and shading. In this paper, we propose a two-stage relighting method that can reproduce physically-based shadows and shading from low to high frequencies. The key idea is to approximate an environment light source with a fixed number of area light sources. The first stage employs supervised inverse rendering from a single image using neural networks and calculates physically-based shading. The second stage then calculates the shadow for each area light and sums the per-light contributions to render the final image. We propose to make soft shadow mapping differentiable for the area-light approximation of environment lighting. We demonstrate that our method can plausibly reproduce all-frequency shadows and shading caused by environment illumination, which have been difficult to reproduce using existing methods.
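
The per-light summation described in this abstract can be illustrated with a minimal sketch. It is not the authors' renderer: it assumes Lambertian shading, treats each area light as a single direction with an RGB intensity, and takes the soft-shadow visibility as a precomputed input. The function name `shade_with_area_lights` and all argument names are hypothetical.

```python
import numpy as np

def shade_with_area_lights(albedo, normals, light_dirs, light_rgb, visibility):
    """Sum shadowed Lambertian shading over K area lights (illustrative only).

    albedo:     (P, 3) per-pixel diffuse color
    normals:    (P, 3) unit surface normals
    light_dirs: (K, 3) unit directions toward each light (assumed representation)
    light_rgb:  (K, 3) RGB intensity of each light
    visibility: (P, K) soft-shadow term in [0, 1], 1 means fully lit
    """
    # Clamped cosine between each normal and each light direction: (P, K)
    cos = np.clip(normals @ light_dirs.T, 0.0, None)
    # Attenuate each light by its shadow term, then accumulate over lights: (P, 3)
    irradiance = (cos * visibility) @ light_rgb
    return albedo * irradiance
```

Because every step is a differentiable array operation, the same structure carries over to an autodiff framework, which is the property the abstract relies on when it makes soft shadow mapping differentiable.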

EG-HumanNeRF: Efficient Generalizable Human NeRF Utilizing Human Prior for Sparse View

Oct 16, 2024

Abstract: Generalizable neural radiance field (NeRF) enables neural-based digital human rendering without per-scene retraining. When combined with human prior knowledge, high-quality human rendering can be achieved even with sparse input views. However, the inference of these methods is still slow, as a large number of neural network queries on each ray are required to ensure the rendering quality. Moreover, occluded regions often suffer from artifacts, especially when the input views are sparse. To address these issues, we propose a generalizable human NeRF framework that achieves high-quality and real-time rendering with sparse input views by extensively leveraging human prior knowledge. We accelerate the rendering with a two-stage sampling reduction strategy: first constructing boundary meshes around the human geometry to reduce the number of ray samples for sampling guidance regression, and then volume rendering using fewer guided samples. To improve rendering quality, especially in occluded regions, we propose an occlusion-aware attention mechanism to extract occlusion information from the human priors, followed by an image space refinement network to improve rendering quality. Furthermore, for volume rendering, we adopt a signed ray distance function (SRDF) formulation, which allows us to propose an SRDF loss at every sample position to improve the rendering quality further. Our experiments demonstrate that our method outperforms the state-of-the-art methods in rendering quality and has a competitive rendering speed compared with speed-prioritized novel view synthesis methods.

3D View Optimization for Improving Image Aesthetics

May 26, 2024

Abstract: Achieving aesthetically pleasing photography necessitates attention to multiple factors, including composition and capture conditions, which pose challenges to novices. Prior research has explored the enhancement of photo aesthetics post-capture through 2D manipulation techniques; however, these approaches offer limited search space for aesthetics. We introduce a pioneering method that employs 3D operations to simulate the conditions at the moment of capture retrospectively. Our approach extrapolates the input image and then reconstructs the 3D scene from the extrapolated image, followed by an optimization to identify camera parameters and image aspect ratios that yield the best 3D view with enhanced aesthetics. Comparative qualitative and quantitative assessments reveal that our method surpasses traditional 2D editing techniques with superior aesthetics.

Makeup Prior Models for 3D Facial Makeup Estimation and Applications

Mar 26, 2024

Abstract: In this work, we introduce two types of makeup prior models to extend existing 3D face prior models: PCA-based and StyleGAN2-based priors. The PCA-based prior model is a linear model that is easy to construct and is computationally efficient. However, it retains only low-frequency information. Conversely, the StyleGAN2-based model can represent high-frequency information with relatively higher computational cost than the PCA-based model. Although there is a trade-off between the two models, both are applicable to 3D facial makeup estimation and related applications. By leveraging makeup prior models and designing a makeup consistency module, we effectively address the challenges that previous methods faced in robustly estimating makeup, particularly in the context of handling self-occluded faces. In experiments, we demonstrate that our approach reduces computational costs by several orders of magnitude, achieving speeds up to 180 times faster. In addition, by improving the accuracy of the estimated makeup, we confirm that our methods are highly advantageous for various 3D facial makeup applications such as 3D makeup face reconstruction, user-friendly makeup editing, makeup transfer, and interpolation.
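
To make the "linear model" remark concrete, the following is a generic PCA prior sketch, not the authors' implementation. It assumes each training sample is a flattened texture vector; the function names `fit_pca_prior`, `encode`, and `decode` are hypothetical.

```python
import numpy as np

def fit_pca_prior(textures, num_components):
    """Fit a linear PCA prior to flattened textures of shape (N, D)."""
    mean = textures.mean(axis=0)
    centered = textures - mean
    # Rows of vt are orthonormal principal directions, ordered by variance.
    _, _, vt = np.linalg.svd(centered, full_matrices=False)
    return mean, vt[:num_components]

def encode(texture, mean, basis):
    """Project a (D,) texture onto the basis to get (num_components,) coefficients."""
    return basis @ (texture - mean)

def decode(coeffs, mean, basis):
    """Reconstruct a (D,) texture from coefficients; linear in coeffs."""
    return mean + basis.T @ coeffs
```

Keeping only the leading components is what makes such a model cheap to evaluate, and it is also why detail outside the retained subspace is lost, which matches the low-frequency limitation noted in the abstract.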

DiffBody: Diffusion-based Pose and Shape Editing of Human Images

Jan 08, 2024

Abstract: Pose and body shape editing in a human image has received increasing attention. However, current methods often struggle with dataset biases and deteriorate realism and the person's identity when users make large edits. We propose a one-shot approach that enables large edits with identity preservation. To enable large edits, we fit a 3D body model, project the input image onto the 3D model, and change the body's pose and shape. Because this initial textured body model has artifacts due to occlusion and the inaccurate body shape, the rendered image undergoes a diffusion-based refinement, in which strong noise destroys body structure and identity whereas insufficient noise does not help. We thus propose an iterative refinement with weak noise, applied first for the whole body and then for the face. We further enhance the realism by fine-tuning text embeddings via self-supervised learning. Our quantitative and qualitative evaluations demonstrate that our method outperforms other existing methods across various datasets.
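
The idea of repeating a weak noise-then-denoise step can be sketched as below. This is only an illustration under stated assumptions: `denoise_fn` is a hypothetical stand-in for a diffusion model's denoiser, `alpha_bar` close to 1 stands for "weak" noise in the standard forward-diffusion formula, and the body-then-face ordering from the abstract is not modeled.

```python
import numpy as np

def add_noise(x0, alpha_bar, rng):
    """Standard forward diffusion: sqrt(alpha_bar) * x0 + sqrt(1 - alpha_bar) * eps."""
    eps = rng.standard_normal(x0.shape)
    return np.sqrt(alpha_bar) * x0 + np.sqrt(1.0 - alpha_bar) * eps

def iterative_weak_refine(image, denoise_fn, alpha_bar=0.9, num_iters=3, seed=0):
    """Repeatedly perturb with weak noise and denoise (illustrative only).

    denoise_fn(noisy, alpha_bar) -> cleaned image; a hypothetical callable.
    """
    rng = np.random.default_rng(seed)
    x = image
    for _ in range(num_iters):
        x = denoise_fn(add_noise(x, alpha_bar, rng), alpha_bar)
    return x
```

Each pass keeps most of the signal (the `sqrt(alpha_bar)` term dominates), so structure and identity are largely retained while the denoiser gets several chances to clean up artifacts.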

Masked-Attention Diffusion Guidance for Spatially Controlling Text-to-Image Generation

Aug 11, 2023

Abstract: Text-to-image synthesis has achieved high-quality results with recent advances in diffusion models. However, text input alone has high spatial ambiguity and limited user controllability. Most existing methods allow spatial control through additional visual guidance (e.g., sketches and semantic masks) but require additional training with annotated images. In this paper, we propose a method for spatially controlling text-to-image generation without further training of diffusion models. Our method is based on the insight that the cross-attention maps reflect the positional relationship between words and pixels. Our aim is to control the attention maps according to given semantic masks and text prompts. To this end, we first explore a simple approach of directly swapping the cross-attention maps with constant maps computed from the semantic regions. Moreover, we propose masked-attention guidance, which can generate images more faithful to semantic masks than the first approach. Masked-attention guidance indirectly controls attention to each word and pixel according to the semantic regions by manipulating noise images fed to diffusion models. Experiments show that our method enables more accurate spatial control than baselines qualitatively and quantitatively.
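
The simpler of the two approaches, swapping cross-attention maps for constant maps derived from semantic regions, might look roughly like the sketch below. The abstract does not specify how the constant maps are computed, so the choices here are assumptions: each controlled token's attention column is replaced by its binary region mask times a constant, and rows are renormalized. The name `swap_attention` and its parameters are hypothetical.

```python
import numpy as np

def swap_attention(attn, token_masks, strength=1.0):
    """Replace selected tokens' cross-attention with constant region maps.

    attn:        (P, T) attention of P pixels over T text tokens, rows sum to 1
    token_masks: dict mapping token index -> (P,) binary mask of its region
    strength:    constant value used inside the region (an assumed parameter)
    """
    out = attn.copy()
    for tok, mask in token_masks.items():
        # Constant inside the token's region, zero outside it.
        out[:, tok] = strength * mask.astype(attn.dtype)
    # Renormalize so each pixel's attention still sums to 1 (guarding empty rows).
    row_sums = out.sum(axis=1, keepdims=True)
    return out / np.clip(row_sums, 1e-8, None)
```

With a uniform 4-pixel, 3-token map and a mask covering the first two pixels for token 1, those pixels end up attending mostly to token 1 while the other pixels ignore it entirely.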
