Yonatan Nakar

Emergent Dominance Hierarchies in Reinforcement Learning Agents

Feb 01, 2024

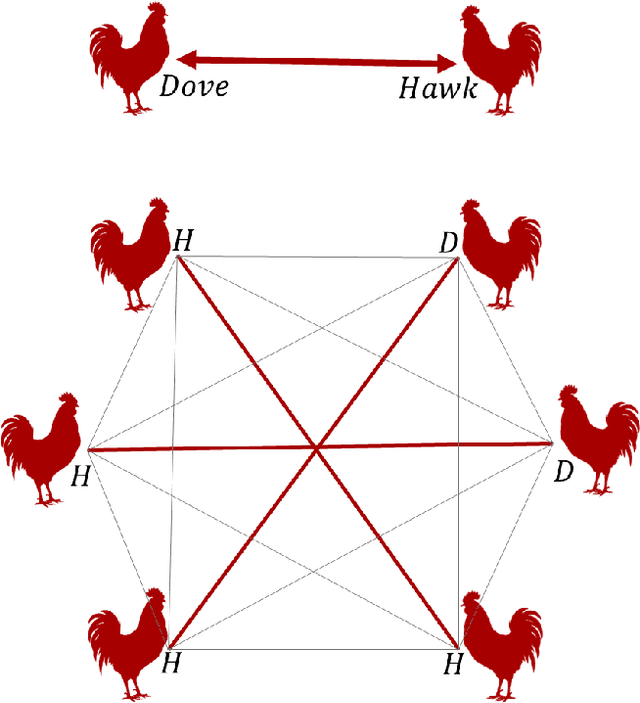

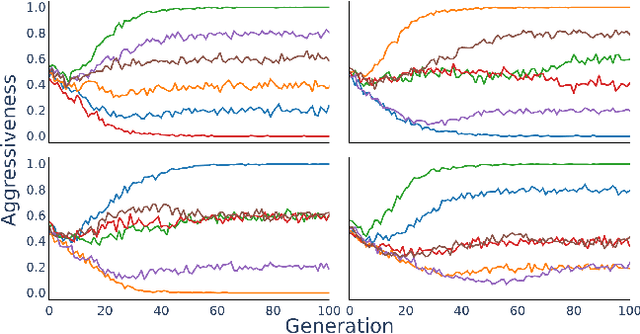

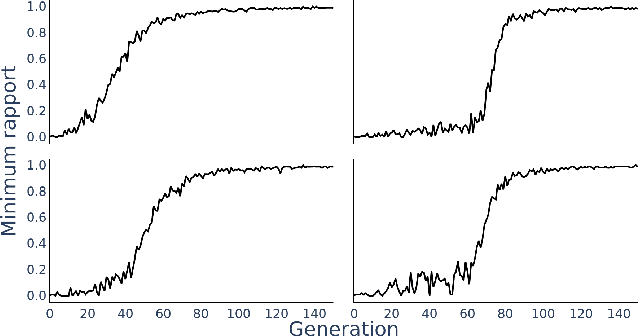

Abstract: Modern Reinforcement Learning (RL) algorithms are able to outperform humans in a wide variety of tasks. Multi-agent reinforcement learning (MARL) settings present additional challenges, and successful cooperation in mixed-motive groups of agents depends on a delicate balancing act between individual and group objectives. Social conventions and norms, often inspired by human institutions, are used as tools for striking this balance. In this paper, we examine a fundamental, well-studied social convention that underlies cooperation in both animal and human societies: dominance hierarchies. We adapt the ethological theory of dominance hierarchies to artificial agents, borrowing the established terminology and definitions with as few amendments as possible. We demonstrate that populations of RL agents, operating without explicit programming or intrinsic rewards, can invent, learn, enforce, and transmit a dominance hierarchy to new populations. The dominance hierarchies that emerge have a similar structure to those studied in chickens, mice, fish, and other species.

Stubborn: An Environment for Evaluating Stubbornness between Agents with Aligned Incentives

Apr 28, 2023

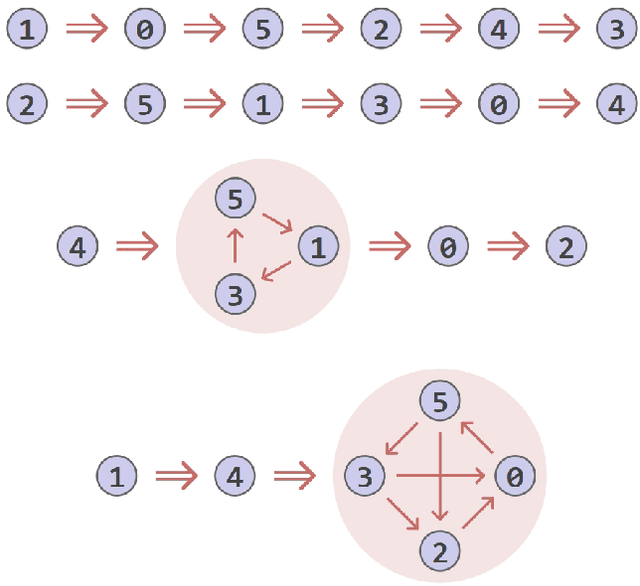

Abstract: Recent research in multi-agent reinforcement learning (MARL) has shown success in learning social behavior and cooperation. Social dilemmas between agents in mixed-sum settings have been studied extensively, but there is little research into social dilemmas in fully cooperative settings, where agents have no prospect of gaining reward at another agent's expense. While fully-aligned interests are conducive to cooperation between agents, they do not guarantee it. We propose a measure of "stubbornness" between agents that aims to capture the human social behavior from which it takes its name: a disagreement that is gradually escalating and potentially disastrous. We would like to promote research into the tendency of agents to be stubborn, the reactions of counterpart agents, and the resulting social dynamics. In this paper we present Stubborn, an environment for evaluating stubbornness between agents with fully-aligned incentives. In our preliminary results, the agents learn to use their partner's stubbornness as a signal for improving the choices that they make in the environment.
