Ying Meng

Ensembles of Many Diverse Weak Defenses can be Strong: Defending Deep Neural Networks Against Adversarial Attacks

Jan 02, 2020

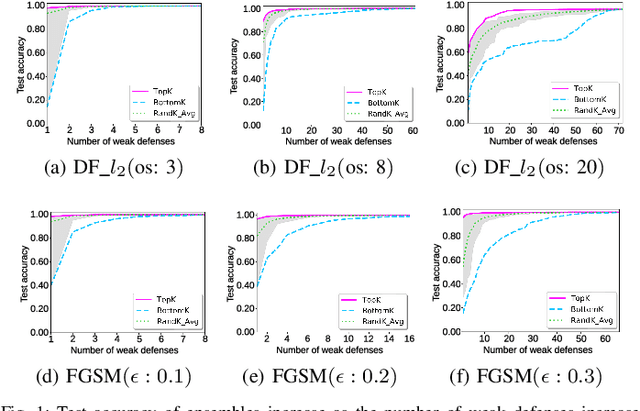

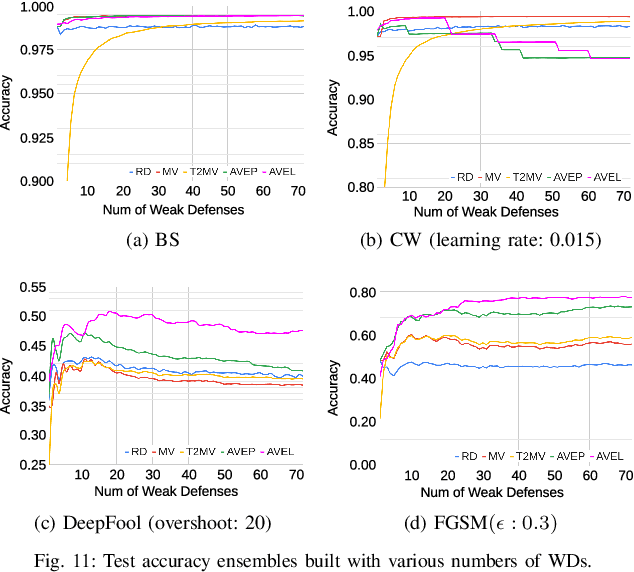

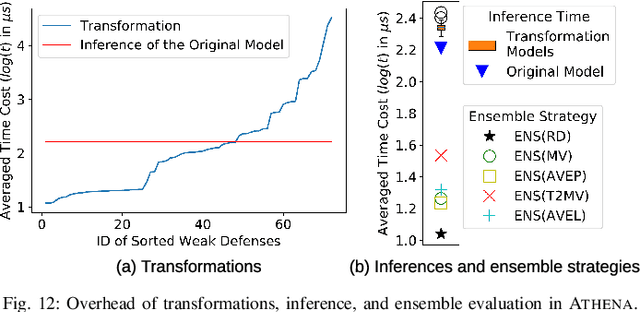

Abstract: Despite achieving state-of-the-art performance across many domains, machine learning systems are highly vulnerable to subtle adversarial perturbations. Although defense approaches have been proposed in recent years, many have been bypassed by even weak adversarial attacks. An early study (He et al., 2017) shows that ensembles created by combining multiple weak defenses (i.e., input data transformations) are still weak. We show that it is indeed possible to construct effective ensembles from weak defenses to block adversarial attacks. Doing so, however, requires a diverse set of such weak defenses. In this work, we propose Athena, an extensible framework for building effective defenses against adversarial attacks on machine learning systems. We conduct a comprehensive empirical study to evaluate several realizations of Athena. More specifically, we evaluate the effectiveness of 5 ensemble strategies built on a diverse set of many weak defenses, each of which transforms the inputs (e.g., rotation, shifting, noising, denoising, and many more) before feeding them to target deep neural network (DNN) classifiers. We evaluate the ensembles on adversarial examples generated by 9 different adversaries (e.g., FGSM, CW) under 4 threat models (i.e., zero-knowledge, black-box, gray-box, white-box) on MNIST. We also explain, based on this empirical study, why building defenses from many diverse weak defenses works, when it is most effective, and what its inherent limitations and overhead are.
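
The idea of combining many transformation-based weak defenses can be illustrated with a minimal sketch. Everything named here is an assumption made for illustration: the three transformations, the `toy_classifier` stand-in for a DNN, and the use of majority voting as the ensemble strategy (the abstract does not name the 5 strategies it evaluates). It is not Athena's actual implementation.

```python
import numpy as np

# Hypothetical input transformations, each acting on a 2-D image in [0, 1].
def shift_right(x):
    return np.roll(x, 1, axis=1)  # cyclic shift by one pixel

def flip_horizontal(x):
    return x[:, ::-1]

def add_noise(x, seed=0):
    rng = np.random.default_rng(seed)
    return np.clip(x + rng.normal(0.0, 0.05, x.shape), 0.0, 1.0)

def toy_classifier(x):
    """Hypothetical stand-in for a DNN: returns probabilities for two
    classes, 'dark' (0) and 'bright' (1), from the mean pixel intensity."""
    p_bright = float(np.clip(x.mean(), 0.0, 1.0))
    return np.array([1.0 - p_bright, p_bright])

# A weak defense pairs one transformation with one classifier. In a real
# setting each classifier would be a separate model; here all three pairs
# reuse the same toy function.
WEAK_DEFENSES = [
    (shift_right, toy_classifier),
    (flip_horizontal, toy_classifier),
    (add_noise, toy_classifier),
]

def majority_vote(x, weak_defenses, num_classes=2):
    """Assumed ensemble strategy: each weak defense votes for its top
    class, and the class with the most votes is returned."""
    votes = [int(np.argmax(clf(transform(x)))) for transform, clf in weak_defenses]
    return int(np.argmax(np.bincount(votes, minlength=num_classes)))

if __name__ == "__main__":
    bright_image = np.full((4, 4), 0.9)
    print(majority_vote(bright_image, WEAK_DEFENSES))
```

The intuition behind such a design is that a perturbation crafted against one input representation would have to survive several different transformations at once to sway the majority.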
