Yang Guang

Amazon ML Solutions Lab

Doppler-Resilient LEO Satellite OFDM Transmission with Affine Frequency Domain Pilot

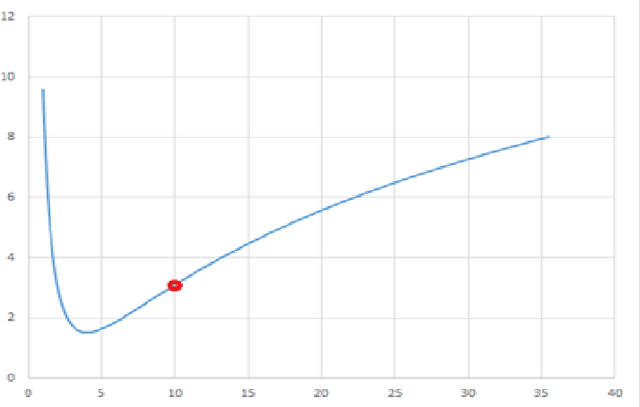

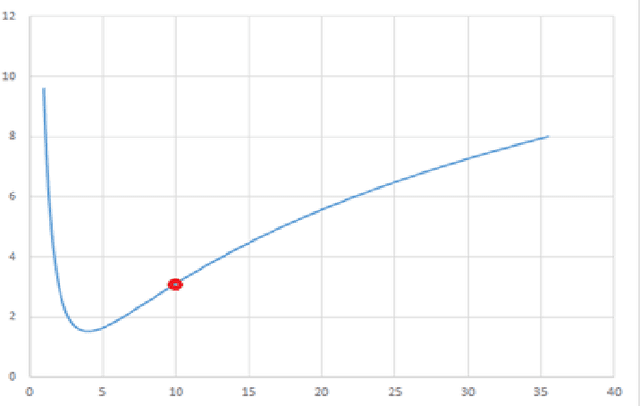

Jan 05, 2026Abstract:Orthogonal frequency division multiplexing (OFDM) based low Earth orbit (LEO) satellite communication system suffers from severe Doppler shifts, while {the Doppler-resilient affine frequency-division multiplexing (AFDM) transmission suffers from significantly high processing complexity in data detection}. In this paper, we explore the channel estimation gain of affine frequency (AF) domain pilot to enhance the OFDM transmission under high mobility. Specifically, we propose a novel AF domain pilot embedding scheme for satellite-ground downlink OFDM systems for capturing the channel characteristics. By exploiting the autoregressive (AR) property of adjacent channels, a long short-term memory (LSTM) based predictor is designed to replace conventional interpolation operation in OFDM channel estimation. Simulation results show that the proposed transmission scheme significantly outperforms conventional OFDM scheme in terms of bit error rate (BER) under high Doppler scenarios, thus paving a new way for the design of next generation non-terrestrial network (NTN) communication systems.

Explainability of Traditional and Deep Learning Models on Longitudinal Healthcare Records

Nov 22, 2022

Abstract:Recent advances in deep learning have led to interest in training deep learning models on longitudinal healthcare records to predict a range of medical events, with models demonstrating high predictive performance. Predictive performance is necessary but insufficient, however, with explanations and reasoning from models required to convince clinicians for sustained use. Rigorous evaluation of explainability is often missing, as comparisons between models (traditional versus deep) and various explainability methods have not been well-studied. Furthermore, ground truths needed to evaluate explainability can be highly subjective depending on the clinician's perspective. Our work is one of the first to evaluate explainability performance between and within traditional (XGBoost) and deep learning (LSTM with Attention) models on both a global and individual per-prediction level on longitudinal healthcare data. We compared explainability using three popular methods: 1) SHapley Additive exPlanations (SHAP), 2) Layer-Wise Relevance Propagation (LRP), and 3) Attention. These implementations were applied on synthetically generated datasets with designed ground-truths and a real-world medicare claims dataset. We showed that overall, LSTMs with SHAP or LRP provides superior explainability compared to XGBoost on both the global and local level, while LSTM with dot-product attention failed to produce reasonable ones. With the explosion of the volume of healthcare data and deep learning progress, the need to evaluate explainability will be pivotal towards successful adoption of deep learning models in healthcare settings.

Generalized XGBoost Method

Sep 15, 2021

Abstract:The XGBoost method has many advantages and is especially suitable for statistical analysis of big data, but its loss function is limited to convex functions. In many specific applications, a nonconvex loss function would be preferable. In this paper, we propose a generalized XGBoost method, which requires weaker loss function condition and involves more general loss functions, including convex loss functions and some non-convex loss functions. Furthermore, this generalized XGBoost method is extended to multivariate loss function to form a more generalized XGBoost method. This method is a multivariate regularized tree boosting method, which can model multiple parameters in most of the frequently-used parametric probability distributions to be fitted by predictor variables. Meanwhile, the related algorithms and some examples in non-life insurance pricing are given.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge