Xiaoning Jin

OffLight: An Offline Multi-Agent Reinforcement Learning Framework for Traffic Signal Control

Nov 25, 2024

Abstract: Efficient traffic signal control (TSC) is essential for urban mobility, but traditional systems struggle to handle the complexity of real-world traffic. Multi-agent Reinforcement Learning (MARL) offers adaptive solutions, but online MARL requires extensive interactions with the environment, making it costly and impractical. Offline MARL mitigates these challenges by using historical traffic data for training but faces significant difficulties with heterogeneous behavior policies in real-world datasets, where mixed-quality data complicates learning. We introduce OffLight, a novel offline MARL framework designed to handle heterogeneous behavior policies in TSC datasets. To improve learning efficiency, OffLight incorporates Importance Sampling (IS) to correct for distributional shifts and Return-Based Prioritized Sampling (RBPS) to focus on high-quality experiences. OffLight utilizes a Gaussian Mixture Variational Graph Autoencoder (GMM-VGAE) to capture the diverse distribution of behavior policies from local observations. Extensive experiments across real-world urban traffic scenarios show that OffLight outperforms existing offline RL methods, achieving up to a 7.8% reduction in average travel time and an 11.2% decrease in queue length. Ablation studies confirm the effectiveness of OffLight's components in handling heterogeneous data and improving policy performance. These results highlight OffLight's scalability and potential to improve urban traffic management without the risks of online learning.
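The two sampling ideas named in the abstract can be illustrated with a minimal sketch. This is not OffLight's implementation: the function names, the min-max normalization of returns, and the clipping threshold are all assumptions chosen for illustration, and the behavior-policy probability is passed in directly rather than estimated by a GMM-VGAE.

```python
import random


def return_priorities(returns, eps=1e-3):
    """Map episode returns to sampling probabilities (hypothetical scheme).

    Higher-return episodes get a larger probability. Min-max scaling is an
    assumption made for this sketch.
    """
    lo, hi = min(returns), max(returns)
    span = hi - lo
    if span == 0:
        # All episodes are equally good: fall back to uniform sampling.
        return [1.0 / len(returns)] * len(returns)
    scores = [(r - lo) / span + eps for r in returns]
    total = sum(scores)
    return [s / total for s in scores]


def importance_weight(target_prob, behavior_prob, clip=10.0):
    """Clipped ratio pi_target(a|s) / pi_behavior(a|s).

    Clipping is a common variance-control choice, assumed here.
    """
    if behavior_prob <= 0.0:
        raise ValueError("behavior_prob must be positive")
    return min(target_prob / behavior_prob, clip)


def sample_episodes(returns, k, seed=0):
    """Draw k episode indices according to the return-based priorities."""
    rng = random.Random(seed)
    probs = return_priorities(returns)
    return rng.choices(range(len(returns)), weights=probs, k=k)


# Example: returns are negative total travel times, so larger is better.
episode_returns = [-120.0, -80.0, -95.0]
probs = return_priorities(episode_returns)
batch = sample_episodes(episode_returns, k=5)
```

In this sketch the priorities decide which episodes enter a training batch, while the importance weight would rescale each sampled transition's loss to account for the gap between the learned policy and the policy that generated the data.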

PyTSC: A Unified Platform for Multi-Agent Reinforcement Learning in Traffic Signal Control

Oct 23, 2024

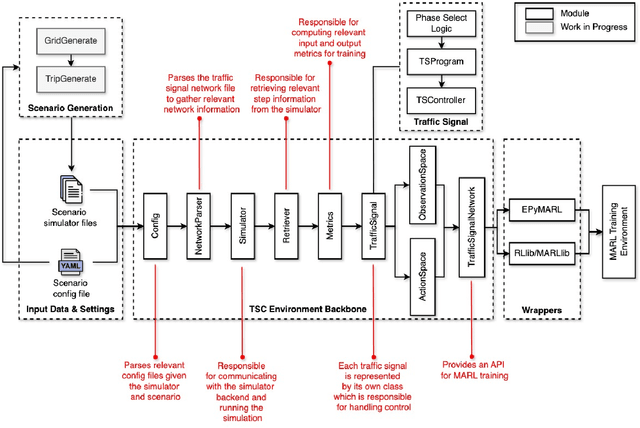

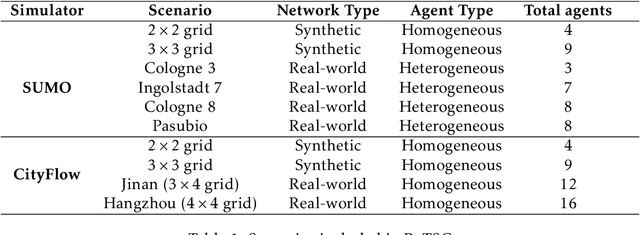

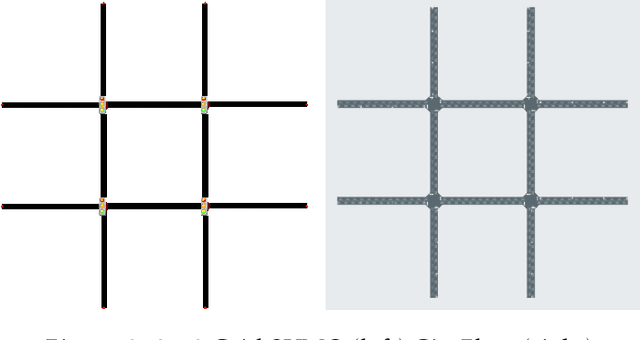

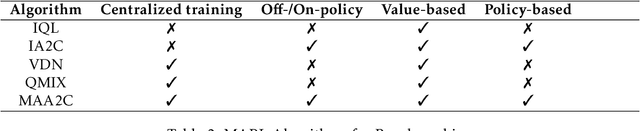

Abstract: Multi-Agent Reinforcement Learning (MARL) presents a promising approach for addressing the complexity of Traffic Signal Control (TSC) in urban environments. However, existing platforms for MARL-based TSC research face challenges such as slow simulation speeds and convoluted, difficult-to-maintain codebases. To address these limitations, we introduce PyTSC, a robust and flexible simulation environment that facilitates the training and evaluation of MARL algorithms for TSC. PyTSC integrates multiple simulators, such as SUMO and CityFlow, and offers a streamlined API, empowering researchers to explore a broad spectrum of MARL approaches efficiently. PyTSC accelerates experimentation and provides new opportunities for advancing intelligent traffic management systems in real-world applications.

Multi-Agent Reinforcement Learning Based on Representational Communication for Large-Scale Traffic Signal Control

Oct 03, 2023

Abstract: Traffic signal control (TSC) is a challenging problem within intelligent transportation systems and has been tackled using multi-agent reinforcement learning (MARL). While centralized approaches are often infeasible for large-scale TSC problems, decentralized approaches provide scalability but introduce new challenges, such as partial observability. Communication plays a critical role in decentralized MARL, as agents must learn to exchange information using messages to better understand the system and achieve effective coordination. Deep MARL has been used to enable inter-agent communication by learning communication protocols in a differentiable manner. However, many deep MARL communication frameworks proposed for TSC allow agents to communicate with all other agents at all times, which can add to the existing noise in the system and degrade overall performance. In this study, we propose a communication-based MARL framework for large-scale TSC. Our framework allows each agent to learn a communication policy that dictates "which" part of the message is sent "to whom". In essence, our framework enables agents to selectively choose the recipients of their messages and exchange variable-length messages with them. This results in a decentralized and flexible communication mechanism in which agents can effectively use the communication channel only when necessary. We designed two networks, a synthetic $4 \times 4$ grid network and a real-world network based on the Pasubio neighborhood in Bologna. Our framework achieved the lowest network congestion compared to related methods, with agents utilizing $\sim 47-65 \%$ of the communication channel. Ablation studies further demonstrated the effectiveness of the communication policies learned within our framework.
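The "which part, to whom" idea can be pictured with a small sketch. It is an illustration under stated assumptions, not the paper's architecture: the gates and masks below are hard-coded, whereas the abstract describes them as learned communication policies, and the function names and the utilization formula are hypothetical.

```python
def send_messages(message, recipient_gate, part_mask):
    """Return {recipient: partial message} for recipients whose gate is open.

    recipient_gate: dict recipient -> bool ("to whom").
    part_mask: dict recipient -> list of 0/1 flags, one per message entry
    ("which part"). Both are fixed inputs here; in a learned system they
    would be produced by each agent's communication policy.
    """
    sent = {}
    for recipient, is_open in recipient_gate.items():
        if is_open:
            mask = part_mask[recipient]
            sent[recipient] = [m for m, keep in zip(message, mask) if keep]
    return sent


def channel_utilization(sent, n_recipients, message_len):
    """Fraction of the full channel (every entry to every recipient) used."""
    used = sum(len(part) for part in sent.values())
    return used / (n_recipients * message_len)


# One agent with a 4-entry message and three possible recipients.
message = [0.2, 0.7, 0.1, 0.5]
gate = {"B": True, "C": False, "D": True}
mask = {"B": [1, 1, 0, 0], "C": [1, 1, 1, 1], "D": [0, 0, 0, 1]}

sent = send_messages(message, gate, mask)
usage = channel_utilization(sent, n_recipients=3, message_len=len(message))
```

Here agent C receives nothing, and B and D receive messages of different lengths, which is the sense in which messages are variable-length and the channel is only partly used.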
