Wazir Ali

An Evaluation of Sindhi Word Embedding in Semantic Analogies and Downstream Tasks

Aug 28, 2024

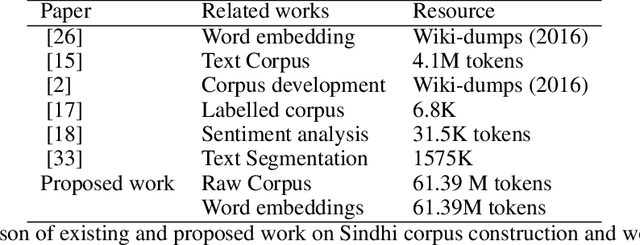

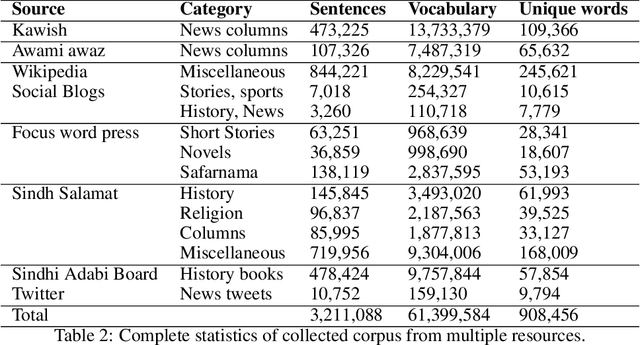

Abstract:In this paper, we propose a new word embedding based corpus consisting of more than 61 million words crawled from multiple web resources. We design a preprocessing pipeline for the filtration of unwanted text from crawled data. Afterwards, the cleaned vocabulary is fed to state-of-the-art continuous-bag-of-words, skip-gram, and GloVe word embedding algorithms. For the evaluation of pretrained embeddings, we use popular intrinsic and extrinsic evaluation approaches. The evaluation results reveal that continuous-bag-of-words and skip-gram perform better than GloVe and existing Sindhi fastText word embedding on both intrinsic and extrinsic evaluation approaches

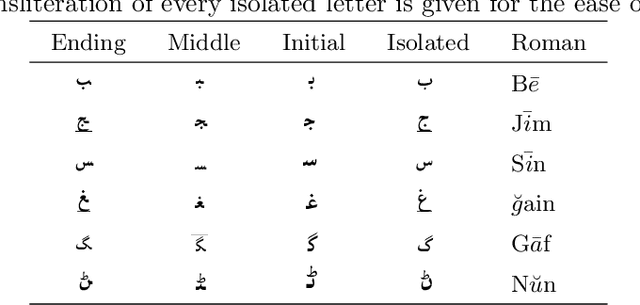

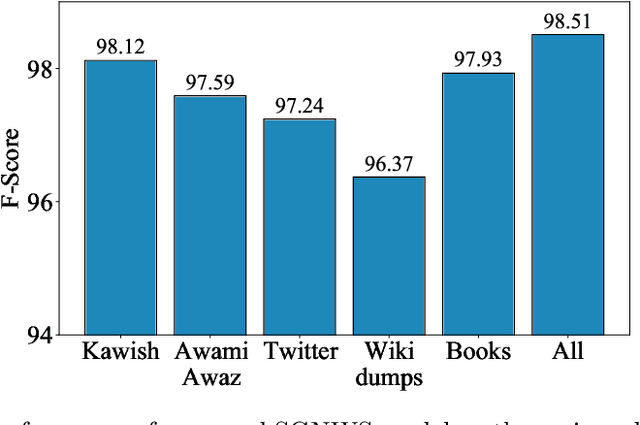

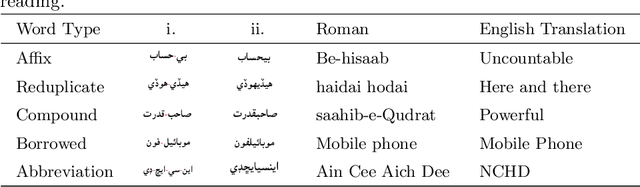

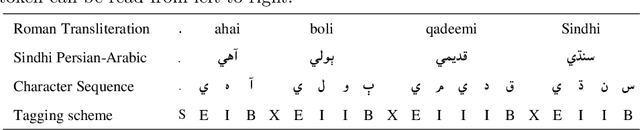

A Subword Guided Neural Word Segmentation Model for Sindhi

Dec 30, 2020

Abstract:Deep neural networks employ multiple processing layers for learning text representations to alleviate the burden of manual feature engineering in Natural Language Processing (NLP). Such text representations are widely used to extract features from unlabeled data. The word segmentation is a fundamental and inevitable prerequisite for many languages. Sindhi is an under-resourced language, whose segmentation is challenging as it exhibits space omission, space insertion issues, and lacks the labeled corpus for segmentation. In this paper, we investigate supervised Sindhi Word Segmentation (SWS) using unlabeled data with a Subword Guided Neural Word Segmenter (SGNWS) for Sindhi. In order to learn text representations, we incorporate subword representations to recurrent neural architecture to capture word information at morphemic-level, which takes advantage of Bidirectional Long-Short Term Memory (BiLSTM), self-attention mechanism, and Conditional Random Field (CRF). Our proposed SGNWS model achieves an F1 value of 98.51% without relying on feature engineering. The empirical results demonstrate the benefits of the proposed model over the existing Sindhi word segmenters.

A New Corpus for Low-Resourced Sindhi Language with Word Embeddings

Dec 02, 2019

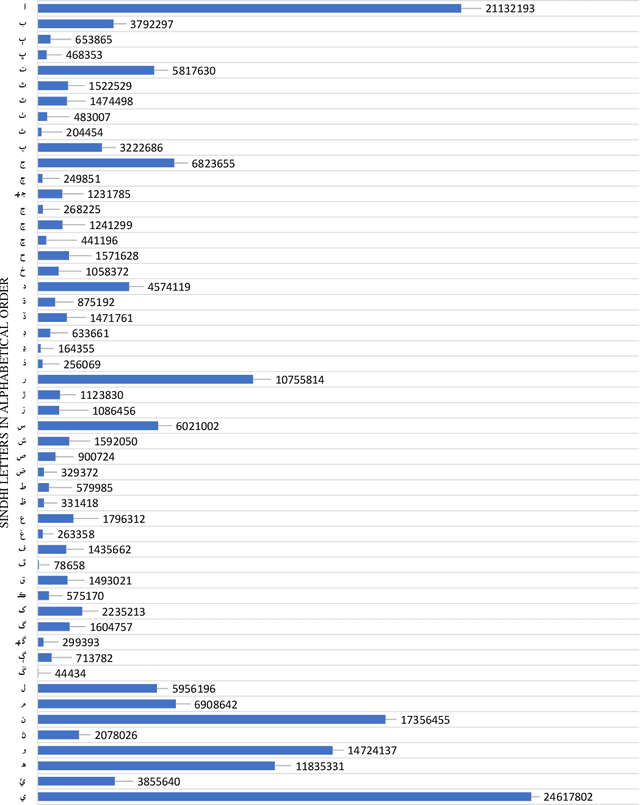

Abstract:Representing words and phrases into dense vectors of real numbers which encode semantic and syntactic properties is a vital constituent in natural language processing (NLP). The success of neural network (NN) models in NLP largely rely on such dense word representations learned on the large unlabeled corpus. Sindhi is one of the rich morphological language, spoken by large population in Pakistan and India lacks corpora which plays an essential role of a test-bed for generating word embeddings and developing language independent NLP systems. In this paper, a large corpus of more than 61 million words is developed for low-resourced Sindhi language for training neural word embeddings. The corpus is acquired from multiple web-resources using web-scrappy. Due to the unavailability of open source preprocessing tools for Sindhi, the prepossessing of such large corpus becomes a challenging problem specially cleaning of noisy data extracted from web resources. Therefore, a preprocessing pipeline is employed for the filtration of noisy text. Afterwards, the cleaned vocabulary is utilized for training Sindhi word embeddings with state-of-the-art GloVe, Skip-Gram (SG), and Continuous Bag of Words (CBoW) word2vec algorithms. The intrinsic evaluation approach of cosine similarity matrix and WordSim-353 are employed for the evaluation of generated Sindhi word embeddings. Moreover, we compare the proposed word embeddings with recently revealed Sindhi fastText (SdfastText) word representations. Our intrinsic evaluation results demonstrate the high quality of our generated Sindhi word embeddings using SG, CBoW, and GloVe as compare to SdfastText word representations.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge