Vikram Saraph

High-Resolution Convolutional Neural Networks on Homomorphically Encrypted Data via Sharding Ciphertexts

Jun 15, 2023

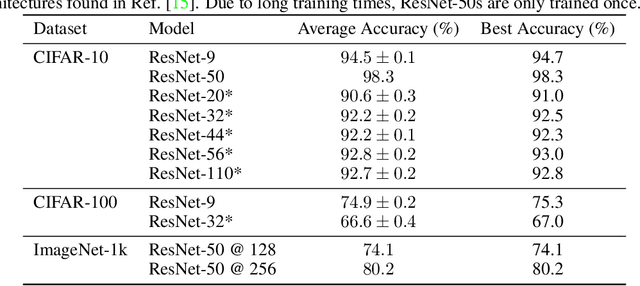

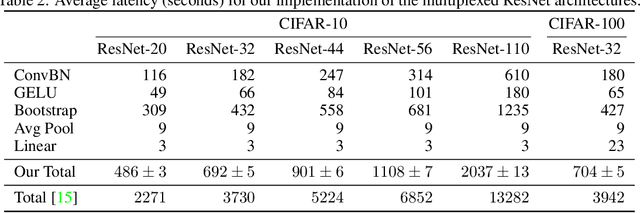

Abstract:Recently, Deep Convolutional Neural Networks (DCNNs) including the ResNet-20 architecture have been privately evaluated on encrypted, low-resolution data with the Residue-Number-System Cheon-Kim-Kim-Song (RNS-CKKS) homomorphic encryption scheme. We extend methods for evaluating DCNNs on images with larger dimensions and many channels, beyond what can be stored in single ciphertexts. Additionally, we simplify and improve the efficiency of the recently introduced multiplexed image format, demonstrating that homomorphic evaluation can work with standard, row-major matrix packing and results in encrypted inference time speedups by $4.6-6.5\times$. We also show how existing DCNN models can be regularized during the training process to further improve efficiency and accuracy. These techniques are applied to homomorphically evaluate a DCNN with high accuracy on the high-resolution ImageNet dataset for the first time, achieving $80.2\%$ top-1 accuracy. We also achieve the highest reported accuracy of homomorphically evaluated CNNs on the CIFAR-10 dataset of $98.3\%$.

CPR: Understanding and Improving Failure Tolerant Training for Deep Learning Recommendation with Partial Recovery

Nov 05, 2020

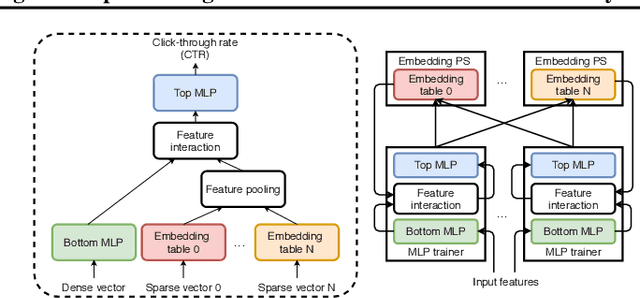

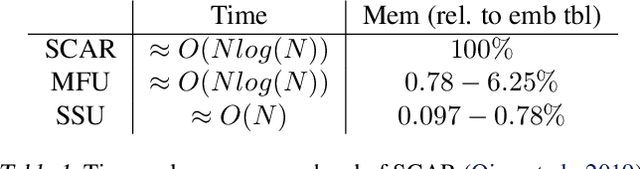

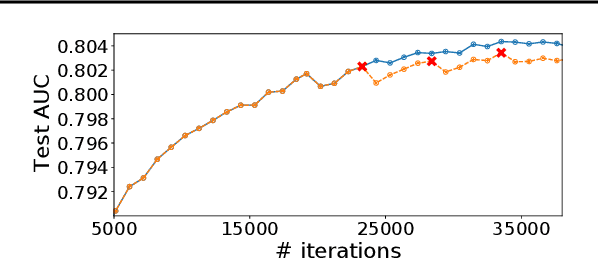

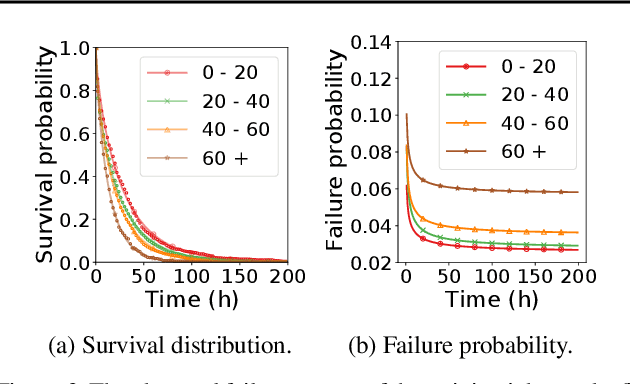

Abstract:The paper proposes and optimizes a partial recovery training system, CPR, for recommendation models. CPR relaxes the consistency requirement by enabling non-failed nodes to proceed without loading checkpoints when a node fails during training, improving failure-related overheads. The paper is the first to the extent of our knowledge to perform a data-driven, in-depth analysis of applying partial recovery to recommendation models and identified a trade-off between accuracy and performance. Motivated by the analysis, we present CPR, a partial recovery training system that can reduce the training time and maintain the desired level of model accuracy by (1) estimating the benefit of partial recovery, (2) selecting an appropriate checkpoint saving interval, and (3) prioritizing to save updates of more frequently accessed parameters. Two variants of CPR, CPR-MFU and CPR-SSU, reduce the checkpoint-related overhead from 8.2-8.5% to 0.53-0.68% compared to full recovery, on a configuration emulating the failure pattern and overhead of a production-scale cluster. While reducing overhead significantly, CPR achieves model quality on par with the more expensive full recovery scheme, training the state-of-the-art recommendation model using Criteo's Ads CTR dataset. Our preliminary results also suggest that CPR can speed up training on a real production-scale cluster, without notably degrading the accuracy.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge