Vikram Kulothungan

AI Regulation and Capitalist Growth: Balancing Innovation, Ethics, and Global Governance

Apr 01, 2025

Abstract: Artificial Intelligence (AI) is increasingly central to economic growth, promising new efficiencies and markets. This economic significance has sparked debate over AI regulation: do rules and oversight bolster long-term growth by building trust and safeguarding the public, or do they constrain innovation and free enterprise? This paper examines the balance between AI regulation and capitalist ideals, focusing on how different approaches to AI data privacy can impact innovation in AI-driven applications. The central question is whether AI regulation enhances or inhibits growth in a capitalist economy. Our analysis synthesizes historical precedents, the current U.S. regulatory landscape, economic projections, legal challenges, and case studies of recent AI policies. We argue that carefully calibrated AI data privacy regulations, which balance innovation incentives with the public interest, can foster sustainable growth by building trust and ensuring responsible data use, while excessive regulation may stifle innovation and entrench incumbents.

Towards Adaptive AI Governance: Comparative Insights from the U.S., EU, and Asia

Apr 01, 2025

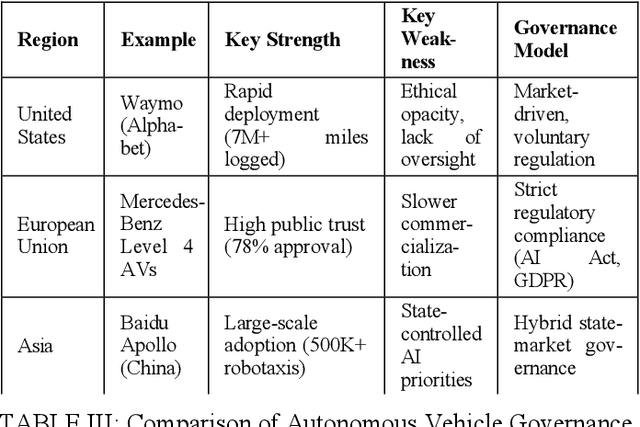

Abstract: Artificial intelligence (AI) trends vary significantly across global regions, shaping the trajectory of innovation, regulation, and societal impact. This variation influences how different regions approach AI development, balancing technological progress with ethical and regulatory considerations. This study conducts a comparative analysis of AI trends in the United States (US), the European Union (EU), and Asia, focusing on three key dimensions: generative AI, ethical oversight, and industrial applications. The US prioritizes market-driven innovation with minimal regulatory constraints, the EU enforces a precautionary risk-based framework emphasizing ethical safeguards, and Asia employs state-guided AI strategies that balance rapid deployment with regulatory oversight. Although these approaches reflect different economic models and policy priorities, their divergence poses challenges to international collaboration, regulatory harmonization, and the development of global AI standards. To address these challenges, this paper synthesizes regional strengths to propose an adaptive AI governance framework that integrates risk-tiered oversight, innovation accelerators, and strategic alignment mechanisms. By bridging governance gaps, this study offers actionable insights for fostering responsible AI development while ensuring a balance between technological progress, ethical imperatives, and regulatory coherence.

A Blockchain-Enabled Approach to Cross-Border Compliance and Trust

Jan 15, 2025

Abstract: As artificial intelligence (AI) systems become increasingly integral to critical infrastructure and global operations, the need for a unified, trustworthy governance framework is more urgent than ever. This paper proposes a novel approach to AI governance, utilizing blockchain and distributed ledger technologies (DLT) to establish a decentralized, globally recognized framework that ensures security, privacy, and trustworthiness of AI systems across borders. The paper presents specific implementation scenarios within the financial sector, outlines a phased deployment timeline over the next decade, and addresses potential challenges with solutions grounded in current research. By synthesizing advancements in blockchain, AI ethics, and cybersecurity, this paper offers a comprehensive roadmap for a decentralized AI governance framework capable of adapting to the complex and evolving landscape of global AI regulation.
