Vijendran V Gopalan

Exploring the role of Input and Output Layers of a Deep Neural Network in Adversarial Defense

Jun 02, 2020

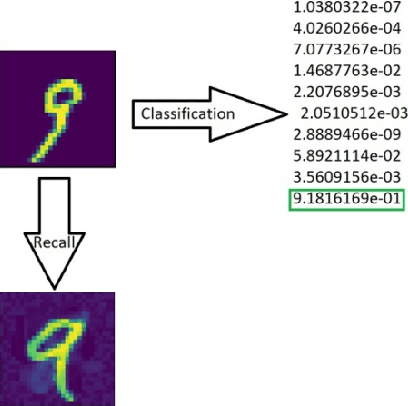

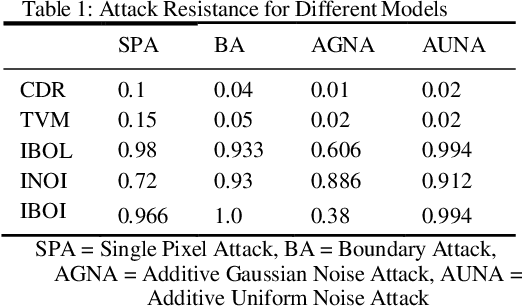

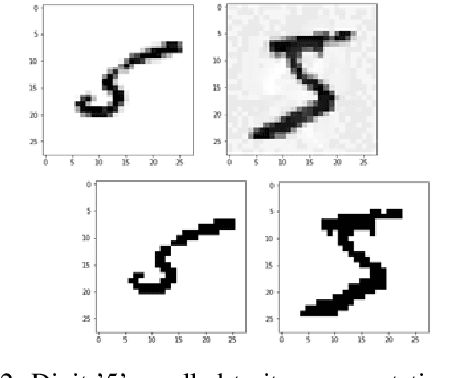

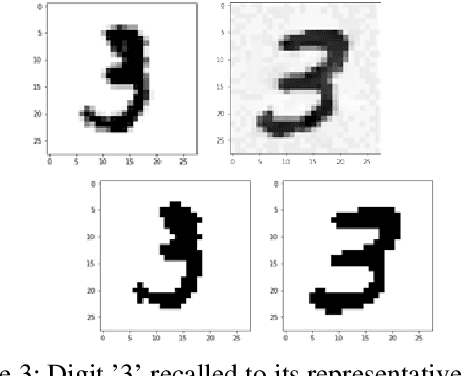

Abstract: Deep neural networks are learning models that have achieved state-of-the-art performance in many fields, such as prediction, computer vision, and language processing. However, it has been shown that certain inputs exist that would not normally trick a human but can mislead the model completely. These are known as adversarial inputs, and they pose a serious security threat when such models are used in real-world applications. In this work, we analyze the resistance of three different classes of fully connected dense networks against the rarely tested non-gradient-based adversarial attacks. These classes are created by manipulating the input and output layers. We show empirically that, owing to certain characteristics of these networks, they provide high robustness against these attacks and can be used in fine-tuning other models to strengthen their defense against adversarial attacks.
