Victor Nascimento Ribeiro

Efficient Video-Based ALPR System Using YOLO and Visual Rhythm

Jan 08, 2025

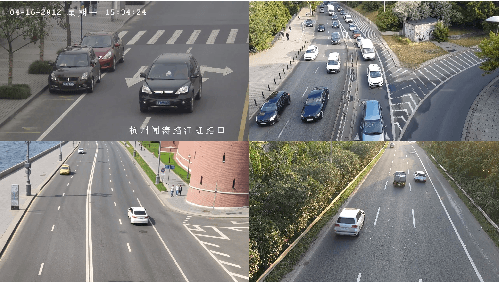

Abstract:Automatic License Plate Recognition (ALPR) involves extracting vehicle license plate information from image or a video capture. These systems have gained popularity due to the wide availability of low-cost surveillance cameras and advances in Deep Learning. Typically, video-based ALPR systems rely on multiple frames to detect the vehicle and recognize the license plates. Therefore, we propose a system capable of extracting exactly one frame per vehicle and recognizing its license plate characters from this singular image using an Optical Character Recognition (OCR) model. Early experiments show that this methodology is viable.

Efficient License Plate Recognition in Videos Using Visual Rhythm and Accumulative Line Analysis

Jan 08, 2025

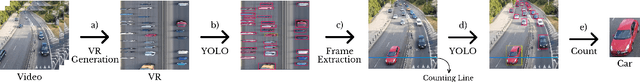

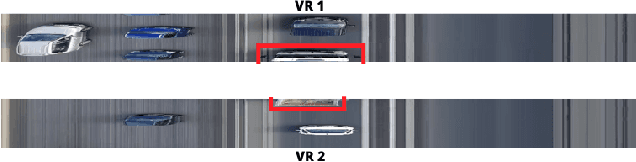

Abstract:Video-based Automatic License Plate Recognition (ALPR) involves extracting vehicle license plate text information from video captures. Traditional systems typically rely heavily on high-end computing resources and utilize multiple frames to recognize license plates, leading to increased computational overhead. In this paper, we propose two methods capable of efficiently extracting exactly one frame per vehicle and recognizing its license plate characters from this single image, thus significantly reducing computational demands. The first method uses Visual Rhythm (VR) to generate time-spatial images from videos, while the second employs Accumulative Line Analysis (ALA), a novel algorithm based on single-line video processing for real-time operation. Both methods leverage YOLO for license plate detection within the frame and a Convolutional Neural Network (CNN) for Optical Character Recognition (OCR) to extract textual information. Experiments on real videos demonstrate that the proposed methods achieve results comparable to traditional frame-by-frame approaches, with processing speeds three times faster.

Combining YOLO and Visual Rhythm for Vehicle Counting

Jan 08, 2025

Abstract:Video-based vehicle detection and counting play a critical role in managing transport infrastructure. Traditional image-based counting methods usually involve two main steps: initial detection and subsequent tracking, which are applied to all video frames, leading to a significant increase in computational complexity. To address this issue, this work presents an alternative and more efficient method for vehicle detection and counting. The proposed approach eliminates the need for a tracking step and focuses solely on detecting vehicles in key video frames, thereby increasing its efficiency. To achieve this, we developed a system that combines YOLO, for vehicle detection, with Visual Rhythm, a way to create time-spatial images that allows us to focus on frames that contain useful information. Additionally, this method can be used for counting in any application involving unidirectional moving targets to be detected and identified. Experimental analysis using real videos shows that the proposed method achieves mean counting accuracy around 99.15% over a set of videos, with a processing speed three times faster than tracking based approaches.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge