Verónica Rodríguez Tribaldos

Deep Learning on Real Geophysical Data: A Case Study for Distributed Acoustic Sensing Research

Oct 15, 2020

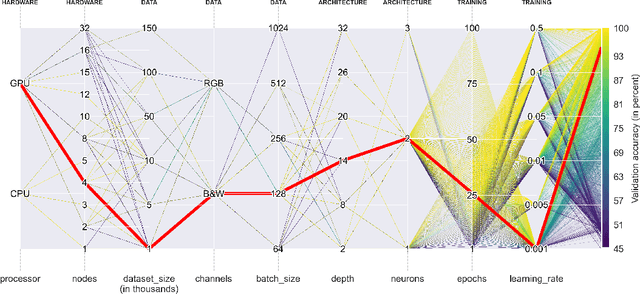

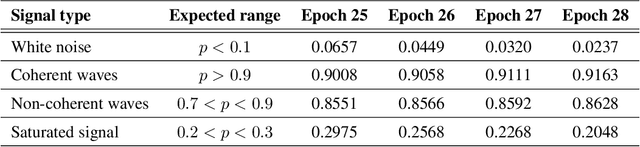

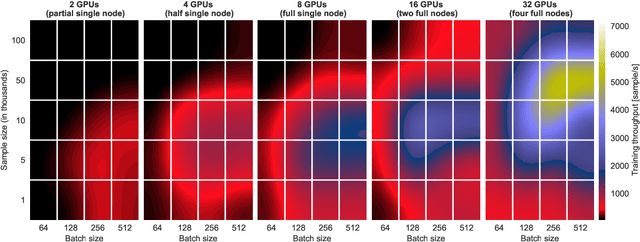

Abstract: Deep learning approaches for real, large, and complex scientific data sets can be very challenging to design. In this work, we present a complete search for a finely tuned and efficiently scaled deep learning classifier to identify usable energy in seismic data acquired using Distributed Acoustic Sensing (DAS). While using only a subset of labeled images during training, we were able to identify suitable models that generalize accurately to unknown signal patterns. We show that by using 16 times more GPUs, we can increase the training speed by more than two orders of magnitude on a 50,000-image data set.

Deep Learning for Surface Wave Identification in Distributed Acoustic Sensing Data

Oct 15, 2020

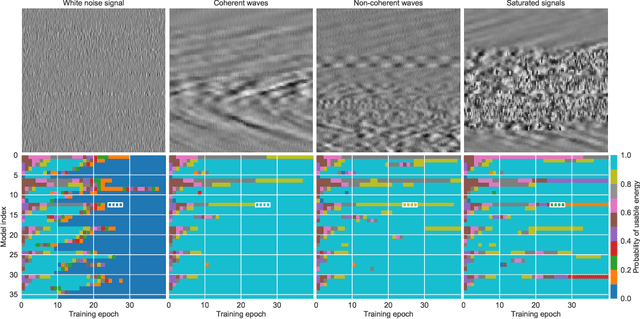

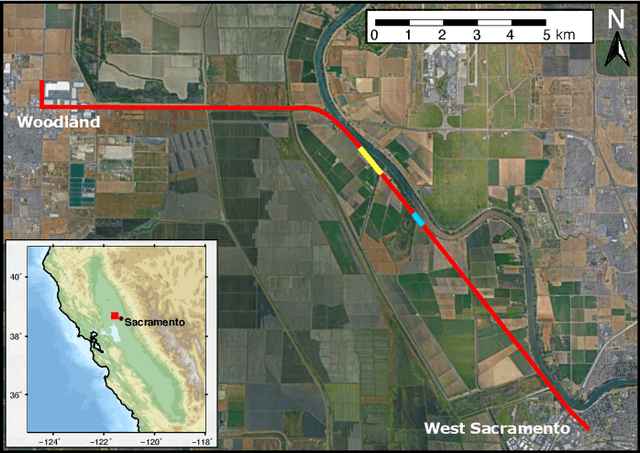

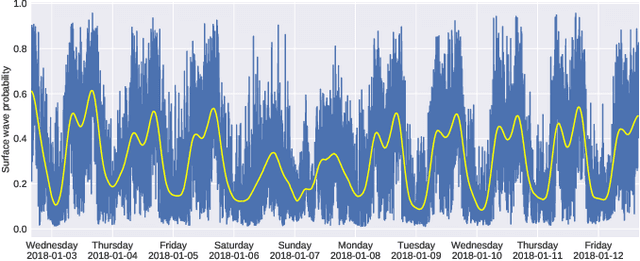

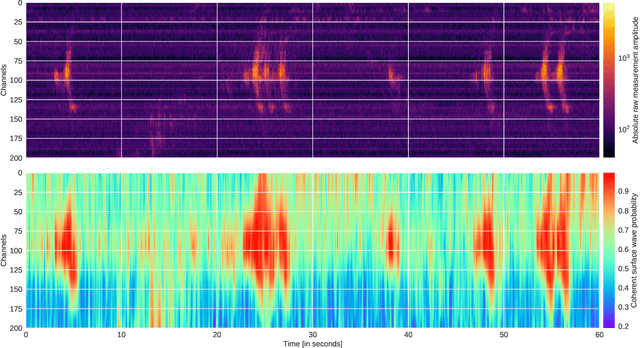

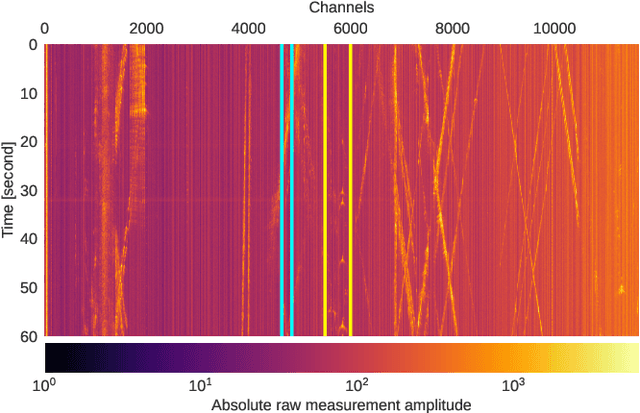

Abstract: Moving loads such as cars and trains are very useful sources of seismic waves, which can be analyzed to retrieve information on the seismic velocity of subsurface materials using the techniques of ambient noise seismology. This information is valuable for a variety of applications such as geotechnical characterization of the near-surface, seismic hazard evaluation, and groundwater monitoring. However, for such processes to converge quickly, data segments with appropriate noise energy should be selected. Distributed Acoustic Sensing (DAS) is a novel sensing technique that enables acquisition of these data at very high spatial and temporal resolution over tens of kilometers. One major challenge when using DAS technology is the large volume of data it produces, which makes finding regions of useful energy a significant Big Data challenge. In this work, we present a highly scalable and efficient approach to process real, complex DAS data by integrating physics knowledge acquired during a data exploration phase with deep supervised learning to identify "useful" coherent surface waves generated by anthropogenic activity, a class of seismic waves that is abundant in these recordings and is useful for geophysical imaging. Data exploration and training were done on 130 gigabytes (GB) of DAS measurements. Using parallel computing, we were able to run inference on an additional 170 GB of data (the equivalent of 10 days' worth of recordings) in less than 30 minutes. Our method provides interpretable patterns describing the interaction of ground-based human activities with the buried sensors.
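To put the inference figures quoted above in perspective, the following is a rough back-of-the-envelope sketch, not part of the authors' method. It assumes 1 GB = 1000 MB and treats "less than 30 minutes" as an upper bound, so the results are lower bounds; the function and variable names are illustrative only.

```python
# Figures quoted in the abstract.
INFERENCE_GB = 170.0        # data processed at inference time
INFERENCE_MINUTES = 30.0    # upper bound: "less than 30 minutes"
RECORDING_DAYS = 10.0       # recording time covered by those 170 GB


def throughput_mb_per_s(gigabytes, minutes):
    """Average throughput in MB/s, assuming 1 GB = 1000 MB."""
    return gigabytes * 1000.0 / (minutes * 60.0)


def realtime_factor(recording_days, minutes):
    """How many times faster than real time the data were processed."""
    recording_minutes = recording_days * 24.0 * 60.0
    return recording_minutes / minutes


# Both values are lower bounds because the 30 minutes is an upper bound.
min_throughput = throughput_mb_per_s(INFERENCE_GB, INFERENCE_MINUTES)
min_realtime_factor = realtime_factor(RECORDING_DAYS, INFERENCE_MINUTES)
gb_per_day = INFERENCE_GB / RECORDING_DAYS

print(f"Acquisition rate: {gb_per_day:.1f} GB per day")
print(f"Inference throughput: at least {min_throughput:.1f} MB/s")
print(f"Faster than real time by a factor of at least {min_realtime_factor:.0f}")
```

Under these assumptions, the quoted numbers correspond to roughly 17 GB of recordings per day, processed several hundred times faster than they were acquired.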
