Tulasi Menon

QnAMaker: Data to Bot in 2 Minutes

Mar 19, 2020

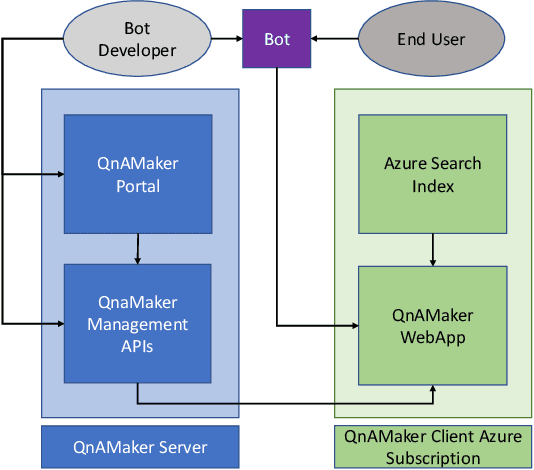

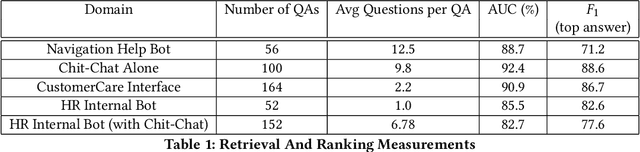

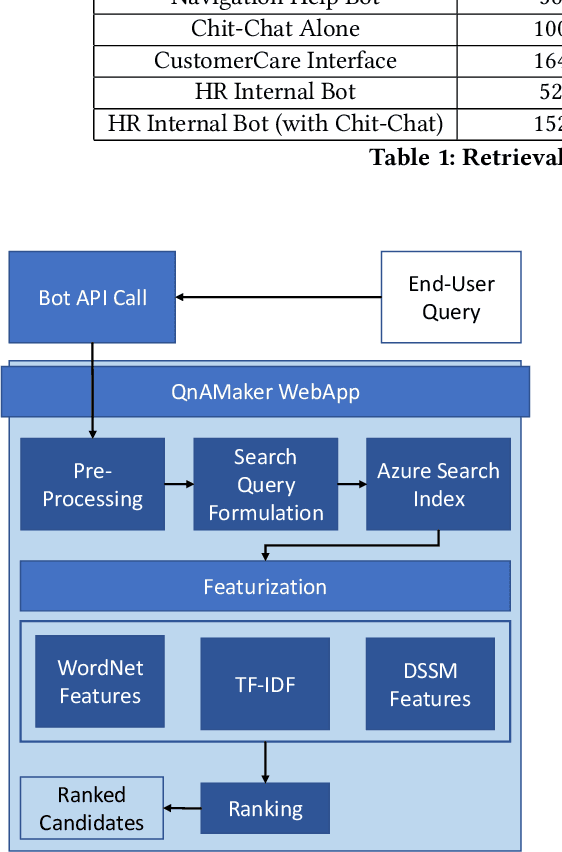

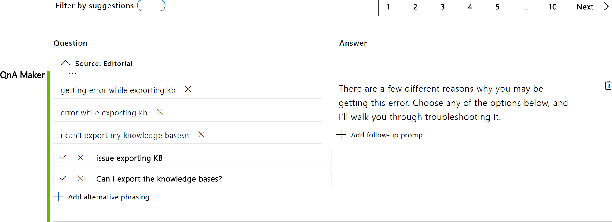

Abstract: A bot that supports seamless conversation is a much-desired feature for the websites and mobile apps of today's products and services. Such bots significantly reduce the load on human support by handling frequent, directly answerable questions. Many services maintain large reference documents, such as FAQ pages, that are hard for users to browse. A conversational layer over this raw data can lower traffic to human support by a great margin. We demonstrate QnAMaker, a service that creates a conversational layer over semi-structured data such as FAQ pages, product manuals, and support documents. QnAMaker is the popular choice for Extraction and Question-Answering as a service and is used by over 15,000 bots in production. It is also used by search interfaces, not just bots.
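As a rough illustration of what a conversational layer over FAQ data involves, the sketch below answers a query by matching it against already-extracted question-answer pairs. It is not QnAMaker's actual extraction or ranking method, which the abstract does not describe: the Jaccard word-overlap score, the `threshold` value, the function names, and the sample FAQ entries are all assumptions made for illustration.

```python
import re


def tokenize(text):
    """Lowercase the text and return its set of alphanumeric tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def answer(query, qa_pairs, threshold=0.3):
    """Return the answer whose question best overlaps the query, or None.

    Scoring is Jaccard similarity over word sets (an assumed stand-in
    for a real ranker). Queries scoring below `threshold` get no answer.
    """
    query_tokens = tokenize(query)
    best_score, best_answer = 0.0, None
    for question, candidate in qa_pairs:
        question_tokens = tokenize(question)
        union = query_tokens | question_tokens
        score = len(query_tokens & question_tokens) / len(union) if union else 0.0
        if score > best_score:
            best_score, best_answer = score, candidate
    return best_answer if best_score >= threshold else None


# Hypothetical FAQ pairs, as if already extracted from a support page.
faq = [
    ("How do I reset my password?",
     "Use the 'Forgot password' link on the sign-in page."),
    ("What payment methods do you accept?",
     "We accept credit cards and bank transfers."),
]

print(answer("how can I reset my password", faq))
print(answer("tell me a joke", faq))
```

Returning `None` below the threshold lets the caller hand the query to a human or a default reply rather than give a poorly matched answer.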

A Trustworthy, Responsible and Interpretable System to Handle Chit Chat in Conversational Bots

Nov 23, 2018

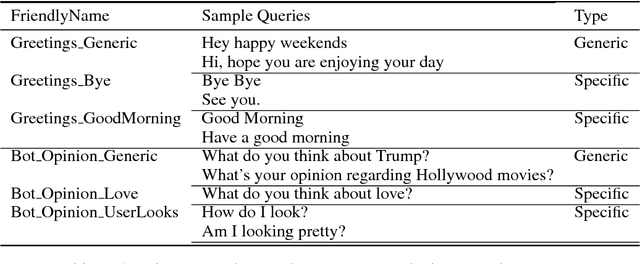

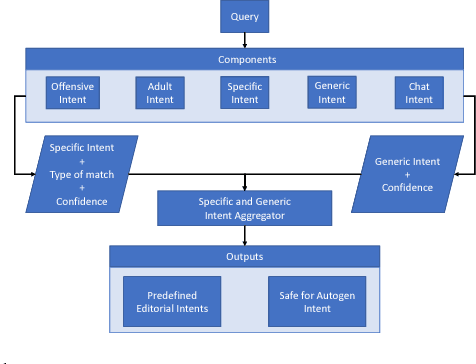

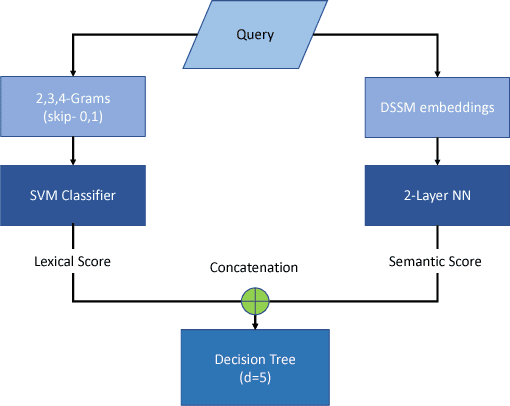

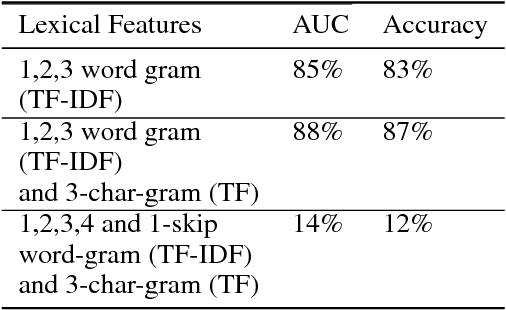

Abstract: Most often, chat-bots are built to serve the purpose of a search engine or a human assistant: their primary goal is to provide information to the user or help them complete a task. However, these chat-bots are incapable of responding to unscripted queries such as "Hi, what's up" or "What's your favourite food". Human evaluation judgments show that 4 humans reach a consensus on the intent of a given chat-domain query only 77% of the time, which makes evident how non-trivial this task is. In our work, we show why it is difficult to break the chitchat space into clearly defined intents. We propose a system to handle this task in chat-bots, keeping in mind scalability, interpretability, appropriateness, trustworthiness, relevance and coverage. Our work introduces a pipeline for query understanding in chitchat using hierarchical intents, as well as a way to use seq-seq auto-generation models in professional bots. We explore an interpretable model for chat domain detection and also show how various components, such as adult/offensive classification, grammars/regex patterns, curated personality-based responses, generic guided evasive responses and response generation models, can be combined in a scalable way to solve this problem.
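One way to picture how the components named in the abstract might be combined is a simple cascade, sketched below. The ordering of stages, the word list, the regex patterns, the canned responses, and the `generate` callback are all hypothetical and not taken from the paper. Chat domain detection and hierarchical intents are omitted for brevity.

```python
import re

# All values below are illustrative placeholders, not the paper's resources.
OFFENSIVE_TERMS = {"stupid", "idiot"}
PATTERNS = [
    (re.compile(r"\b(hi|hello|hey)\b", re.I), "greeting"),
    (re.compile(r"\bfavou?rite\b", re.I), "preference"),
]
CURATED = {"greeting": "Hello! How can I help you today?"}
EVASIVE = "I'd rather not say, but I'm happy to help with something else."


def respond(query, generate=None):
    """Return (stage_or_intent, response) from the first stage that fires.

    Assumed order: offensive filter, regex intent patterns with curated
    or evasive replies, then an optional generation model, then fallback.
    """
    words = set(re.findall(r"[a-z']+", query.lower()))
    if words & OFFENSIVE_TERMS:
        return ("offensive", "Let's keep things respectful.")
    for pattern, intent in PATTERNS:
        if pattern.search(query):
            # Use a curated reply when one exists, otherwise stay evasive.
            return (intent, CURATED.get(intent, EVASIVE))
    if generate is not None:
        # `generate` stands in for a seq-seq response model.
        return ("generated", generate(query))
    return ("fallback", EVASIVE)


print(respond("Hi, what's up"))
print(respond("What's your favourite food"))
print(respond("tell me a story", generate=lambda q: "Once upon a time..."))
```

Returning the stage name alongside the response keeps each reply traceable to the rule that produced it, which is one simple way to support the interpretability goal the abstract mentions.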
