Tianling Yang

Studying Up Machine Learning Data: Why Talk About Bias When We Mean Power?

Sep 16, 2021Abstract:Research in machine learning (ML) has primarily argued that models trained on incomplete or biased datasets can lead to discriminatory outputs. In this commentary, we propose moving the research focus beyond bias-oriented framings by adopting a power-aware perspective to "study up" ML datasets. This means accounting for historical inequities, labor conditions, and epistemological standpoints inscribed in data. We draw on HCI and CSCW work to support our argument, critically analyze previous research, and point at two co-existing lines of work within our community -- one bias-oriented, the other power-aware. This way, we highlight the need for dialogue and cooperation in three areas: data quality, data work, and data documentation. In the first area, we argue that reducing societal problems to "bias" misses the context-based nature of data. In the second one, we highlight the corporate forces and market imperatives involved in the labor of data workers that subsequently shape ML datasets. Finally, we propose expanding current transparency-oriented efforts in dataset documentation to reflect the social contexts of data design and production.

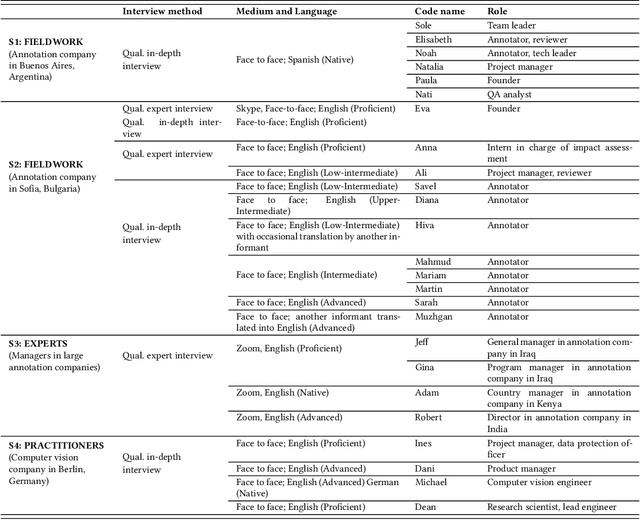

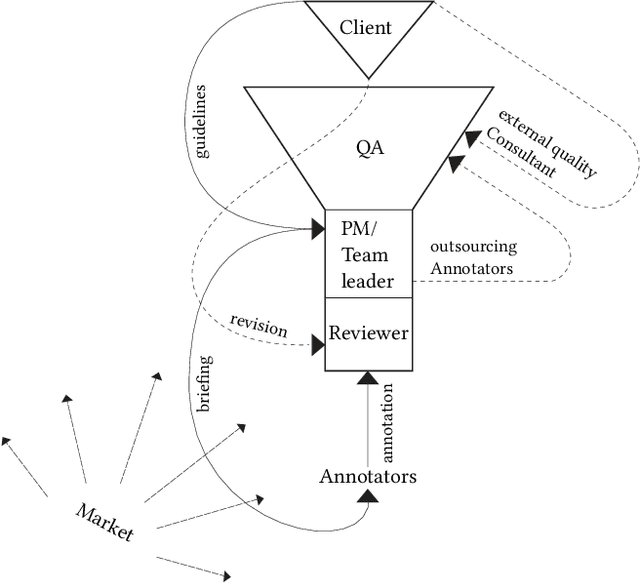

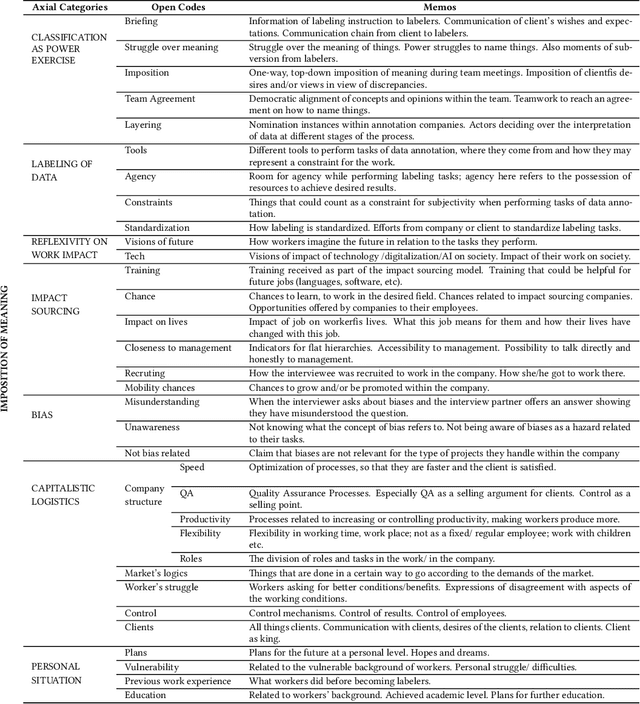

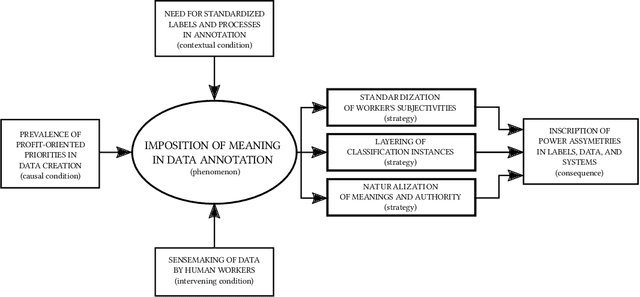

Between Subjectivity and Imposition: Power Dynamics in Data Annotation for Computer Vision

Jul 30, 2020

Abstract:The interpretation of data is fundamental to machine learning. This paper investigates practices of image data annotation as performed in industrial contexts. We define data annotation as a sense-making practice, where annotators assign meaning to data through the use of labels. Previous human-centered investigations have largely focused on annotators subjectivity as a major cause for biased labels. We propose a wider view on this issue: guided by constructivist grounded theory, we conducted several weeks of fieldwork at two annotation companies. We analyzed which structures, power relations, and naturalized impositions shape the interpretation of data. Our results show that the work of annotators is profoundly informed by the interests, values, and priorities of other actors above their station. Arbitrary classifications are vertically imposed on annotators, and through them, on data. This imposition is largely naturalized. Assigning meaning to data is often presented as a technical matter. This paper shows it is, in fact, an exercise of power with multiple implications for individuals and society.

Add to Chrome

Add to Chrome Add to Firefox

Add to Firefox Add to Edge

Add to Edge